Mirror of https://github.com/songquanpeng/one-api.git (synced 2025-12-28 02:35:56 +08:00)

Merge remote-tracking branch 'origin/upstream/main'


17

Dockerfile


@@ -7,7 +7,7 @@ COPY ./web .
 COPY ./VERSION .
 RUN DISABLE_ESLINT_PLUGIN='true' REACT_APP_VERSION=$(cat VERSION) npm run build
 
-FROM golang:1.21.4 AS builder2
+FROM golang:1.21.5-bullseye AS builder2
 
 ENV GO111MODULE=on \
     CGO_ENABLED=1 \
@@ -20,12 +20,17 @@ COPY . .
 COPY --from=builder /build/build ./web/build
 RUN go build -ldflags "-s -w -X 'one-api/common.Version=$(cat VERSION)' -extldflags '-static'" -o one-api
 
-FROM alpine
+FROM debian:bullseye
 
-RUN apk update \
-    && apk upgrade \
-    && apk add --no-cache ca-certificates tzdata \
-    && update-ca-certificates 2>/dev/null || true
+RUN apt-get update
+RUN apt-get install -y --no-install-recommends ca-certificates haveged tzdata \
+    # for google-chrome
+    # libappindicator1 fonts-liberation xdg-utils wget \
+    # libasound2 libatk-bridge2.0-0 libatspi2.0-0 libcurl3-gnutls libcurl3-nss \
+    # libcurl4 libcurl3 libdrm2 libgbm1 libgtk-3-0 libgtk-4-1 libnspr4 libnss3 \
+    # libu2f-udev libvulkan1 libxkbcommon0 \
+    && update-ca-certificates 2>/dev/null || true \
+    && rm -rf /var/lib/apt/lists/*
 
 COPY --from=builder2 /build/one-api /
 EXPOSE 3000

416

README.md


@@ -4,5 +4,421 @@ docker image: `ppcelery/one-api:latest`
 
 ## New Features
 
+<<<<<<< HEAD
 - update token usage by API
 - support gpt-vision
+=======
+<p align="center">
+  <a href="https://raw.githubusercontent.com/songquanpeng/one-api/main/LICENSE">
+    <img src="https://img.shields.io/github/license/songquanpeng/one-api?color=brightgreen" alt="license">
+  </a>
+  <a href="https://github.com/songquanpeng/one-api/releases/latest">
+    <img src="https://img.shields.io/github/v/release/songquanpeng/one-api?color=brightgreen&include_prereleases" alt="release">
+  </a>
+  <a href="https://hub.docker.com/repository/docker/justsong/one-api">
+    <img src="https://img.shields.io/docker/pulls/justsong/one-api?color=brightgreen" alt="docker pull">
+  </a>
+  <a href="https://github.com/songquanpeng/one-api/releases/latest">
+    <img src="https://img.shields.io/github/downloads/songquanpeng/one-api/total?color=brightgreen&include_prereleases" alt="release">
+  </a>
+  <a href="https://goreportcard.com/report/github.com/songquanpeng/one-api">
+    <img src="https://goreportcard.com/badge/github.com/songquanpeng/one-api" alt="GoReportCard">
+  </a>
+</p>
+
+<p align="center">
+  <a href="https://github.com/songquanpeng/one-api#部署">Deployment Guide</a>
+  ·
+  <a href="https://github.com/songquanpeng/one-api#使用方法">Usage</a>
+  ·
+  <a href="https://github.com/songquanpeng/one-api/issues">Feedback</a>
+  ·
+  <a href="https://github.com/songquanpeng/one-api#截图展示">Screenshots</a>
+  ·
+  <a href="https://openai.justsong.cn/">Live Demo</a>
+  ·
+  <a href="https://github.com/songquanpeng/one-api#常见问题">FAQ</a>
+  ·
+  <a href="https://github.com/songquanpeng/one-api#相关项目">Related Projects</a>
+  ·
+  <a href="https://iamazing.cn/page/reward">Donate</a>
+</p>
+
+> [!NOTE]
+> This project is open source. Users must comply with OpenAI's [Terms of Use](https://openai.com/policies/terms-of-use) and all applicable **laws and regulations**; it must not be used for illegal purposes.
+>
+> In accordance with the [Interim Measures for the Management of Generative AI Services](http://www.cac.gov.cn/2023-07/13/c_1690898327029107.htm), do not provide any unregistered generative AI service to the public in China.
+
+> [!WARNING]
+> The latest image pulled with Docker may be an `alpha` release; pin a version manually if you need stability.
+
+> [!WARNING]
+> After logging in as the root user for the first time, be sure to change the default password `123456`!
+
+## Features
+1. Supports a range of large language models:
+   + [x] [OpenAI ChatGPT model series](https://platform.openai.com/docs/guides/gpt/chat-completions-api) (including the [Azure OpenAI API](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference))
+   + [x] [Anthropic Claude model series](https://anthropic.com)
+   + [x] [Google PaLM2 model series](https://developers.generativeai.google)
+   + [x] [Baidu ERNIE Bot (Wenxin Yiyan) model series](https://cloud.baidu.com/doc/WENXINWORKSHOP/index.html)
+   + [x] [Alibaba Tongyi Qianwen model series](https://help.aliyun.com/document_detail/2400395.html)
+   + [x] [iFLYTEK Spark cognitive large model](https://www.xfyun.cn/doc/spark/Web.html)
+   + [x] [Zhipu ChatGLM model series](https://bigmodel.cn)
+   + [x] [360 Zhinao](https://ai.360.cn)
+   + [x] [Tencent Hunyuan large model](https://cloud.tencent.com/document/product/1729)
+2. Supports configuring mirrors and many third-party proxy services:
+   + [x] [OpenAI-SB](https://openai-sb.com)
+   + [x] [CloseAI](https://referer.shadowai.xyz/r/2412)
+   + [x] [API2D](https://api2d.com/r/197971)
+   + [x] [OhMyGPT](https://aigptx.top?aff=uFpUl2Kf)
+   + [x] [AI Proxy](https://aiproxy.io/?i=OneAPI) (invitation code: `OneAPI`)
+   + [x] Custom channels: for example, third-party proxy services not listed here
+3. Supports accessing multiple channels through **load balancing**.
+4. Supports **stream mode**, giving a typewriter effect through streaming.
+5. Supports **multi-node deployment**; [see here](#多机部署).
+6. Supports **token management**: set a token's expiry time and quota.
+7. Supports **redemption code management**: generate and export redemption codes in batches, and use them to top up accounts.
+8. Supports **channel management**, including batch channel creation.
+9. Supports **user groups** and **channel groups**, with a different rate multiplier per group.
+10. Supports **setting the model list** per channel.
+11. Supports **viewing quota usage details**.
+12. Supports **user invitation rewards**.
+13. Supports displaying quota in US dollars.
+14. Supports publishing announcements, setting a top-up link, and setting the initial quota for new users.
+15. Supports model mapping, which redirects the model a user requests. Do not set it unless necessary: once set, the request body is rebuilt instead of being passed through, so some fields that are not yet officially supported will fail to be forwarded.
+16. Supports automatic retry on failure.
+17. Supports the image generation API.
+18. Supports [Cloudflare AI Gateway](https://developers.cloudflare.com/ai-gateway/providers/openai/): enter `https://gateway.ai.cloudflare.com/v1/ACCOUNT_TAG/GATEWAY/openai` in the proxy field of the channel settings.
+19. Supports rich **customization**:
+    1. Custom system name, logo, and footer.
+    2. Custom home page and about page, written in HTML & Markdown or embedded from a separate web page via iframe.
+20. Supports accessing the management API with a system access token (a bearer token used in place of the cookie; you can capture traffic yourself to see how the API is used).
+21. Supports Cloudflare Turnstile user verification.
+22. Supports user management with **multiple login and registration methods**:
+    + Email login and registration (with a registration email whitelist), plus password reset by email.
+    + [GitHub OAuth](https://github.com/settings/applications/new).
+    + WeChat Official Account authorization (requires separately deploying [WeChat Server](https://github.com/songquanpeng/wechat-server)).
+

+## Deployment
+### Deploying with Docker
+```shell
+# Deployment command using SQLite:
+docker run --name one-api -d --restart always -p 3000:3000 -e TZ=Asia/Shanghai -v /home/ubuntu/data/one-api:/data justsong/one-api
+# Deployment command using MySQL: add `-e SQL_DSN="root:123456@tcp(localhost:3306)/oneapi"` to the command above and adjust the connection parameters for your database; if unsure how, see the environment variables section below.
+# For example:
+docker run --name one-api -d --restart always -p 3000:3000 -e SQL_DSN="root:123456@tcp(localhost:3306)/oneapi" -e TZ=Asia/Shanghai -v /home/ubuntu/data/one-api:/data justsong/one-api
+```
+
+Here the first `3000` in `-p 3000:3000` is the host port and can be changed as needed.
+
+Data and logs are saved in the host directory `/home/ubuntu/data/one-api`; make sure this directory exists and is writable, or change it to a suitable directory.
+
+If startup fails, add `--privileged=true`; see https://github.com/songquanpeng/one-api/issues/482 for details.
+
+If the image above cannot be pulled, try the GitHub Docker image by replacing `justsong/one-api` with `ghcr.io/songquanpeng/one-api`.
+
+If you expect high concurrency, **be sure** to set `SQL_DSN`; see the [environment variables](#环境变量) section below.
+
+Update command: `docker run --rm -v /var/run/docker.sock:/var/run/docker.sock containrrr/watchtower -cR`
+
+Reference Nginx configuration:
+```
+server{
+   server_name openai.justsong.cn;  # change this to your own domain
+
+   location / {
+          client_max_body_size  64m;
+          proxy_http_version 1.1;
+          proxy_pass http://localhost:3000;  # change this to your own port
+          proxy_set_header Host $host;
+          proxy_set_header X-Forwarded-For $remote_addr;
+          proxy_cache_bypass $http_upgrade;
+          proxy_set_header Accept-Encoding gzip;
+          proxy_read_timeout 300s;  # GPT-4 needs a longer timeout; adjust as needed
+   }
+}
+```
+
+Then configure HTTPS with Let's Encrypt's certbot:
+```bash
+# Install certbot on Ubuntu:
+sudo snap install --classic certbot
+sudo ln -s /snap/bin/certbot /usr/bin/certbot
+# Generate the certificate & update the Nginx configuration
+sudo certbot --nginx
+# Follow the prompts
+# Restart Nginx
+sudo service nginx restart
+```
+
+The initial account username is `root` and the password is `123456`.
+
+
+### Deploying with Docker Compose
+
+> Only the startup method differs; the parameters are unchanged. See the Docker deployment section above.
+
+```shell
+# Currently supports starting with MySQL; data is stored in the ./data/mysql folder
+docker-compose up -d
+
+# Check the deployment status
+docker-compose ps
+```
+
+### Manual deployment
+1. Download the executable from [GitHub Releases](https://github.com/songquanpeng/one-api/releases/latest) or build from source:
+   ```shell
+   git clone https://github.com/songquanpeng/one-api.git
+
+   # Build the frontend
+   cd one-api/web
+   npm install
+   npm run build
+
+   # Build the backend
+   cd ..
+   go mod download
+   go build -ldflags "-s -w" -o one-api
+   ````
+2. Run:
+   ```shell
+   chmod u+x one-api
+   ./one-api --port 3000 --log-dir ./logs
+   ```
+3. Visit [http://localhost:3000/](http://localhost:3000/) and log in. The initial account username is `root` and the password is `123456`.
+
+For a more detailed deployment guide, [see here](https://iamazing.cn/page/how-to-deploy-a-website).
+
+### Multi-node deployment
+1. Set `SESSION_SECRET` to the same value on all servers.
+2. `SQL_DSN` must be set, using MySQL rather than SQLite, with all servers connecting to the same database.
+3. Every slave server must set `NODE_TYPE` to `slave`; if unset, the node defaults to master.
+4. Once `SYNC_FREQUENCY` is set, the server periodically syncs configuration from the database. When using a remote database, setting this and enabling Redis is recommended on both master and slave nodes.
+5. Slave servers can optionally set `FRONTEND_BASE_URL` to redirect page requests to the master server.
+6. Install Redis **separately** on each slave server and set `REDIS_CONN_STRING`; this allows zero database access while the cache has not expired, which reduces latency.
+7. If the master server also has high database latency, enable Redis there too and set `SYNC_FREQUENCY` to sync configuration from the database periodically.
+
+See [here](#环境变量) for details on how to use the environment variables.
+
+### Deploying with the BaoTa panel
+
+See [#175](https://github.com/songquanpeng/one-api/issues/175).
+
+If you get a blank page after deployment, see [#97](https://github.com/songquanpeng/one-api/issues/97).
+
+### Deploying third-party services to use with One API
+> PRs adding more examples are welcome.
+
+#### ChatGPT Next Web
+Project homepage: https://github.com/Yidadaa/ChatGPT-Next-Web
+
+```bash
+docker run --name chat-next-web -d -p 3001:3000 yidadaa/chatgpt-next-web
+```
+
+Remember to change the port number, then set the API endpoint (for example: https://openai.justsong.cn/ ) and the API Key on the page.
+
+#### ChatGPT Web
+Project homepage: https://github.com/Chanzhaoyu/chatgpt-web
+
+```bash
+docker run --name chatgpt-web -d -p 3002:3002 -e OPENAI_API_BASE_URL=https://openai.justsong.cn -e OPENAI_API_KEY=sk-xxx chenzhaoyu94/chatgpt-web
+```
+
+Remember to change the port number, `OPENAI_API_BASE_URL`, and `OPENAI_API_KEY`.
+
+#### QChatGPT - QQ bot
+Project homepage: https://github.com/RockChinQ/QChatGPT
+
+After deploying it according to its documentation, in `config.py` set `reverse_proxy` under `openai_config` to the One API backend address, set `api_key` to a key generated by One API, and set the `model` parameter under `completion_api_params` to a model name supported by One API.
+
+You can install the [Switcher plugin](https://github.com/RockChinQ/Switcher) to switch the model in use at runtime.
+
+### Deploying to third-party platforms
+<details>
+<summary><strong>Deploy to Sealos </strong></summary>
+<div>
+
+> Sealos servers are located overseas, so no extra network handling is needed; it supports high concurrency & dynamic scaling.
+
+Click the button below for one-click deployment (if you get a 404 after deploying, wait 3~5 minutes):
+
+[](https://cloud.sealos.io/?openapp=system-fastdeploy?templateName=one-api)
+
+</div>
+</details>
+
+<details>
+<summary><strong>Deploy to Zeabur</strong></summary>
+<div>
+
+> Zeabur servers are located overseas, which takes care of network issues automatically, and the free tier is enough for personal use
+
+[](https://zeabur.com/templates/7Q0KO3)
+
+1. First, fork the repository.
+2. Go to [Zeabur](https://zeabur.com?referralCode=songquanpeng), log in, and open the console.
+3. Create a new Project. Under Service -> Add Service choose Marketplace, select MySQL, and note the connection parameters (username, password, address, port).
+4. Copy the connection parameters and run ```create database `one-api` ``` to create the database.
+5. Then under Service -> Add Service, choose Git (authorization is required on first use) and select your forked repository.
+6. Deploy starts automatically; cancel it first. Go to Variable below, add a `PORT` with the value `3000`, then add a `SQL_DSN` with the value `<username>:<password>@tcp(<addr>:<port>)/one-api`, and save. Note that if `SQL_DSN` is not set, data cannot be persisted and will be lost after redeployment.
+7. Choose Redeploy.
+8. Go to Domains below and choose a suitable domain prefix, such as "my-one-api"; the final domain will be "my-one-api.zeabur.app". You can also CNAME your own domain.
+9. Wait for the deployment to finish, then click the generated domain to open One API.
+
+</div>
+</details>
+
+<details>
+<summary><strong>Deploy to Render</strong></summary>
+<div>
+
+> Render offers a free tier; adding a card raises the quota further
+
+Render can deploy Docker images directly without forking the repository: https://dashboard.render.com
+
+</div>
+</details>
+

|

## 配置

|

||||||

|

系统本身开箱即用。

|

||||||

|

|

||||||

|

你可以通过设置环境变量或者命令行参数进行配置。

|

||||||

|

|

||||||

|

等到系统启动后,使用 `root` 用户登录系统并做进一步的配置。

|

||||||

|

|

||||||

|

**Note**:如果你不知道某个配置项的含义,可以临时删掉值以看到进一步的提示文字。

|

||||||

|

|

||||||

|

## 使用方法

|

||||||

|

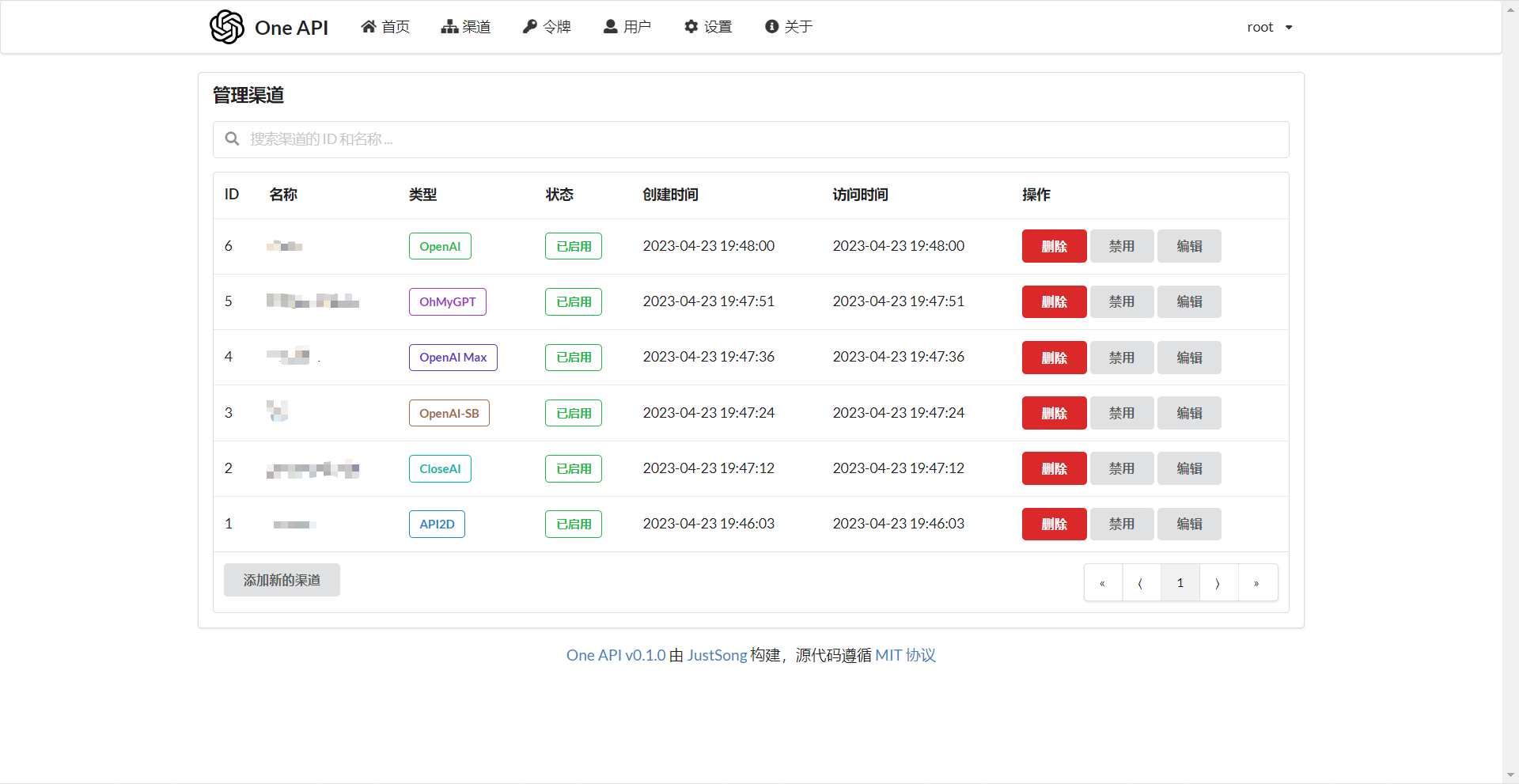

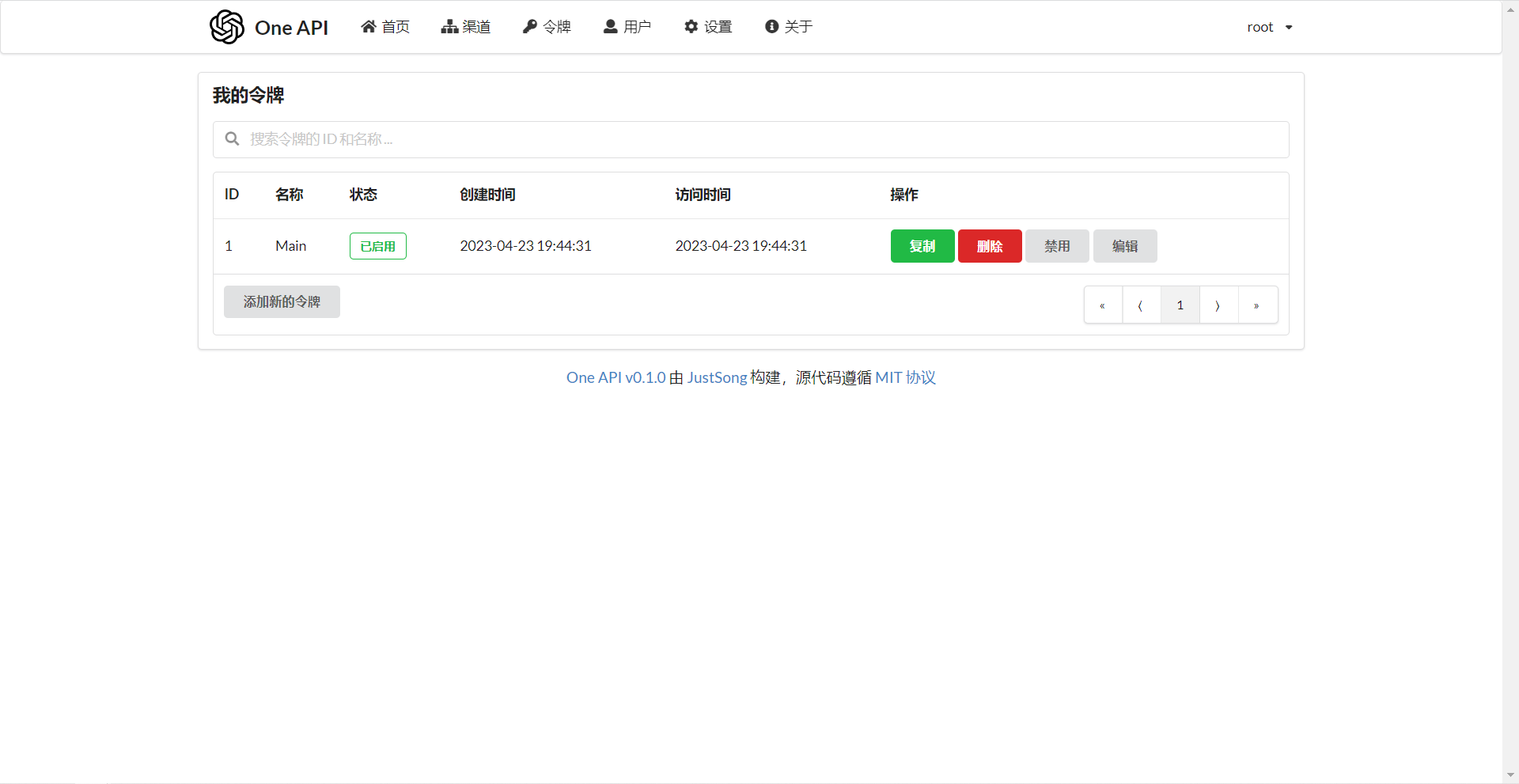

在`渠道`页面中添加你的 API Key,之后在`令牌`页面中新增访问令牌。

|

||||||

|

|

||||||

|

之后就可以使用你的令牌访问 One API 了,使用方式与 [OpenAI API](https://platform.openai.com/docs/api-reference/introduction) 一致。

|

||||||

|

|

||||||

|

你需要在各种用到 OpenAI API 的地方设置 API Base 为你的 One API 的部署地址,例如:`https://openai.justsong.cn`,API Key 则为你在 One API 中生成的令牌。

|

||||||

|

|

||||||

|

注意,具体的 API Base 的格式取决于你所使用的客户端。

|

||||||

|

|

||||||

|

例如对于 OpenAI 的官方库:

|

||||||

|

```bash

|

||||||

|

OPENAI_API_KEY="sk-xxxxxx"

|

||||||

|

OPENAI_API_BASE="https://<HOST>:<PORT>/v1"

|

||||||

|

```

|

||||||

|

|

||||||

|

```mermaid

|

||||||

|

graph LR

|

||||||

|

A(用户)

|

||||||

|

A --->|使用 One API 分发的 key 进行请求| B(One API)

|

||||||

|

B -->|中继请求| C(OpenAI)

|

||||||

|

B -->|中继请求| D(Azure)

|

||||||

|

B -->|中继请求| E(其他 OpenAI API 格式下游渠道)

|

||||||

|

B -->|中继并修改请求体和返回体| F(非 OpenAI API 格式下游渠道)

|

||||||

|

```

|

||||||

|

|

||||||

|

可以通过在令牌后面添加渠道 ID 的方式指定使用哪一个渠道处理本次请求,例如:`Authorization: Bearer ONE_API_KEY-CHANNEL_ID`。

|

||||||

|

注意,需要是管理员用户创建的令牌才能指定渠道 ID。

|

||||||

|

|

||||||

|

不加的话将会使用负载均衡的方式使用多个渠道。

|

||||||

|

|

||||||

|

### 环境变量

|

||||||

|

1. `REDIS_CONN_STRING`:设置之后将使用 Redis 作为缓存使用。

|

||||||

|

+ 例子:`REDIS_CONN_STRING=redis://default:redispw@localhost:49153`

|

||||||

|

+ 如果数据库访问延迟很低,没有必要启用 Redis,启用后反而会出现数据滞后的问题。

|

||||||

|

2. `SESSION_SECRET`:设置之后将使用固定的会话密钥,这样系统重新启动后已登录用户的 cookie 将依旧有效。

|

||||||

|

+ 例子:`SESSION_SECRET=random_string`

|

||||||

|

3. `SQL_DSN`:设置之后将使用指定数据库而非 SQLite,请使用 MySQL 或 PostgreSQL。

|

||||||

|

+ 例子:

|

||||||

|

+ MySQL:`SQL_DSN=root:123456@tcp(localhost:3306)/oneapi`

|

||||||

|

+ PostgreSQL:`SQL_DSN=postgres://postgres:123456@localhost:5432/oneapi`(适配中,欢迎反馈)

|

||||||

|

+ 注意需要提前建立数据库 `oneapi`,无需手动建表,程序将自动建表。

|

||||||

|

+ 如果使用本地数据库:部署命令可添加 `--network="host"` 以使得容器内的程序可以访问到宿主机上的 MySQL。

|

||||||

|

+ 如果使用云数据库:如果云服务器需要验证身份,需要在连接参数中添加 `?tls=skip-verify`。

|

||||||

|

+ 请根据你的数据库配置修改下列参数(或者保持默认值):

|

||||||

|

+ `SQL_MAX_IDLE_CONNS`:最大空闲连接数,默认为 `100`。

|

||||||

|

+ `SQL_MAX_OPEN_CONNS`:最大打开连接数,默认为 `1000`。

|

||||||

|

+ 如果报错 `Error 1040: Too many connections`,请适当减小该值。

|

||||||

|

+ `SQL_CONN_MAX_LIFETIME`:连接的最大生命周期,默认为 `60`,单位分钟。

|

||||||

|

4. `FRONTEND_BASE_URL`:设置之后将重定向页面请求到指定的地址,仅限从服务器设置。

|

||||||

|

+ 例子:`FRONTEND_BASE_URL=https://openai.justsong.cn`

|

||||||

|

5. `MEMORY_CACHE_ENABLED`:启用内存缓存,会导致用户额度的更新存在一定的延迟,可选值为 `true` 和 `false`,未设置则默认为 `false`。

|

||||||

|

+ 例子:`MEMORY_CACHE_ENABLED=true`

|

||||||

|

6. `SYNC_FREQUENCY`:在启用缓存的情况下与数据库同步配置的频率,单位为秒,默认为 `600` 秒。

|

||||||

|

+ 例子:`SYNC_FREQUENCY=60`

|

||||||

|

7. `NODE_TYPE`:设置之后将指定节点类型,可选值为 `master` 和 `slave`,未设置则默认为 `master`。

|

||||||

|

+ 例子:`NODE_TYPE=slave`

|

||||||

|

8. `CHANNEL_UPDATE_FREQUENCY`:设置之后将定期更新渠道余额,单位为分钟,未设置则不进行更新。

|

||||||

|

+ 例子:`CHANNEL_UPDATE_FREQUENCY=1440`

|

||||||

|

9. `CHANNEL_TEST_FREQUENCY`:设置之后将定期检查渠道,单位为分钟,未设置则不进行检查。

|

||||||

|

+ 例子:`CHANNEL_TEST_FREQUENCY=1440`

|

||||||

|

10. `POLLING_INTERVAL`:批量更新渠道余额以及测试可用性时的请求间隔,单位为秒,默认无间隔。

|

||||||

|

+ 例子:`POLLING_INTERVAL=5`

|

||||||

|

11. `BATCH_UPDATE_ENABLED`:启用数据库批量更新聚合,会导致用户额度的更新存在一定的延迟可选值为 `true` 和 `false`,未设置则默认为 `false`。

|

||||||

|

+ 例子:`BATCH_UPDATE_ENABLED=true`

|

||||||

|

+ 如果你遇到了数据库连接数过多的问题,可以尝试启用该选项。

|

||||||

|

12. `BATCH_UPDATE_INTERVAL=5`:批量更新聚合的时间间隔,单位为秒,默认为 `5`。

|

||||||

|

+ 例子:`BATCH_UPDATE_INTERVAL=5`

|

||||||

|

13. 请求频率限制:

|

||||||

|

+ `GLOBAL_API_RATE_LIMIT`:全局 API 速率限制(除中继请求外),单 ip 三分钟内的最大请求数,默认为 `180`。

|

||||||

|

+ `GLOBAL_WEB_RATE_LIMIT`:全局 Web 速率限制,单 ip 三分钟内的最大请求数,默认为 `60`。

|

||||||

|

14. 编码器缓存设置:

|

||||||

|

+ `TIKTOKEN_CACHE_DIR`:默认程序启动时会联网下载一些通用的词元的编码,如:`gpt-3.5-turbo`,在一些网络环境不稳定,或者离线情况,可能会导致启动有问题,可以配置此目录缓存数据,可迁移到离线环境。

|

||||||

|

+ `DATA_GYM_CACHE_DIR`:目前该配置作用与 `TIKTOKEN_CACHE_DIR` 一致,但是优先级没有它高。

|

||||||

|

15. `RELAY_TIMEOUT`:中继超时设置,单位为秒,默认不设置超时时间。

|

||||||

|

|

||||||

|

### 命令行参数

|

||||||

|

1. `--port <port_number>`: 指定服务器监听的端口号,默认为 `3000`。

|

||||||

|

+ 例子:`--port 3000`

|

||||||

|

2. `--log-dir <log_dir>`: 指定日志文件夹,如果没有设置,默认保存至工作目录的 `logs` 文件夹下。

|

||||||

|

+ 例子:`--log-dir ./logs`

|

||||||

|

3. `--version`: 打印系统版本号并退出。

|

||||||

|

4. `--help`: 查看命令的使用帮助和参数说明。

|

||||||

|

|

||||||

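+## Demo
+### Live demo
+Note that this demo site does not provide public service:
+https://openai.justsong.cn
+
+### Screenshots
+
+
+
+## FAQ
+1. What is quota? How is it calculated? Is One API's quota calculation wrong?
+   + Quota = group ratio * model ratio * (prompt tokens + completion tokens * completion ratio)
+   + The completion ratio is fixed at 1.33 for GPT3.5 and 2 for GPT4, consistent with the official pricing.
+   + In non-stream mode the official API returns the total tokens consumed, but note that prompt and completion tokens are charged at different ratios.
+   + Note that One API's default ratios are the official ratios and have already been adjusted.
+2. Why am I told the quota is insufficient when my account quota is enough?
+   + Check whether your token quota is sufficient; it is separate from the account quota.
+   + Token quota only lets users cap their maximum usage, and users can set it freely.
+3. Message saying no channel is available?
+   + Check your user group and channel group settings.
+   + Also check the channel's model settings.
+4. Channel test error: `invalid character '<' looking for beginning of value`
+   + This happens because the response is not valid JSON but an HTML page.
+   + Most likely the IP of your deployment or your proxy node has been blocked by CloudFlare.
+5. ChatGPT Next Web error: `Failed to fetch`
+   + Do not set `BASE_URL` when deploying.
+   + Check that your API endpoint and API Key are filled in correctly.
+   + Check whether HTTPS is enabled; browsers block HTTP requests made from an HTTPS domain.
+6. Error: `当前分组负载已饱和,请稍后再试` ("the current group is overloaded, please try again later")
+   + The upstream channel returned 429.
+7. Will my data be lost after upgrading?
+   + Not if you use MySQL.
+   + If you use SQLite, you need to mount a volume as in the deployment command I provided to persist the one-api.db database file; otherwise data is lost when the container restarts.
+8. Do I need to change the database before upgrading?
+   + Generally not; the system adjusts automatically during initialization.
+   + If changes are needed, I will say so in the changelog and provide a script.
+
+## Related projects
+* [FastGPT](https://github.com/labring/FastGPT): a knowledge-base Q&A system built on large language models
+* [ChatGPT Next Web](https://github.com/Yidadaa/ChatGPT-Next-Web): get your own cross-platform ChatGPT app in one click
+
+## Notes
+
+This project is open-sourced under the MIT license. **On top of that**, the attribution and a link to this project must be kept at the bottom of the page. If you do not want to keep the attribution, you must obtain authorization first.
+
+The same applies to derivative projects based on this one.
+
+Under the MIT license, users bear the risks and responsibilities of using this project themselves; the developers of this open-source project are not liable.
+>>>>>>> origin/upstream/main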
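The quota formula quoted in the README FAQ (quota = group ratio * model ratio * (prompt tokens + completion tokens * completion ratio)) can be sketched in Go as follows. This is only an illustration of the arithmetic: the function name `Quota` and its parameter names are made up for this example and are not taken from the project's code, and no rounding is applied.

```go
package main

import "fmt"

// Quota applies the formula from the README FAQ:
//   quota = groupRatio * modelRatio * (promptTokens + completionTokens*completionRatio)
// Name and signature are hypothetical; the project may compute this differently.
func Quota(groupRatio, modelRatio, completionRatio float64, promptTokens, completionTokens int) float64 {
	// Completion tokens are weighted by the completion ratio before summing.
	weighted := float64(promptTokens) + float64(completionTokens)*completionRatio
	return groupRatio * modelRatio * weighted
}

func main() {
	// 100 prompt tokens plus 50 completion tokens at a completion ratio of 2.
	fmt.Println(Quota(1, 1, 2, 100, 50))
}
```

The point of the sketch is that prompt and completion tokens are not interchangeable: with a completion ratio of 2, each completion token costs as much as two prompt tokens.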

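The README's usage section describes selecting a channel by appending its ID to the token (`Authorization: Bearer ONE_API_KEY-CHANNEL_ID`). A minimal sketch of how such a header could be split is shown below. `SplitToken` is a hypothetical helper, not the project's parser, and it assumes the key carries an optional `sk-` prefix and otherwise contains no `-`.

```go
package main

import (
	"fmt"
	"strings"
)

// SplitToken separates "Bearer [sk-]KEY[-CHANNEL_ID]" into key and channel ID.
// Hypothetical helper: assumes KEY itself contains no "-" characters.
func SplitToken(authHeader string) (key string, channelID string) {
	cred := strings.TrimPrefix(authHeader, "Bearer ")
	cred = strings.TrimPrefix(cred, "sk-")
	// Everything after the first "-" is treated as the channel ID;
	// channelID stays empty when no "-" is present.
	key, channelID, _ = strings.Cut(cred, "-")
	return key, channelID
}

func main() {
	key, ch := SplitToken("Bearer sk-abc123-7")
	fmt.Println(key, ch)
}
```

An empty channel ID corresponds to the default behaviour the README describes, where requests are load-balanced across channels.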

@@ -78,6 +78,7 @@ var QuotaForInviter = 0
 var QuotaForInvitee = 0
 var ChannelDisableThreshold = 5.0
 var AutomaticDisableChannelEnabled = false
+var AutomaticEnableChannelEnabled = false
 var QuotaRemindThreshold = 1000
 var PreConsumedQuota = 500
 var ApproximateTokenEnabled = false

@@ -1,11 +1,13 @@
 package common
 
 import (
+	"crypto/rand"
 	"crypto/tls"
 	"encoding/base64"
 	"fmt"
 	"net/smtp"
 	"strings"
+	"time"
 )
 
 func SendEmail(subject string, receiver string, content string) error {
@@ -13,15 +15,32 @@ func SendEmail(subject string, receiver string, content string) error {
 		SMTPFrom = SMTPAccount
 	}
 	encodedSubject := fmt.Sprintf("=?UTF-8?B?%s?=", base64.StdEncoding.EncodeToString([]byte(subject)))
+
+	// Extract domain from SMTPFrom
+	parts := strings.Split(SMTPFrom, "@")
+	var domain string
+	if len(parts) > 1 {
+		domain = parts[1]
+	}
+	// Generate a unique Message-ID
+	buf := make([]byte, 16)
+	_, err := rand.Read(buf)
+	if err != nil {
+		return err
+	}
+	messageId := fmt.Sprintf("<%x@%s>", buf, domain)
+
 	mail := []byte(fmt.Sprintf("To: %s\r\n"+
 		"From: %s<%s>\r\n"+
 		"Subject: %s\r\n"+
+		"Message-ID: %s\r\n"+ // add Message-ID header to avoid being treated as spam, RFC 5322
+		"Date: %s\r\n"+
 		"Content-Type: text/html; charset=UTF-8\r\n\r\n%s\r\n",
-		receiver, SystemName, SMTPFrom, encodedSubject, content))
+		receiver, SystemName, SMTPFrom, encodedSubject, messageId, time.Now().Format(time.RFC1123Z), content))
 	auth := smtp.PlainAuth("", SMTPAccount, SMTPToken, SMTPServer)
 	addr := fmt.Sprintf("%s:%d", SMTPServer, SMTPPort)
 	to := strings.Split(receiver, ";")
-	var err error
 	if SMTPPort == 465 {
 		tlsConfig := &tls.Config{
 			// InsecureSkipVerify: true,
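The Message-ID construction added in this hunk can be tried in isolation. The sketch below follows the same steps (take the domain from the sender address, draw 16 random bytes, hex-format them), but wraps them in a standalone function; `NewMessageID` and the example address are illustrative names, not part of the commit.

```go
package main

import (
	"crypto/rand"
	"fmt"
	"strings"
)

// NewMessageID builds "<hex(16 random bytes)@domain>" from a sender address,
// following the steps shown in the hunk. The function name is hypothetical.
func NewMessageID(from string) (string, error) {
	// Take the part after the first "@" as the domain, if there is one.
	parts := strings.Split(from, "@")
	var domain string
	if len(parts) > 1 {
		domain = parts[1]
	}
	// 16 bytes from the OS CSPRNG make collisions practically impossible.
	buf := make([]byte, 16)
	if _, err := rand.Read(buf); err != nil {
		return "", err
	}
	return fmt.Sprintf("<%x@%s>", buf, domain), nil
}

func main() {
	id, err := NewMessageID("noreply@example.com")
	if err != nil {
		panic(err)
	}
	fmt.Println(id) // random hex part differs on every run
}
```

Note that a sender address without an `@` yields an ID with an empty domain part, which is also what the code in the hunk would produce.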

@@ -5,6 +5,7 @@ import (
 	"encoding/json"
 	"github.com/gin-gonic/gin"
 	"io"
+	"strings"
 )
 
 func UnmarshalBodyReusable(c *gin.Context, v any) error {
@@ -16,7 +17,13 @@ func UnmarshalBodyReusable(c *gin.Context, v any) error {
 	if err != nil {
 		return err
 	}
-	err = json.Unmarshal(requestBody, &v)
+	contentType := c.Request.Header.Get("Content-Type")
+	if strings.HasPrefix(contentType, "application/json") {
+		err = json.Unmarshal(requestBody, &v)
+	} else {
+		// skip for now
+		// TODO: someday non json request have variant model, we will need to implementation this
+	}
 	if err != nil {
 		return err
 	}
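The guard introduced in this hunk only unmarshals the body when the `Content-Type` header starts with `application/json`. A small standalone sketch of the same idea, without gin, is given below; `DecodeIfJSON` and the `request` type are hypothetical names used only for this example.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// DecodeIfJSON unmarshals body into v only when contentType announces JSON.
// Other content types (for example multipart uploads) are skipped, and the
// returned bool reports whether decoding was attempted. Hypothetical helper.
func DecodeIfJSON(contentType string, body []byte, v any) (bool, error) {
	// HasPrefix tolerates parameters such as "; charset=utf-8".
	if !strings.HasPrefix(contentType, "application/json") {
		return false, nil
	}
	return true, json.Unmarshal(body, v)
}

// request is a stand-in for whatever struct the caller wants to fill.
type request struct {
	Model string `json:"model"`
}

func main() {
	var req request
	ok, err := DecodeIfJSON("application/json; charset=utf-8", []byte(`{"model":"gpt-4"}`), &req)
	fmt.Println(ok, err, req.Model)
}
```

A prefix match rather than an exact comparison matters here because clients commonly append a charset parameter to the header.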

47

common/image/image.go

Normal file


@@ -0,0 +1,47 @@
+package image
+
+import (
+	"image"
+	_ "image/gif"
+	_ "image/jpeg"
+	_ "image/png"
+	"net/http"
+	"regexp"
+	"strings"
+
+	_ "golang.org/x/image/webp"
+)
+
+func GetImageSizeFromUrl(url string) (width int, height int, err error) {
+	resp, err := http.Get(url)
+	if err != nil {
+		return
+	}
+	defer resp.Body.Close()
+	img, _, err := image.DecodeConfig(resp.Body)
+	if err != nil {
+		return
+	}
+	return img.Width, img.Height, nil
+}
+
+var (
+	reg = regexp.MustCompile(`data:image/([^;]+);base64,`)
+)
+
+func GetImageSizeFromBase64(encoded string) (width int, height int, err error) {
+	encoded = strings.TrimPrefix(encoded, "data:image/png;base64,")
+	base64 := strings.NewReader(reg.ReplaceAllString(encoded, ""))
+	img, _, err := image.DecodeConfig(base64)
+	if err != nil {
+		return
+	}
+	return img.Width, img.Height, nil
+}
+
+func GetImageSize(image string) (width int, height int, err error) {
+	if strings.HasPrefix(image, "data:image/") {
+		return GetImageSizeFromBase64(image)
+	}
+	return GetImageSizeFromUrl(image)
+}
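One detail of `GetImageSizeFromBase64` as shown in this hunk: the reader passed to `image.DecodeConfig` wraps the base64 text itself, with no decoding step, so the decoder would see ASCII rather than image bytes. The sketch below is a possible variant, not the commit's code, that streams the payload through `base64.NewDecoder` (the same call the accompanying test file uses) before reading the image header. `SizeFromDataURL` and `tinyPNG` are names invented for this example.

```go
package main

import (
	"bytes"
	"encoding/base64"
	"fmt"
	"image"
	"image/png"
	"regexp"
	"strings"
)

// dataURLPrefix matches the "data:image/<type>;base64," prefix of a data URL.
var dataURLPrefix = regexp.MustCompile(`^data:image/([^;]+);base64,`)

// SizeFromDataURL strips the data-URL prefix, base64-decodes the payload as a
// stream, and reads only the image header. Hypothetical variant for illustration.
func SizeFromDataURL(encoded string) (width, height int, err error) {
	payload := dataURLPrefix.ReplaceAllString(encoded, "")
	decoder := base64.NewDecoder(base64.StdEncoding, strings.NewReader(payload))
	cfg, _, err := image.DecodeConfig(decoder)
	if err != nil {
		return 0, 0, err
	}
	return cfg.Width, cfg.Height, nil
}

// tinyPNG builds a blank w x h PNG in memory and returns it as a data URL.
func tinyPNG(w, h int) string {
	var buf bytes.Buffer
	if err := png.Encode(&buf, image.NewRGBA(image.Rect(0, 0, w, h))); err != nil {
		panic(err)
	}
	return "data:image/png;base64," + base64.StdEncoding.EncodeToString(buf.Bytes())
}

func main() {
	w, h, err := SizeFromDataURL(tinyPNG(3, 2))
	fmt.Println(w, h, err)
}
```

Decoding through a streaming reader keeps the benefit of `DecodeConfig`, which only needs the first part of the image to report its dimensions. Only PNG is registered in this sketch; other formats would need their decoder packages imported as in the hunk.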

154

common/image/image_test.go

Normal file


@@ -0,0 +1,154 @@
+package image_test
+
+import (
+	"encoding/base64"
+	"image"
+	_ "image/gif"
+	_ "image/jpeg"
+	_ "image/png"
+	"io"
+	"net/http"
+	"strconv"
+	"strings"
+	"testing"
+
+	img "one-api/common/image"
+
+	"github.com/stretchr/testify/assert"
+	_ "golang.org/x/image/webp"
+)
+
+type CountingReader struct {
+	reader    io.Reader
+	BytesRead int
+}
+
+func (r *CountingReader) Read(p []byte) (n int, err error) {
+	n, err = r.reader.Read(p)
+	r.BytesRead += n
+	return n, err
+}
+
+var (
+	cases = []struct {
+		url    string
+		format string
+		width  int
+		height int
+	}{
+		{"https://upload.wikimedia.org/wikipedia/commons/thumb/d/dd/Gfp-wisconsin-madison-the-nature-boardwalk.jpg/2560px-Gfp-wisconsin-madison-the-nature-boardwalk.jpg", "jpeg", 2560, 1669},
+		{"https://upload.wikimedia.org/wikipedia/commons/9/97/Basshunter_live_performances.png", "png", 4500, 2592},
+		{"https://upload.wikimedia.org/wikipedia/commons/c/c6/TO_THE_ONE_SOMETHINGNESS.webp", "webp", 984, 985},
+		{"https://upload.wikimedia.org/wikipedia/commons/d/d0/01_Das_Sandberg-Modell.gif", "gif", 1917, 1533},
+		{"https://upload.wikimedia.org/wikipedia/commons/6/62/102Cervus.jpg", "jpeg", 270, 230},
+	}
+)
+
+func TestDecode(t *testing.T) {
+	// Bytes read: varies sometimes
+	// jpeg: 1063892
+	// png: 294462
+	// webp: 99529
+	// gif: 956153
+	// jpeg#01: 32805
+	for _, c := range cases {
+		t.Run("Decode:"+c.format, func(t *testing.T) {
+			resp, err := http.Get(c.url)
+			assert.NoError(t, err)
+			defer resp.Body.Close()
+			reader := &CountingReader{reader: resp.Body}
+			img, format, err := image.Decode(reader)
+			assert.NoError(t, err)
+			size := img.Bounds().Size()
+			assert.Equal(t, c.format, format)
+			assert.Equal(t, c.width, size.X)
+			assert.Equal(t, c.height, size.Y)
+			t.Logf("Bytes read: %d", reader.BytesRead)
+		})
+	}
+
+	// Bytes read:
+	// jpeg: 4096
+	// png: 4096
+	// webp: 4096
+	// gif: 4096
+	// jpeg#01: 4096
+	for _, c := range cases {
+		t.Run("DecodeConfig:"+c.format, func(t *testing.T) {
+			resp, err := http.Get(c.url)
+			assert.NoError(t, err)
+			defer resp.Body.Close()
+			reader := &CountingReader{reader: resp.Body}
+			config, format, err := image.DecodeConfig(reader)
+			assert.NoError(t, err)
+			assert.Equal(t, c.format, format)
+			assert.Equal(t, c.width, config.Width)
+			assert.Equal(t, c.height, config.Height)
+			t.Logf("Bytes read: %d", reader.BytesRead)
+		})
+	}
+}
+
+func TestBase64(t *testing.T) {
+	// Bytes read:
+	// jpeg: 1063892
+	// png: 294462
+	// webp: 99072
+	// gif: 953856
+	// jpeg#01: 32805
+	for _, c := range cases {
+		t.Run("Decode:"+c.format, func(t *testing.T) {
+			resp, err := http.Get(c.url)
+			assert.NoError(t, err)
+			defer resp.Body.Close()
+			data, err := io.ReadAll(resp.Body)
+			assert.NoError(t, err)
+			encoded := base64.StdEncoding.EncodeToString(data)
+			body := base64.NewDecoder(base64.StdEncoding, strings.NewReader(encoded))
+			reader := &CountingReader{reader: body}
+			img, format, err := image.Decode(reader)
+			assert.NoError(t, err)
+			size := img.Bounds().Size()
+			assert.Equal(t, c.format, format)
+			assert.Equal(t, c.width, size.X)
+			assert.Equal(t, c.height, size.Y)
+			t.Logf("Bytes read: %d", reader.BytesRead)
+		})
+	}
+
+	// Bytes read:
+	// jpeg: 1536
+	// png: 768
+	// webp: 768
+	// gif: 1536
+	// jpeg#01: 3840
+	for _, c := range cases {
+		t.Run("DecodeConfig:"+c.format, func(t *testing.T) {
+			resp, err := http.Get(c.url)
+			assert.NoError(t, err)
+			defer resp.Body.Close()
+			data, err := io.ReadAll(resp.Body)
+			assert.NoError(t, err)
+			encoded := base64.StdEncoding.EncodeToString(data)
+			body := base64.NewDecoder(base64.StdEncoding, strings.NewReader(encoded))
+			reader := &CountingReader{reader: body}
+			config, format, err := image.DecodeConfig(reader)
+			assert.NoError(t, err)
+			assert.Equal(t, c.format, format)
+			assert.Equal(t, c.width, config.Width)
+			assert.Equal(t, c.height, config.Height)
+			t.Logf("Bytes read: %d", reader.BytesRead)
+		})
+	}
+}
+
+func TestGetImageSize(t *testing.T) {
+	for i, c := range cases {
+		t.Run("Decode:"+strconv.Itoa(i), func(t *testing.T) {

|

||||||

|

width, height, err := img.GetImageSize(c.url)

|

||||||

|

assert.NoError(t, err)

|

||||||

|

assert.Equal(t, c.width, width)

|

||||||

|

assert.Equal(t, c.height, height)

|

||||||

|

})

|

||||||

|

}

|

||||||

|

}

|

||||||
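The byte counts logged by the tests above suggest that `image.DecodeConfig` stops after the header while `image.Decode` consumes the whole file. A minimal sketch of that measurement follows. It assumes a `CountingReader` shaped like the one the tests use (its definition is not shown in this hunk) and replaces the remote URLs with an in-memory PNG; `samplePNG`, `configBytes`, and `decodeBytes` are hypothetical helper names.

```go
package main

import (
	"bytes"
	"fmt"
	"image"
	"image/color"
	"image/png"
	"io"
)

// CountingReader wraps an io.Reader and tallies the bytes pulled through it.
// Assumption: this mirrors the type used by the tests; its source is not in the diff.
type CountingReader struct {
	reader    io.Reader
	BytesRead int
}

func (r *CountingReader) Read(p []byte) (int, error) {
	n, err := r.reader.Read(p)
	r.BytesRead += n
	return n, err
}

// samplePNG builds an in-memory PNG of pseudo-random opaque pixels,
// so the encoded file stays large instead of compressing away.
func samplePNG(width, height int) []byte {
	img := image.NewRGBA(image.Rect(0, 0, width, height))
	seed := uint32(1)
	next := func() uint8 {
		seed = seed*1664525 + 1013904223 // simple LCG, only used as noise
		return uint8(seed >> 24)
	}
	for y := 0; y < height; y++ {
		for x := 0; x < width; x++ {
			img.Set(x, y, color.RGBA{R: next(), G: next(), B: next(), A: 255})
		}
	}
	var buf bytes.Buffer
	if err := png.Encode(&buf, img); err != nil {
		panic(err)
	}
	return buf.Bytes()
}

// configBytes reads only the image header and reports how many bytes that took.
func configBytes(data []byte) (width, height, bytesRead int, err error) {
	r := &CountingReader{reader: bytes.NewReader(data)}
	cfg, _, err := image.DecodeConfig(r)
	if err != nil {
		return 0, 0, r.BytesRead, err
	}
	return cfg.Width, cfg.Height, r.BytesRead, nil
}

// decodeBytes decodes the full image and reports how many bytes that took.
func decodeBytes(data []byte) (width, height, bytesRead int, err error) {
	r := &CountingReader{reader: bytes.NewReader(data)}
	img, _, err := image.Decode(r)
	if err != nil {
		return 0, 0, r.BytesRead, err
	}
	size := img.Bounds().Size()
	return size.X, size.Y, r.BytesRead, nil
}

func main() {
	data := samplePNG(64, 64)
	_, _, cfgN, _ := configBytes(data)
	_, _, decN, _ := decodeBytes(data)
	fmt.Printf("file=%d bytes, DecodeConfig read=%d, Decode read=%d\n", len(data), cfgN, decN)
}
```

Both calls return the same dimensions, so when only width and height are needed the header-only path avoids downloading most of the image.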

@@ -6,6 +6,29 @@ import (

|

|||||||

"time"

|

"time"

|

||||||

)

|

)

|

||||||

|

|

||||||

|

var DalleSizeRatios = map[string]map[string]float64{

|

||||||

|

"dall-e-2": {

|

||||||

|

"256x256": 1,

|

||||||

|

"512x512": 1.125,

|

||||||

|

"1024x1024": 1.25,

|

||||||

|

},

|

||||||

|

"dall-e-3": {

|

||||||

|

"1024x1024": 1,

|

||||||

|

"1024x1792": 2,

|

||||||

|

"1792x1024": 2,

|

||||||

|

},

|

||||||

|

}

|

||||||

|

|

||||||

|

var DalleGenerationImageAmounts = map[string][2]int{

|

||||||

|

"dall-e-2": {1, 10},

|

||||||

|

"dall-e-3": {1, 1}, // OpenAI allows n=1 currently.

|

||||||

|

}

|

||||||

|

|

||||||

|

var DalleImagePromptLengthLimitations = map[string]int{

|

||||||

|

"dall-e-2": 1000,

|

||||||

|

"dall-e-3": 4000,

|

||||||

|

}

|

||||||

|

|

||||||

// ModelRatio

|

// ModelRatio

|

||||||

// https://platform.openai.com/docs/models/model-endpoint-compatibility

|

// https://platform.openai.com/docs/models/model-endpoint-compatibility

|

||||||

// https://cloud.baidu.com/doc/WENXINWORKSHOP/s/Blfmc9dlf

|

// https://cloud.baidu.com/doc/WENXINWORKSHOP/s/Blfmc9dlf

|

||||||

@@ -37,6 +60,10 @@ var ModelRatio = map[string]float64{

|

|||||||

"text-davinci-edit-001": 10,

|

"text-davinci-edit-001": 10,

|

||||||

"code-davinci-edit-001": 10,

|

"code-davinci-edit-001": 10,

|

||||||

"whisper-1": 15, // $0.006 / minute -> $0.006 / 150 words -> $0.006 / 200 tokens -> $0.03 / 1k tokens

|

"whisper-1": 15, // $0.006 / minute -> $0.006 / 150 words -> $0.006 / 200 tokens -> $0.03 / 1k tokens

|

||||||

|

"tts-1": 7.5, // $0.015 / 1K characters

|

||||||

|

"tts-1-1106": 7.5,

|

||||||

|

"tts-1-hd": 15, // $0.030 / 1K characters

|

||||||

|

"tts-1-hd-1106": 15,

|

||||||

"davinci": 10,

|

"davinci": 10,

|

||||||

"curie": 10,

|

"curie": 10,

|

||||||

"babbage": 10,

|

"babbage": 10,

|

||||||

@@ -45,9 +72,12 @@ var ModelRatio = map[string]float64{

|

|||||||

"text-search-ada-doc-001": 10,

|

"text-search-ada-doc-001": 10,

|

||||||

"text-moderation-stable": 0.1,

|

"text-moderation-stable": 0.1,

|

||||||

"text-moderation-latest": 0.1,

|

"text-moderation-latest": 0.1,

|

||||||

"dall-e": 8,

|

"dall-e-2": 8, // $0.016 - $0.020 / image

|

||||||

|

"dall-e-3": 20, // $0.040 - $0.120 / image

|

||||||

"claude-instant-1": 0.815, // $1.63 / 1M tokens

|

"claude-instant-1": 0.815, // $1.63 / 1M tokens

|

||||||

"claude-2": 5.51, // $11.02 / 1M tokens

|

"claude-2": 5.51, // $11.02 / 1M tokens

|

||||||

|

"claude-2.0": 5.51, // $11.02 / 1M tokens

|

||||||

|

"claude-2.1": 5.51, // $11.02 / 1M tokens

|

||||||

"ERNIE-Bot": 0.8572, // ¥0.012 / 1k tokens

|

"ERNIE-Bot": 0.8572, // ¥0.012 / 1k tokens

|

||||||

"ERNIE-Bot-turbo": 0.5715, // ¥0.008 / 1k tokens

|

"ERNIE-Bot-turbo": 0.5715, // ¥0.008 / 1k tokens

|

||||||

"ERNIE-Bot-4": 8.572, // ¥0.12 / 1k tokens

|

"ERNIE-Bot-4": 8.572, // ¥0.12 / 1k tokens

|

||||||
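The ratio comments in this file imply that a model ratio of 1 corresponds to $0.002 (for example, `whisper-1` at 15 is annotated as $0.03 / 1k tokens). The sketch below shows how the new DALL·E tables could combine with that unit to validate a request and estimate its price. The tables are copied from the hunk above; `imagePriceUSD`, its parameters, and the choice to count the prompt in runes are assumptions for illustration, not the project's billing code.

```go
package main

import (
	"errors"
	"fmt"
)

// Copied from the diff above.
var dalleSizeRatios = map[string]map[string]float64{
	"dall-e-2": {"256x256": 1, "512x512": 1.125, "1024x1024": 1.25},
	"dall-e-3": {"1024x1024": 1, "1024x1792": 2, "1792x1024": 2},
}

var dalleImageAmounts = map[string][2]int{
	"dall-e-2": {1, 10},
	"dall-e-3": {1, 1},
}

var dallePromptLimits = map[string]int{
	"dall-e-2": 1000,
	"dall-e-3": 4000,
}

// usdPerRatioUnit is inferred from the ratio comments (15 -> $0.03 / 1k tokens).
const usdPerRatioUnit = 0.002

// imagePriceUSD is a hypothetical helper: it validates size, count, and prompt
// length against the tables, then scales the model ratio by the size ratio.
func imagePriceUSD(model, size, prompt string, n int, modelRatio float64) (float64, error) {
	sizes, ok := dalleSizeRatios[model]
	if !ok {
		return 0, errors.New("unknown image model: " + model)
	}
	sizeRatio, ok := sizes[size]
	if !ok {
		return 0, errors.New("size not supported by " + model + ": " + size)
	}
	limits := dalleImageAmounts[model]
	if n < limits[0] || n > limits[1] {
		return 0, fmt.Errorf("n must be between %d and %d", limits[0], limits[1])
	}
	// Assumption: the limit is counted in characters (runes), not bytes.
	if len([]rune(prompt)) > dallePromptLimits[model] {
		return 0, errors.New("prompt is too long")
	}
	return modelRatio * sizeRatio * usdPerRatioUnit * float64(n), nil
}

func main() {
	price, err := imagePriceUSD("dall-e-2", "1024x1024", "a red bicycle", 1, 8)
	fmt.Println(price, err)
}
```

With the `dall-e-2` ratio of 8, the smallest and largest sizes work out to roughly $0.016 and $0.020 per image, which matches the range noted in the `ModelRatio` comment.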

|

|||||||

@@ -5,14 +5,15 @@ import (

|

|||||||

"encoding/json"

|

"encoding/json"

|

||||||

"errors"

|

"errors"

|

||||||

"fmt"

|

"fmt"

|

||||||

"github.com/gin-gonic/gin"

|

"io"

|

||||||

"net/http"

|

"net/http"

|

||||||

"one-api/common"

|

"one-api/common"

|

||||||

"one-api/model"

|

"one-api/model"

|

||||||

"strconv"

|

"strconv"

|

||||||

"strings"

|

|

||||||

"sync"

|

"sync"

|

||||||

"time"

|

"time"

|

||||||

|

|

||||||

|

"github.com/gin-gonic/gin"

|

||||||

)

|

)

|

||||||

|

|

||||||

func testChannel(channel *model.Channel, request ChatRequest) (err error, openaiErr *OpenAIError) {

|

func testChannel(channel *model.Channel, request ChatRequest) (err error, openaiErr *OpenAIError) {

|

||||||

@@ -43,16 +44,14 @@ func testChannel(channel *model.Channel, request ChatRequest) (err error, openai

|

|||||||

}

|

}

|

||||||

requestURL := common.ChannelBaseURLs[channel.Type]

|

requestURL := common.ChannelBaseURLs[channel.Type]

|

||||||

if channel.Type == common.ChannelTypeAzure {

|

if channel.Type == common.ChannelTypeAzure {

|

||||||

requestURL = fmt.Sprintf("%s/openai/deployments/%s/chat/completions?api-version=2023-03-15-preview", channel.GetBaseURL(), request.Model)

|

requestURL = getFullRequestURL(channel.GetBaseURL(), fmt.Sprintf("/openai/deployments/%s/chat/completions?api-version=2023-03-15-preview", request.Model), channel.Type)

|

||||||

} else {

|

} else {

|

||||||

if channel.GetBaseURL() != "" {

|

if baseURL := channel.GetBaseURL(); len(baseURL) > 0 {

|

||||||

requestURL = channel.GetBaseURL()

|

requestURL = baseURL

|

||||||

}

|

}

|

||||||

requestURL += "/v1/chat/completions"

|

|

||||||

}

|

|

||||||

// for Cloudflare AI gateway: https://github.com/songquanpeng/one-api/pull/639

|

|

||||||

requestURL = strings.Replace(requestURL, "/v1/v1", "/v1", 1)

|

|

||||||

|

|

||||||

|

requestURL = getFullRequestURL(requestURL, "/v1/chat/completions", channel.Type)

|

||||||

|

}

|

||||||

jsonData, err := json.Marshal(request)

|

jsonData, err := json.Marshal(request)

|

||||||

if err != nil {

|

if err != nil {

|

||||||

return err, nil

|

return err, nil

|

||||||
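This hunk replaces the inline `"/v1/chat/completions"` concatenation and the `/v1/v1` fix-up with calls to `getFullRequestURL`, whose body is not part of this diff. As a rough illustration of what such a helper has to handle, the sketch below joins a base URL and a path and collapses a duplicated `/v1` segment the way the removed lines did. `joinRequestURL` is a hypothetical name, and trimming a trailing slash is an added assumption; the real helper also takes the channel type and may behave differently.

```go
package main

import (
	"fmt"
	"strings"
)

// joinRequestURL is a hypothetical stand-in, not the project's getFullRequestURL.
// It concatenates base and path, then collapses one "/v1/v1" into "/v1",
// mirroring the strings.Replace call removed in this hunk.
func joinRequestURL(baseURL, path string) string {
	full := strings.TrimRight(baseURL, "/") + path // trailing-slash trim is an assumption
	return strings.Replace(full, "/v1/v1", "/v1", 1)
}

func main() {
	fmt.Println(joinRequestURL("https://api.openai.com", "/v1/chat/completions"))
	fmt.Println(joinRequestURL("https://gateway.example.com/v1", "/v1/chat/completions"))
}
```

Centralizing this in one function means the test path and the relay path build URLs the same way, instead of each repeating the gateway workaround.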

@@ -73,11 +72,18 @@ func testChannel(channel *model.Channel, request ChatRequest) (err error, openai

|

|||||||

}

|

}

|

||||||

defer resp.Body.Close()

|

defer resp.Body.Close()

|

||||||

var response TextResponse

|

var response TextResponse

|

||||||

err = json.NewDecoder(resp.Body).Decode(&response)

|

body, err := io.ReadAll(resp.Body)

|

||||||

if err != nil {

|

if err != nil {

|

||||||

return err, nil

|

return err, nil

|

||||||

}

|

}

|

||||||

|

err = json.Unmarshal(body, &response)

|

||||||

|

if err != nil {

|

||||||

|

return fmt.Errorf("Error: %s\nResp body: %s", err, body), nil

|

||||||

|

}

|

||||||

if response.Usage.CompletionTokens == 0 {

|

if response.Usage.CompletionTokens == 0 {

|

||||||

|

if response.Error.Message == "" {

|

||||||

|

response.Error.Message = "补全 tokens 非预期返回 0" // English: "completion tokens unexpectedly returned 0"

|

||||||

|

}

|

||||||

return errors.New(fmt.Sprintf("type %s, code %v, message %s", response.Error.Type, response.Error.Code, response.Error.Message)), &response.Error

|

return errors.New(fmt.Sprintf("type %s, code %v, message %s", response.Error.Type, response.Error.Code, response.Error.Message)), &response.Error

|

||||||

}

|

}

|

||||||

return nil, nil

|

return nil, nil

|

||||||

@@ -139,20 +145,32 @@ func TestChannel(c *gin.Context) {

|

|||||||

var testAllChannelsLock sync.Mutex

|

var testAllChannelsLock sync.Mutex

|

||||||

var testAllChannelsRunning bool = false

|

var testAllChannelsRunning bool = false

|

||||||

|

|

||||||

// disable & notify

|

func notifyRootUser(subject string, content string) {

|

||||||

func disableChannel(channelId int, channelName string, reason string) {

|

|

||||||

if common.RootUserEmail == "" {

|

if common.RootUserEmail == "" {

|

||||||

common.RootUserEmail = model.GetRootUserEmail()

|

common.RootUserEmail = model.GetRootUserEmail()

|

||||||

}

|

}

|

||||||

model.UpdateChannelStatusById(channelId, common.ChannelStatusAutoDisabled)

|

|

||||||

subject := fmt.Sprintf("通道「%s」(#%d)已被禁用", channelName, channelId) // English: channel "%s" (#%d) has been disabled

|

|

||||||

content := fmt.Sprintf("通道「%s」(#%d)已被禁用,原因:%s", channelName, channelId, reason) // English: channel "%s" (#%d) has been disabled, reason: %s

|

|

||||||

err := common.SendEmail(subject, common.RootUserEmail, content)

|

err := common.SendEmail(subject, common.RootUserEmail, content)

|

||||||

if err != nil {

|

if err != nil {

|

||||||

common.SysError(fmt.Sprintf("failed to send email: %s", err.Error()))

|

common.SysError(fmt.Sprintf("failed to send email: %s", err.Error()))

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

|

// disable & notify

|

||||||

|

func disableChannel(channelId int, channelName string, reason string) {

|

||||||

|

model.UpdateChannelStatusById(channelId, common.ChannelStatusAutoDisabled)

|

||||||

|

subject := fmt.Sprintf("通道「%s」(#%d)已被禁用", channelName, channelId) // English: channel "%s" (#%d) has been disabled

|

||||||

|

content := fmt.Sprintf("通道「%s」(#%d)已被禁用,原因:%s", channelName, channelId, reason) // English: channel "%s" (#%d) has been disabled, reason: %s

|

||||||

|

notifyRootUser(subject, content)

|

||||||

|

}

|

||||||

|

|

||||||

|

// enable & notify

|

||||||

|

func enableChannel(channelId int, channelName string) {

|

||||||

|

model.UpdateChannelStatusById(channelId, common.ChannelStatusEnabled)

|

||||||

|

subject := fmt.Sprintf("通道「%s」(#%d)已被启用", channelName, channelId) // English: channel "%s" (#%d) has been enabled

|

||||||

|

content := fmt.Sprintf("通道「%s」(#%d)已被启用", channelName, channelId) // English: channel "%s" (#%d) has been enabled

|

||||||

|

notifyRootUser(subject, content)

|

||||||

|

}

|

||||||

|

|

||||||

func testAllChannels(notify bool) error {

|

func testAllChannels(notify bool) error {

|

||||||

if common.RootUserEmail == "" {

|

if common.RootUserEmail == "" {

|

||||||

common.RootUserEmail = model.GetRootUserEmail()

|

common.RootUserEmail = model.GetRootUserEmail()

|

||||||

@@ -175,20 +193,21 @@ func testAllChannels(notify bool) error {

|

|||||||

}

|

}

|

||||||

go func() {

|

go func() {

|

||||||

for _, channel := range channels {

|

for _, channel := range channels {

|

||||||

if channel.Status != common.ChannelStatusEnabled {

|

isChannelEnabled := channel.Status == common.ChannelStatusEnabled

|

||||||

continue

|

|

||||||

}

|

|

||||||

tik := time.Now()

|

tik := time.Now()

|

||||||

err, openaiErr := testChannel(channel, *testRequest)

|

err, openaiErr := testChannel(channel, *testRequest)

|

||||||

tok := time.Now()

|

tok := time.Now()

|

||||||

milliseconds := tok.Sub(tik).Milliseconds()

|

milliseconds := tok.Sub(tik).Milliseconds()

|

||||||

if milliseconds > disableThreshold {

|

if isChannelEnabled && milliseconds > disableThreshold {

|

||||||

err = errors.New(fmt.Sprintf("响应时间 %.2fs 超过阈值 %.2fs", float64(milliseconds)/1000.0, float64(disableThreshold)/1000.0)) // English: response time %.2fs exceeds threshold %.2fs

|

err = errors.New(fmt.Sprintf("响应时间 %.2fs 超过阈值 %.2fs", float64(milliseconds)/1000.0, float64(disableThreshold)/1000.0)) // English: response time %.2fs exceeds threshold %.2fs

|

||||||

disableChannel(channel.Id, channel.Name, err.Error())

|

disableChannel(channel.Id, channel.Name, err.Error())

|

||||||

}

|

}

|

||||||

if shouldDisableChannel(openaiErr, -1) {

|

if isChannelEnabled && shouldDisableChannel(openaiErr, -1) {

|

||||||

disableChannel(channel.Id, channel.Name, err.Error())

|

disableChannel(channel.Id, channel.Name, err.Error())

|

||||||

}

|

}

|

||||||

|

if !isChannelEnabled && shouldEnableChannel(err, openaiErr) {

|

||||||

|

enableChannel(channel.Id, channel.Name)

|

||||||

|

}

|

||||||

channel.UpdateResponseTime(milliseconds)

|

channel.UpdateResponseTime(milliseconds)

|

||||||

time.Sleep(common.RequestInterval)

|

time.Sleep(common.RequestInterval)

|

||||||

}

|

}

|

||||||
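After this change the test loop no longer skips disabled channels: an enabled channel can be auto-disabled (slow response or a disable-worthy error), and a disabled channel can be re-enabled when the test passes. The decision can be summarized as a small pure function, sketched below. The `decide` function and its boolean inputs are hypothetical; in the diff the two booleans come from `shouldDisableChannel` and `shouldEnableChannel`, whose bodies are not shown here.

```go
package main

import "fmt"

type action int

const (
	keep action = iota
	disable
	enable
)

// decide condenses the loop body into one decision.
// disableWorthy stands for shouldDisableChannel(openaiErr, -1) and
// healthy stands for shouldEnableChannel(err, openaiErr); both are assumptions
// about how those results would be passed in.
func decide(isEnabled bool, elapsedMs, thresholdMs int64, disableWorthy, healthy bool) action {
	switch {
	case isEnabled && (elapsedMs > thresholdMs || disableWorthy):
		return disable
	case !isEnabled && healthy:
		return enable
	default:
		return keep
	}
}

func main() {
	fmt.Println(decide(true, 12000, 10000, false, false) == disable)
	fmt.Println(decide(false, 300, 10000, false, true) == enable)
}
```

One difference from the diff: the original runs the timeout check and the error check as separate `if` statements, so both can call `disableChannel` for the same channel, whereas this sketch returns a single action.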

|

|||||||

@@ -55,12 +55,21 @@ func init() {

|

|||||||

// https://platform.openai.com/docs/models/model-endpoint-compatibility

|

// https://platform.openai.com/docs/models/model-endpoint-compatibility

|

||||||

openAIModels = []OpenAIModels{

|

openAIModels = []OpenAIModels{

|

||||||

{

|

{

|

||||||

Id: "dall-e",

|

Id: "dall-e-2",

|

||||||

Object: "model",

|

Object: "model",

|

||||||

Created: 1677649963,

|

Created: 1677649963,

|

||||||

OwnedBy: "openai",

|

OwnedBy: "openai",

|

||||||

Permission: permission,

|

Permission: permission,

|

||||||

Root: "dall-e",

|

Root: "dall-e-2",

|

||||||

|

Parent: nil,

|

||||||

|

},

|

||||||

|

{

|

||||||

|

Id: "dall-e-3",

|

||||||

|

Object: "model",

|

||||||

|

Created: 1677649963,

|

||||||

|

OwnedBy: "openai",

|

||||||

|

Permission: permission,

|

||||||

|

Root: "dall-e-3",

|

||||||

Parent: nil,

|

Parent: nil,

|

||||||

},

|

},

|

||||||

{

|

{

|

||||||

@@ -72,6 +81,42 @@ func init() {

|

|||||||

Root: "whisper-1",

|

Root: "whisper-1",

|

||||||

Parent: nil,

|

Parent: nil,

|

||||||

},

|

},

|

||||||

|

{

|

||||||

|

Id: "tts-1",

|

||||||

|

Object: "model",

|

||||||

|

Created: 1677649963,

|

||||||

|

OwnedBy: "openai",

|

||||||

|

Permission: permission,

|

||||||

|

Root: "tts-1",

|

||||||

|

Parent: nil,

|

||||||

|

},

|

||||||

|

{

|

||||||

|

Id: "tts-1-1106",

|

||||||

|

Object: "model",

|

||||||

|

Created: 1677649963,

|

||||||

|

OwnedBy: "openai",

|

||||||

|

Permission: permission,

|

||||||

|

Root: "tts-1-1106",

|

||||||

|

Parent: nil,

|

||||||

|

},

|

||||||

|

{

|

||||||

|

Id: "tts-1-hd",

|

||||||

|

Object: "model",

|

||||||

|

Created: 1677649963,

|

||||||

|

OwnedBy: "openai",

|

||||||

|

Permission: permission,

|

||||||

|

Root: "tts-1-hd",

|

||||||

|

Parent: nil,

|

||||||

|

},

|

||||||

|

{

|

||||||

|

Id: "tts-1-hd-1106",

|

||||||

|

Object: "model",

|

||||||

|

Created: 1677649963,

|

||||||

|

OwnedBy: "openai",

|

||||||

|

Permission: permission,

|

||||||

|

Root: "tts-1-hd-1106",

|

||||||

|

Parent: nil,

|

||||||

|

},

|

||||||

{

|

{

|

||||||

Id: "gpt-3.5-turbo",

|

Id: "gpt-3.5-turbo",

|

||||||

Object: "model",

|

Object: "model",

|

||||||

@@ -315,6 +360,24 @@ func init() {

|

|||||||

Root: "claude-2",

|

Root: "claude-2",

|

||||||

Parent: nil,

|

Parent: nil,

|

||||||

},

|

},

|

||||||

|

{

|

||||||

|

Id: "claude-2.1",

|

||||||

|

Object: "model",

|

||||||

|

Created: 1677649963,

|

||||||

|

OwnedBy: "anthropic",

|

||||||

|

Permission: permission,

|

||||||

|

Root: "claude-2.1",

|

||||||

|

Parent: nil,

|

||||||

|

},

|

||||||

|

{

|

||||||

|

Id: "claude-2.0",

|

||||||

|

Object: "model",

|

||||||

|

Created: 1677649963,

|

||||||

|

OwnedBy: "anthropic",

|

||||||

|

Permission: permission,

|

||||||

|

Root: "claude-2.0",

|

||||||

|

Parent: nil,

|

||||||

|

},

|

||||||

{

|

{

|

||||||

Id: "ERNIE-Bot",

|

Id: "ERNIE-Bot",

|

||||||

Object: "model",

|

Object: "model",

|

||||||

|

|||||||

@@ -5,7 +5,6 @@ import (

|

|||||||

"encoding/json"

|

"encoding/json"

|

||||||

"fmt"

|

"fmt"

|

||||||

"io"

|

"io"

|

||||||

"log"

|

|

||||||

"net/http"

|

"net/http"

|

||||||

"one-api/common"

|

"one-api/common"

|

||||||

"strconv"

|

"strconv"

|

||||||

@@ -49,23 +48,8 @@ type AIProxyLibraryStreamResponse struct {

|

|||||||

|

|

||||||

func requestOpenAI2AIProxyLibrary(request GeneralOpenAIRequest) *AIProxyLibraryRequest {

|

func requestOpenAI2AIProxyLibrary(request GeneralOpenAIRequest) *AIProxyLibraryRequest {

|

||||||

query := ""

|

query := ""

|

||||||

|

if len(request.Messages) != 0 {

|

||||||

if request.MessagesLen() != 0 {

|

query = request.Messages[len(request.Messages)-1].StringContent()

|

||||||

if msgs, err := request.TextMessages(); err == nil {

|

|

||||||

query = msgs[len(msgs)-1].Content

|

|

||||||

} else if msgs, err := request.VisionMessages(); err == nil {

|

|

||||||

lastMsg := msgs[len(msgs)-1]

|

|

||||||

if len(lastMsg.Content) != 0 {

|

|

||||||

for i := range lastMsg.Content {

|

|

||||||

if lastMsg.Content[i].Type == OpenaiVisionMessageContentTypeText {

|

|

||||||

query = lastMsg.Content[i].Text

|

|

||||||

break

|

|

||||||

}

|

|

||||||

}

|

|

||||||

}

|

|

||||||

} else {

|

|

||||||

log.Panicf("unknown message type: %T", msgs)

|

|

||||||

}

|

|

||||||

}

|

}

|

||||||

|

|

||||||

return &AIProxyLibraryRequest{

|

return &AIProxyLibraryRequest{

|

||||||

|

|||||||

@@ -1,6 +1,7 @@

|

|||||||

package controller

|

package controller

|

||||||

|

|

||||||

import (

|

import (

|

||||||

|

"bufio"

|

||||||

"bytes"

|

"bytes"

|

||||||

"context"

|

"context"

|

||||||

"encoding/json"

|

"encoding/json"

|

||||||

@@ -11,6 +12,7 @@ import (

|

|||||||

"net/http"

|

"net/http"

|

||||||

"one-api/common"

|

"one-api/common"

|

||||||

"one-api/model"

|

"one-api/model"

|

||||||

|

"strings"

|

||||||

)

|

)

|

||||||

|

|

||||||

func relayAudioHelper(c *gin.Context, relayMode int) *OpenAIErrorWithStatusCode {

|

func relayAudioHelper(c *gin.Context, relayMode int) *OpenAIErrorWithStatusCode {

|

||||||

@@ -21,16 +23,41 @@ func relayAudioHelper(c *gin.Context, relayMode int) *OpenAIErrorWithStatusCode

|

|||||||

channelId := c.GetInt("channel_id")

|

channelId := c.GetInt("channel_id")

|

||||||

userId := c.GetInt("id")

|

userId := c.GetInt("id")

|

||||||

group := c.GetString("group")

|

group := c.GetString("group")

|

||||||

|

tokenName := c.GetString("token_name")

|

||||||

|

|

||||||

|

var ttsRequest TextToSpeechRequest

|

||||||

|

if relayMode == RelayModeAudioSpeech {

|

||||||

|

// Read JSON

|

||||||

|

err := common.UnmarshalBodyReusable(c, &ttsRequest)

|

||||||

|

// Check if JSON is valid

|

||||||

|

if err != nil {

|

||||||

|

return errorWrapper(err, "invalid_json", http.StatusBadRequest)

|

||||||

|

}

|

||||||

|

audioModel = ttsRequest.Model

|

||||||

|

// Reject input longer than 4096 (note: len counts bytes, not characters)

|

||||||

|

if len(ttsRequest.Input) > 4096 {

|

||||||

|

return errorWrapper(errors.New("input is too long (over 4096 characters)"), "text_too_long", http.StatusBadRequest)

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

preConsumedTokens := common.PreConsumedQuota

|

|

||||||

modelRatio := common.GetModelRatio(audioModel)

|

modelRatio := common.GetModelRatio(audioModel)

|

||||||

groupRatio := common.GetGroupRatio(group)

|

groupRatio := common.GetGroupRatio(group)

|

||||||

ratio := modelRatio * groupRatio

|

ratio := modelRatio * groupRatio

|

||||||

preConsumedQuota := int(float64(preConsumedTokens) * ratio)

|

var quota int

|

||||||

|

var preConsumedQuota int

|

||||||

|

switch relayMode {

|

||||||

|

case RelayModeAudioSpeech:

|

||||||

|

preConsumedQuota = int(float64(len(ttsRequest.Input)) * ratio)

|

||||||

|

quota = preConsumedQuota

|

||||||

|

default:

|

||||||

|

preConsumedQuota = int(float64(common.PreConsumedQuota) * ratio)

|

||||||

|

}

|

||||||

userQuota, err := model.CacheGetUserQuota(userId)

|

userQuota, err := model.CacheGetUserQuota(userId)

|

||||||

if err != nil {

|

if err != nil {

|

||||||

return errorWrapper(err, "get_user_quota_failed", http.StatusInternalServerError)

|

return errorWrapper(err, "get_user_quota_failed", http.StatusInternalServerError)

|

||||||

}

|

}

|

||||||

|

|

||||||

|

// Check if user quota is enough

|

||||||

if userQuota-preConsumedQuota < 0 {

|

if userQuota-preConsumedQuota < 0 {

|

||||||

return errorWrapper(errors.New("user quota is not enough"), "insufficient_user_quota", http.StatusForbidden)

|

return errorWrapper(errors.New("user quota is not enough"), "insufficient_user_quota", http.StatusForbidden)

|

||||||

}

|

}

|

||||||

@@ -70,13 +97,34 @@ func relayAudioHelper(c *gin.Context, relayMode int) *OpenAIErrorWithStatusCode

|

|||||||

}

|

}

|

||||||

|

|

||||||

fullRequestURL := getFullRequestURL(baseURL, requestURL, channelType)

|

fullRequestURL := getFullRequestURL(baseURL, requestURL, channelType)

|

||||||

requestBody := c.Request.Body

|

if relayMode == RelayModeAudioTranscription && channelType == common.ChannelTypeAzure {

|

||||||

|

// https://learn.microsoft.com/en-us/azure/ai-services/openai/whisper-quickstart?tabs=command-line#rest-api

|

||||||

|

apiVersion := GetAPIVersion(c)

|

||||||

|

fullRequestURL = fmt.Sprintf("%s/openai/deployments/%s/audio/transcriptions?api-version=%s", baseURL, audioModel, apiVersion)

|

||||||

|

}

|

||||||

|

|

||||||

|

requestBody := &bytes.Buffer{}

|

||||||

|

_, err = io.Copy(requestBody, c.Request.Body)

|

||||||

|

if err != nil {

|

||||||

|

return errorWrapper(err, "new_request_body_failed", http.StatusInternalServerError)

|

||||||

|

}

|

||||||

|