mirror of

https://github.com/songquanpeng/one-api.git

synced 2025-10-24 02:13:42 +08:00

Compare commits

20 Commits

v0.3.1...v0.3.3-alp

| Author | SHA1 | Date | |
|---|---|---|---|
| | fa79e8b7a3 | | |
| | 1cc7c20183 | | |
| | 2eee97e9b6 | | |
| | a3a1b612b0 | | |
| | 61e682ca47 | | |
| | b383983106 | | |
| | cfd587117e | | |
| | ef9dca28f5 | | |
| | 741c0b9c18 | | |
| | 3711f4a741 | | |
| | 7c6bf3e97b | | |
| | 481ba41fbd | | |
| | 2779d6629c | | |
| | e509899daf | | |
| | b53cdbaf05 | | |
| | ced89398a5 | | |
| | 09c2e3bcec | | |
| | 5cba800fa6 | | |
| | 2d39a135f2 | | |
| | 3c6834a79c | | |

47

README.md


@@ -38,6 +38,8 @@ _✨ All-in-one OpenAI interface that integrates various API access methods, ready out of the box

   <a href="https://github.com/songquanpeng/one-api#截图展示">Screenshots</a>
   ·
   <a href="https://openai.justsong.cn/">Online demo</a>
+  ·
+  <a href="https://github.com/songquanpeng/one-api#常见问题">FAQ</a>
 </p>

 > **Warning**: Upgrading from `v0.2` to `v0.3` requires a manual database migration; please run the [database migration script](./bin/migration_v0.2-v0.3.sql) by hand.

@@ -49,25 +51,28 @@ _✨ All-in-one OpenAI interface that integrates various API access methods, ready out of the box

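    + [x] **Azure OpenAI API**
    + [x] [API2D](https://api2d.com/r/197971)
    + [x] [OhMyGPT](https://aigptx.top?aff=uFpUl2Kf)
-   + [x] [CloseAI](https://console.openai-asia.com)
-   + [x] [OpenAI-SB](https://openai-sb.com)
+   + [x] [AI Proxy](https://aiproxy.io/?i=OneAPI) (invitation code: `OneAPI`)
+   + [x] [AI.LS](https://ai.ls)
    + [x] [OpenAI Max](https://openaimax.com)
+   + [x] [OpenAI-SB](https://openai-sb.com)
+   + [x] [CloseAI](https://console.openai-asia.com/r/2412)
    + [x] Custom channels: for example, a self-hosted OpenAI proxy
 2. Supports accessing multiple channels via **load balancing**.
 3. Supports **stream mode**, using streaming to produce a typewriter effect.
-4. Supports **token management**: set a token's expiry time and usage count.
-5. Supports **redemption code management**: generate and export redemption codes in bulk, and use them to top up a token.
-6. Supports **channel management**, including bulk channel creation.
-7. Supports publishing announcements, setting a top-up link, and setting the initial quota for new users.
-8. Supports rich **customization** settings:
+4. Supports **multi-machine deployment**; [see here](#多机部署).
+5. Supports **token management**: set a token's expiry time and usage count.
+6. Supports **redemption code management**: generate and export redemption codes in bulk, and use them to top up an account.
+7. Supports **channel management**, including bulk channel creation.
+8. Supports publishing announcements, setting a top-up link, and setting the initial quota for new users.
+9. Supports rich **customization** settings:
    1. Custom system name, logo, and footer.
    2. Custom home and about pages, written in HTML & Markdown or embedded from a separate web page via an iframe.
-9. Supports accessing the management API with a system access token.
-10. Supports user management, with **multiple login and registration methods**:
+10. Supports accessing the management API with a system access token.
+11. Supports user management, with **multiple login and registration methods**:
    + Email login and registration, plus password reset by email.
    + [GitHub OAuth](https://github.com/settings/applications/new).
    + WeChat Official Account authorization (requires deploying [WeChat Server](https://github.com/songquanpeng/wechat-server) separately).
-11. Once other large models open their APIs, they will be supported promptly and wrapped behind the same API access method.
+12. Once other large models open their APIs, they will be supported promptly and wrapped behind the same API access method.

 ## Deployment
 ### Deploying with Docker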

@@ -90,13 +95,10 @@ server{

           proxy_set_header X-Forwarded-For $remote_addr;
           proxy_cache_bypass $http_upgrade;
           proxy_set_header Accept-Encoding gzip;
-          proxy_buffering off;  # Important: disable proxy buffering
    }
 }
 ```
-
-Note: for SSE to work properly, Nginx proxy buffering must be turned off.

 Afterwards, configure HTTPS with Let's Encrypt's certbot:
 ```bash
 # Install certbot on Ubuntu:

@@ -133,6 +135,14 @@ sudo service nginx restart

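
 For a more detailed deployment tutorial, [see here](https://iamazing.cn/page/how-to-deploy-a-website).

+### Multi-machine deployment
+1. Set `SESSION_SECRET` to the same value on every server.
+2. `SQL_DSN` must be set so that MySQL is used instead of SQLite; configure primary/standby database replication yourself.
+3. Every secondary server must set `SYNC_FREQUENCY` so that it periodically syncs configuration from the database.
+4. Secondary servers may optionally set `FRONTEND_BASE_URL` to redirect page requests to the primary server.
+
+For details on how to use the environment variables, see [here](#环境变量).
+
 ## Configuration
 The system works out of the box.
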

@@ -157,6 +167,10 @@ sudo service nginx restart

    + Example: `SESSION_SECRET=random_string`
 3. `SQL_DSN`: when set, the specified database is used instead of SQLite.
    + Example: `SQL_DSN=root:123456@tcp(localhost:3306)/one-api`
+4. `FRONTEND_BASE_URL`: when set, the specified frontend address is used instead of the backend address.
+   + Example: `FRONTEND_BASE_URL=https://openai.justsong.cn`
+5. `SYNC_FREQUENCY`: when set, configuration is synced with the database periodically; the unit is seconds. If unset, no syncing takes place.
+   + Example: `SYNC_FREQUENCY=60`

 ### Command-line arguments
 1. `--port <port_number>`: specifies the port the server listens on; defaults to `3000`.

@@ -174,3 +188,10 @@ https://openai.justsong.cn

|

||||

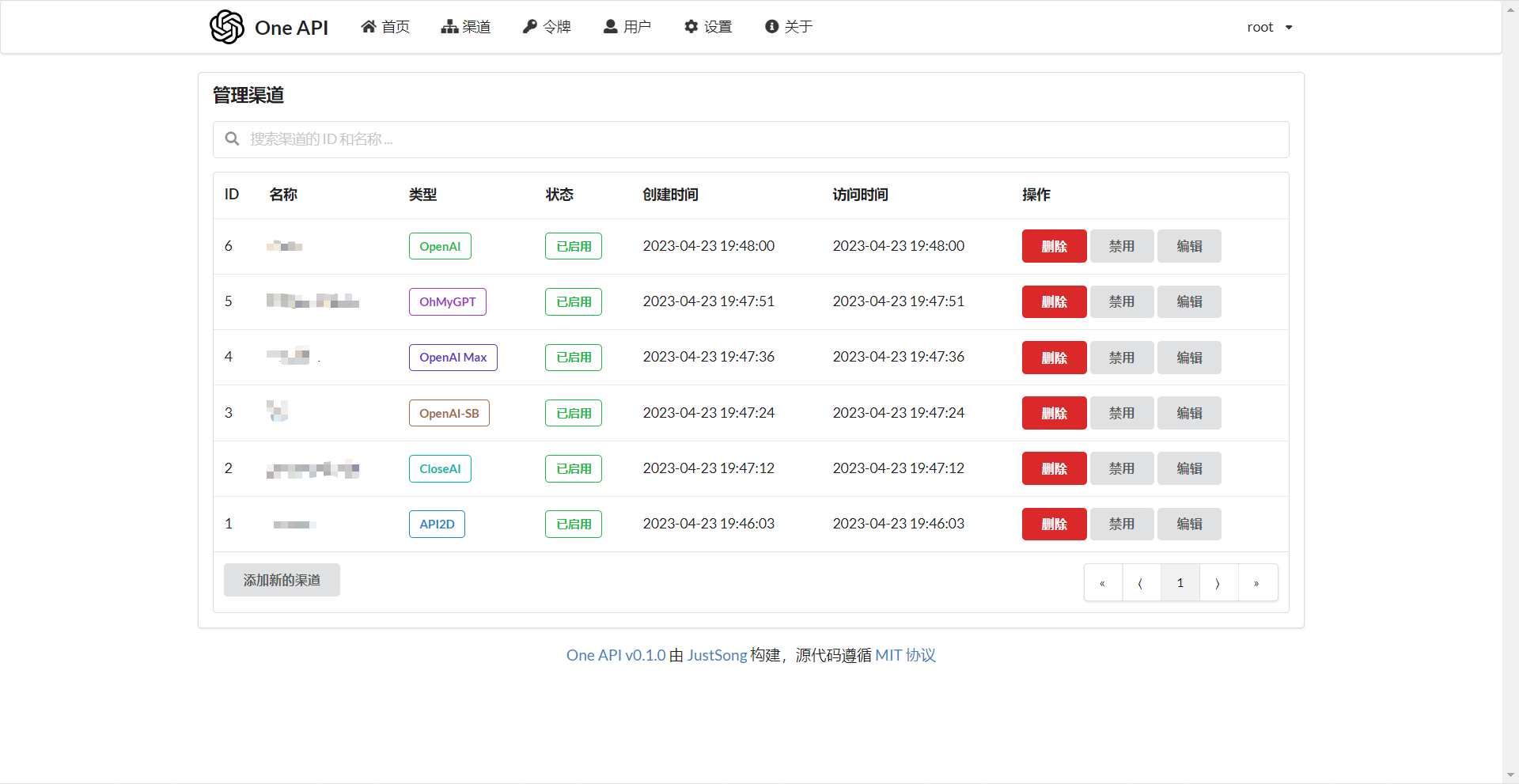

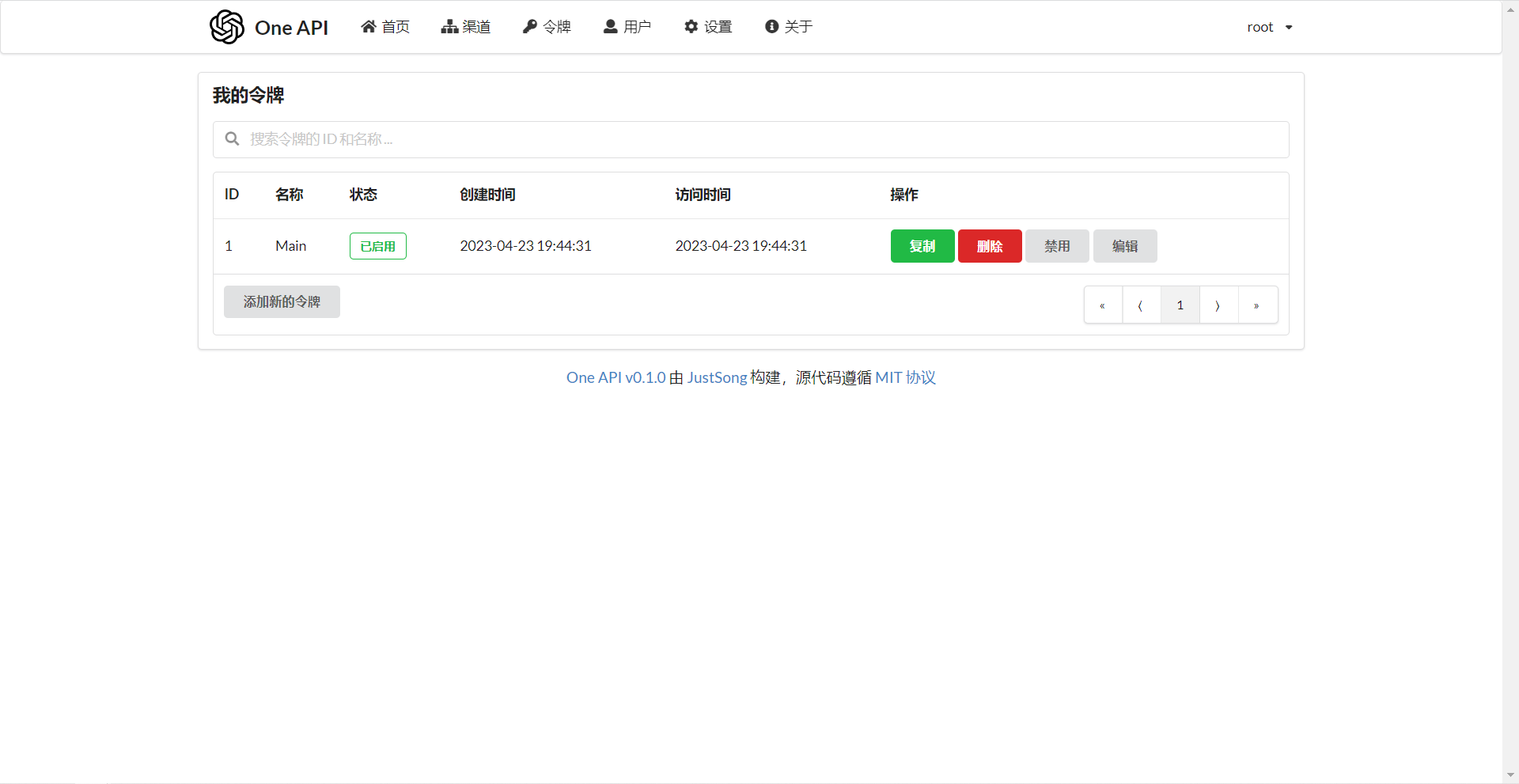

### 截图展示

|

||||

|

||||

|

||||

|

||||

## 常见问题

|

||||

1. 账户额度足够为什么提示额度不足?

|

||||

+ 请检查你的令牌额度是否足够,这个和账户额度是分开的。

|

||||

+ 令牌额度仅供用户设置最大使用量,用户可自由设置。

|

||||

2. 宝塔部署后访问出现空白页面?

|

||||

+ 自动配置的问题,详见[#97](https://github.com/songquanpeng/one-api/issues/97)。

|

||||

@@ -127,6 +127,8 @@ const (

 	ChannelTypeOpenAIMax = 6
 	ChannelTypeOhMyGPT = 7
 	ChannelTypeCustom = 8
+	ChannelTypeAILS = 9
+	ChannelTypeAIProxy = 10
 )

 var ChannelBaseURLs = []string{

@@ -139,4 +141,6 @@ var ChannelBaseURLs = []string{

 	"https://api.openaimax.com", // 6
 	"https://api.ohmygpt.com", // 7
 	"", // 8
+	"https://api.caipacity.com", // 9
+	"https://api.aiproxy.io", // 10
 }
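The two hunks above rely on a channel type constant doubling as an index into `ChannelBaseURLs`. A minimal sketch of such a lookup follows, assuming a hypothetical helper `baseURLFor` that does not appear in the diff. Entries 0 to 5 are not visible in the hunk, so they are left as empty placeholders rather than guessed.

```go
package main

import "fmt"

// Channel type constants as shown in the hunk.
const (
	ChannelTypeOpenAIMax = 6
	ChannelTypeOhMyGPT   = 7
	ChannelTypeCustom    = 8
	ChannelTypeAILS      = 9
	ChannelTypeAIProxy   = 10
)

// channelBaseURLs mirrors the visible tail of ChannelBaseURLs.
// Indexes 0-5 are placeholders because the diff does not show them.
var channelBaseURLs = []string{
	"", "", "", "", "", "", // 0-5 (not shown in the hunk)
	"https://api.openaimax.com", // 6
	"https://api.ohmygpt.com",   // 7
	"",                          // 8 (custom: no default)
	"https://api.caipacity.com", // 9
	"https://api.aiproxy.io",    // 10
}

// baseURLFor is a hypothetical helper: it returns the default base URL for a
// channel type, and false when the type is out of range or has no default.
func baseURLFor(channelType int) (string, bool) {
	if channelType < 0 || channelType >= len(channelBaseURLs) {
		return "", false
	}
	url := channelBaseURLs[channelType]
	return url, url != ""
}

func main() {
	url, ok := baseURLFor(ChannelTypeAIProxy)
	fmt.Println(url, ok)
	_, ok = baseURLFor(ChannelTypeCustom)
	fmt.Println(ok)
}
```

Because the slice is positional, adding a constant without appending the matching URL (or the reverse) would shift every later lookup, which is presumably why both hunks change together.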

65

controller/relay-utils.go

Normal file


@@ -0,0 +1,65 @@

+package controller
+
+import (
+	"fmt"
+	"github.com/pkoukk/tiktoken-go"
+	"one-api/common"
+	"strings"
+)
+
+var tokenEncoderMap = map[string]*tiktoken.Tiktoken{}
+
+func getTokenEncoder(model string) *tiktoken.Tiktoken {
+	if tokenEncoder, ok := tokenEncoderMap[model]; ok {
+		return tokenEncoder
+	}
+	tokenEncoder, err := tiktoken.EncodingForModel(model)
+	if err != nil {
+		common.SysError(fmt.Sprintf("failed to get token encoder for model %s: %s, using encoder for gpt-3.5-turbo", model, err.Error()))
+		tokenEncoder, err = tiktoken.EncodingForModel("gpt-3.5-turbo")
+		if err != nil {
+			common.FatalLog(fmt.Sprintf("failed to get token encoder for model gpt-3.5-turbo: %s", err.Error()))
+		}
+	}
+	tokenEncoderMap[model] = tokenEncoder
+	return tokenEncoder
+}
+
+func countTokenMessages(messages []Message, model string) int {
+	tokenEncoder := getTokenEncoder(model)
+	// Reference:
+	// https://github.com/openai/openai-cookbook/blob/main/examples/How_to_count_tokens_with_tiktoken.ipynb
+	// https://github.com/pkoukk/tiktoken-go/issues/6
+	//
+	// Every message follows <|start|>{role/name}\n{content}<|end|>\n
+	var tokensPerMessage int
+	var tokensPerName int
+	if strings.HasPrefix(model, "gpt-3.5") {
+		tokensPerMessage = 4
+		tokensPerName = -1 // If there's a name, the role is omitted
+	} else if strings.HasPrefix(model, "gpt-4") {
+		tokensPerMessage = 3
+		tokensPerName = 1
+	} else {
+		tokensPerMessage = 3
+		tokensPerName = 1
+	}
+	tokenNum := 0
+	for _, message := range messages {
+		tokenNum += tokensPerMessage
+		tokenNum += len(tokenEncoder.Encode(message.Content, nil, nil))
+		tokenNum += len(tokenEncoder.Encode(message.Role, nil, nil))
+		if message.Name != nil {
+			tokenNum += tokensPerName
+			tokenNum += len(tokenEncoder.Encode(*message.Name, nil, nil))
+		}
+	}
+	tokenNum += 3 // Every reply is primed with <|start|>assistant<|message|>
+	return tokenNum
+}
+
+func countTokenText(text string, model string) int {
+	tokenEncoder := getTokenEncoder(model)
+	token := tokenEncoder.Encode(text, nil, nil)
+	return len(token)
+}
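The per-message accounting in `countTokenMessages` is easier to follow with the encoder taken out of the picture. The sketch below keeps the same overhead constants but swaps in a stand-in `encodeLen` that counts whitespace-separated words, so the totals are not real token counts. The names `message`, `encodeLen`, and `countMessages` are illustrative and not part of the diff.

```go
package main

import (
	"fmt"
	"strings"
)

// message mirrors the fields countTokenMessages reads in the diff.
type message struct {
	Role    string
	Content string
	Name    *string
}

// encodeLen is a stand-in for len(tokenEncoder.Encode(s, nil, nil)).
// It counts whitespace-separated words, NOT real BPE tokens.
func encodeLen(s string) int {
	return len(strings.Fields(s))
}

// countMessages applies the same per-message and per-name overheads as the diff.
func countMessages(messages []message, model string) int {
	tokensPerMessage, tokensPerName := 3, 1
	if strings.HasPrefix(model, "gpt-3.5") {
		tokensPerMessage, tokensPerName = 4, -1 // a name replaces the role
	}
	total := 0
	for _, m := range messages {
		total += tokensPerMessage + encodeLen(m.Content) + encodeLen(m.Role)
		if m.Name != nil {
			total += tokensPerName + encodeLen(*m.Name)
		}
	}
	return total + 3 // every reply is primed with a fixed prefix
}

func main() {
	name := "alice"
	msgs := []message{{Role: "user", Content: "Hello there", Name: &name}}
	fmt.Println(countMessages(msgs, "gpt-3.5-turbo"))
	fmt.Println(countMessages(msgs, "gpt-4"))
}
```

With the word-count stand-in, a single `user` message "Hello there" costs 4 + 2 + 1 + 3 = 10 under the `gpt-3.5` rules and 3 + 2 + 1 + 3 = 9 under the `gpt-4` rules; adding a one-word name leaves the first at 10 (the -1 cancels the name) and raises the second to 11.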

@@ -6,7 +6,6 @@ import (

 	"encoding/json"
 	"fmt"
 	"github.com/gin-gonic/gin"
-	"github.com/pkoukk/tiktoken-go"
 	"io"
 	"net/http"
 	"one-api/common"

@@ -15,8 +14,9 @@ import (

 )

 type Message struct {
-	Role    string `json:"role"`
-	Content string `json:"content"`
+	Role    string  `json:"role"`
+	Content string  `json:"content"`
+	Name    *string `json:"name,omitempty"`
 }

 type ChatRequest struct {

@@ -65,16 +65,12 @@ type StreamResponse struct {

 	} `json:"choices"`
 }

-var tokenEncoder, _ = tiktoken.GetEncoding("cl100k_base")
-
-func countToken(text string) int {
-	token := tokenEncoder.Encode(text, nil, nil)
-	return len(token)
-}
-
 func Relay(c *gin.Context) {
 	err := relayHelper(c)
 	if err != nil {
+		if err.StatusCode == http.StatusTooManyRequests {
+			err.OpenAIError.Message = "负载已满,请稍后再试,或升级账户以提升服务质量。"
+		}
 		c.JSON(err.StatusCode, gin.H{
 			"error": err.OpenAIError,
 		})

@@ -146,11 +142,8 @@ func relayHelper(c *gin.Context) *OpenAIErrorWithStatusCode {

 		model_ = strings.TrimSuffix(model_, "-0314")
 		fullRequestURL = fmt.Sprintf("%s/openai/deployments/%s/%s", baseURL, model_, task)
 	}
-	var promptText string
-	for _, message := range textRequest.Messages {
-		promptText += fmt.Sprintf("%s: %s\n", message.Role, message.Content)
-	}
-	promptTokens := countToken(promptText) + 3
+
+	promptTokens := countTokenMessages(textRequest.Messages, textRequest.Model)
 	preConsumedTokens := common.PreConsumedQuota
 	if textRequest.MaxTokens != 0 {
 		preConsumedTokens = promptTokens + textRequest.MaxTokens

@@ -203,8 +196,8 @@ func relayHelper(c *gin.Context) *OpenAIErrorWithStatusCode {

 		completionRatio = 2
 	}
 	if isStream {
-		completionText := fmt.Sprintf("%s: %s\n", "assistant", streamResponseText)
-		quota = promptTokens + countToken(completionText)*completionRatio
+		responseTokens := countTokenText(streamResponseText, textRequest.Model)
+		quota = promptTokens + responseTokens*completionRatio
 	} else {
 		quota = textResponse.Usage.PromptTokens + textResponse.Usage.CompletionTokens*completionRatio
 	}
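The arithmetic in the two `relayHelper` hunks above can be sketched on its own: a pre-charge taken before the request is relayed, and a final quota computed once the response is known. The helper names `preConsumed` and `finalQuota` are hypothetical, and the default of 500 merely stands in for `common.PreConsumedQuota`, whose real value the diff does not show.

```go
package main

import "fmt"

// preConsumed mirrors the pre-charge branch: when the request sets max_tokens,
// reserve prompt + max_tokens; otherwise fall back to a default reservation.
func preConsumed(promptTokens, maxTokens, defaultQuota int) int {
	if maxTokens != 0 {
		return promptTokens + maxTokens
	}
	return defaultQuota
}

// finalQuota mirrors the settlement formula: completion tokens are weighted
// by completionRatio, prompt tokens are not.
func finalQuota(promptTokens, completionTokens, completionRatio int) int {
	return promptTokens + completionTokens*completionRatio
}

func main() {
	// 500 is an illustrative default, not the project's actual setting.
	fmt.Println(preConsumed(120, 256, 500))
	fmt.Println(finalQuota(120, 80, 2))
}
```

For a 120-token prompt with `max_tokens` of 256, the reservation is 376; if the reply turns out to be 80 tokens at a ratio of 2, the final quota is 120 + 80 × 2 = 280. In stream mode the diff now counts only the response text, where the removed code counted the text wrapped as `assistant: ...\n`.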

@@ -239,6 +232,10 @@ func relayHelper(c *gin.Context) *OpenAIErrorWithStatusCode {

 	go func() {
 		for scanner.Scan() {
 			data := scanner.Text()
+			if len(data) < 6 { // must be something wrong!
+				common.SysError("Invalid stream response: " + data)
+				continue
+			}
 			dataChan <- data
 			data = data[6:]
 			if !strings.HasPrefix(data, "[DONE]") {
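The new length guard matters because `data[6:]` panics with a slice-bounds error on any chunk shorter than six bytes, the length of the `data: ` prefix. The sketch below isolates that parsing step; `parseStream` is a hypothetical helper, and it uses the scanner's default line splitting, which may differ from how the project splits the stream.

```go
package main

import (
	"bufio"
	"fmt"
	"strings"
)

// parseStream scans an SSE body line by line, skips lines too short to carry
// the "data: " prefix, and collects every payload except the [DONE] sentinel.
func parseStream(body string) (payloads []string, skipped int) {
	scanner := bufio.NewScanner(strings.NewReader(body))
	for scanner.Scan() {
		data := scanner.Text()
		if len(data) < 6 { // too short to hold "data: "; slicing would panic
			skipped++
			continue
		}
		data = data[6:]
		if !strings.HasPrefix(data, "[DONE]") {
			payloads = append(payloads, data)
		}
	}
	return payloads, skipped
}

func main() {
	body := "data: {\"id\":1}\n\ndata: [DONE]\n"
	payloads, skipped := parseStream(body)
	fmt.Println(payloads, skipped)
}
```

With line splitting, the blank separator between events is exactly the kind of short chunk the guard skips.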

@@ -259,6 +256,7 @@ func relayHelper(c *gin.Context) *OpenAIErrorWithStatusCode {

 	c.Writer.Header().Set("Cache-Control", "no-cache")
 	c.Writer.Header().Set("Connection", "keep-alive")
 	c.Writer.Header().Set("Transfer-Encoding", "chunked")
+	c.Writer.Header().Set("X-Accel-Buffering", "no")
 	c.Stream(func(w io.Writer) bool {
 		select {
 		case data := <-dataChan:

7

main.go


@@ -47,6 +47,13 @@ func main() {


 	// Initialize options
 	model.InitOptionMap()
+	if os.Getenv("SYNC_FREQUENCY") != "" {
+		frequency, err := strconv.Atoi(os.Getenv("SYNC_FREQUENCY"))
+		if err != nil {
+			common.FatalLog(err)
+		}
+		go model.SyncOptions(frequency)
+	}

 	// Initialize HTTP server
 	server := gin.Default()
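The startup logic reads `SYNC_FREQUENCY` as a number of seconds and starts a background loop when it is set. A minimal sketch of the same idea follows, using a `time.Ticker` and a cancellable context instead of the `time.Sleep` loop in the diff. The names `parseSyncFrequency` and `syncEvery` are hypothetical, and the positivity check is an extra that the diff does not perform.

```go
package main

import (
	"context"
	"fmt"
	"strconv"
	"time"
)

// parseSyncFrequency interprets the raw SYNC_FREQUENCY value as seconds.
// ok is false when the variable is unset, which means "do not sync".
func parseSyncFrequency(raw string) (interval time.Duration, ok bool, err error) {
	if raw == "" {
		return 0, false, nil
	}
	seconds, err := strconv.Atoi(raw)
	if err != nil {
		return 0, false, err
	}
	if seconds <= 0 { // extra guard, not in the diff
		return 0, false, fmt.Errorf("SYNC_FREQUENCY must be positive, got %d", seconds)
	}
	return time.Duration(seconds) * time.Second, true, nil
}

// syncEvery calls load once per interval until ctx is cancelled.
func syncEvery(ctx context.Context, interval time.Duration, load func()) {
	ticker := time.NewTicker(interval)
	defer ticker.Stop()
	for {
		select {
		case <-ctx.Done():
			return
		case <-ticker.C:
			load()
		}
	}
}

func main() {
	interval, ok, err := parseSyncFrequency("60")
	fmt.Println(interval, ok, err)

	// Short interval and timeout so the demonstration finishes quickly.
	ctx, cancel := context.WithTimeout(context.Background(), 35*time.Millisecond)
	defer cancel()
	syncEvery(ctx, 10*time.Millisecond, func() { fmt.Println("syncing options") })
}
```

The guard is worth considering because `time.Sleep` returns immediately for a zero or negative duration, so a value such as `SYNC_FREQUENCY=0` would turn a sleep-based loop into a busy loop against the database.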

@@ -26,6 +26,7 @@ func createRootAccountIfNeed() error {

 			Status: common.UserStatusEnabled,
 			DisplayName: "Root User",
 			AccessToken: common.GetUUID(),
+			Quota: 100000000,
 		}
 		DB.Create(&rootUser)
 	}

@@ -4,6 +4,7 @@ import (

 	"one-api/common"
 	"strconv"
 	"strings"
+	"time"
 )

 type Option struct {

@@ -59,6 +60,10 @@ func InitOptionMap() {

 	common.OptionMap["ModelRatio"] = common.ModelRatio2JSONString()
 	common.OptionMap["TopUpLink"] = common.TopUpLink
 	common.OptionMapRWMutex.Unlock()
+	loadOptionsFromDatabase()
+}
+
+func loadOptionsFromDatabase() {
 	options, _ := AllOption()
 	for _, option := range options {
 		err := updateOptionMap(option.Key, option.Value)

@@ -68,6 +73,14 @@ func InitOptionMap() {

 	}
 }

+func SyncOptions(frequency int) {
+	for {
+		time.Sleep(time.Duration(frequency) * time.Second)
+		common.SysLog("Syncing options from database")
+		loadOptionsFromDatabase()
+	}
+}
+
 func UpdateOption(key string, value string) error {
 	// Save to database first
 	option := Option{

@@ -19,8 +19,7 @@ type User struct {

 	Email string `json:"email" gorm:"index" validate:"max=50"`
 	GitHubId string `json:"github_id" gorm:"column:github_id;index"`
 	WeChatId string `json:"wechat_id" gorm:"column:wechat_id;index"`
-	VerificationCode string `json:"verification_code" gorm:"-:all"` // this field is only for Email verification, don't save it to database!
-	Balance int `json:"balance" gorm:"type:int;default:0"`
+	VerificationCode string `json:"verification_code" gorm:"-:all"` // this field is only for Email verification, don't save it to database!
 	AccessToken string `json:"access_token" gorm:"type:char(32);column:access_token;uniqueIndex"` // this token is for system management
 	Quota int `json:"quota" gorm:"type:int;default:0"`
 }

@@ -2,12 +2,24 @@ package router


 import (
 	"embed"
+	"fmt"
 	"github.com/gin-gonic/gin"
+	"net/http"
+	"os"
+	"strings"
 )

 func SetRouter(router *gin.Engine, buildFS embed.FS, indexPage []byte) {
 	SetApiRouter(router)
 	SetDashboardRouter(router)
 	SetRelayRouter(router)
-	setWebRouter(router, buildFS, indexPage)
+	frontendBaseUrl := os.Getenv("FRONTEND_BASE_URL")
+	if frontendBaseUrl == "" {
+		SetWebRouter(router, buildFS, indexPage)
+	} else {
+		frontendBaseUrl = strings.TrimSuffix(frontendBaseUrl, "/")
+		router.NoRoute(func(c *gin.Context) {
+			c.Redirect(http.StatusMovedPermanently, fmt.Sprintf("%s%s", frontendBaseUrl, c.Request.RequestURI))
+		})
+	}
 }
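When `FRONTEND_BASE_URL` is set, any request that matches no API route is redirected to the same path on the frontend host. The sketch below reproduces that behaviour with the standard library's `net/http` in place of gin's `NoRoute`, so it is a stand-in for the handler in the diff rather than a copy of it. The names `redirectTarget` and `frontendRedirect` are hypothetical.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// redirectTarget joins the configured frontend base URL with the request URI,
// trimming one trailing slash from the base as the diff does.
func redirectTarget(frontendBaseURL, requestURI string) string {
	return strings.TrimSuffix(frontendBaseURL, "/") + requestURI
}

// frontendRedirect is a net/http stand-in for the gin NoRoute handler.
func frontendRedirect(frontendBaseURL string) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		target := redirectTarget(frontendBaseURL, r.RequestURI)
		http.Redirect(w, r, target, http.StatusMovedPermanently)
	})
}

func main() {
	req := httptest.NewRequest(http.MethodGet, "/token?page=2", nil)
	rec := httptest.NewRecorder()
	frontendRedirect("https://openai.justsong.cn/").ServeHTTP(rec, req)
	fmt.Println(rec.Code, rec.Header().Get("Location"))
}
```

Trimming the trailing slash avoids a doubled `//` in the target, since the request URI already starts with `/`. One design point to keep in mind is that a 301 response may be cached by browsers, which can matter if the frontend address changes later.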

@@ -10,7 +10,7 @@ import (

 	"one-api/middleware"
 )

-func setWebRouter(router *gin.Engine, buildFS embed.FS, indexPage []byte) {
+func SetWebRouter(router *gin.Engine, buildFS embed.FS, indexPage []byte) {
 	router.Use(gzip.Gzip(gzip.DefaultCompression))
 	router.Use(middleware.GlobalWebRateLimit())
 	router.Use(middleware.Cache())

@@ -1,10 +1,12 @@

 export const CHANNEL_OPTIONS = [
   { key: 1, text: 'OpenAI', value: 1, color: 'green' },
-  { key: 2, text: 'API2D', value: 2, color: 'blue' },
+  { key: 8, text: '自定义', value: 8, color: 'pink' },
   { key: 3, text: 'Azure', value: 3, color: 'olive' },
+  { key: 2, text: 'API2D', value: 2, color: 'blue' },
   { key: 4, text: 'CloseAI', value: 4, color: 'teal' },
   { key: 5, text: 'OpenAI-SB', value: 5, color: 'brown' },
   { key: 6, text: 'OpenAI Max', value: 6, color: 'violet' },
   { key: 7, text: 'OhMyGPT', value: 7, color: 'purple' },
-  { key: 8, text: '自定义', value: 8, color: 'pink' }
+  { key: 9, text: 'AI.LS', value: 9, color: 'yellow' },
+  { key: 10, text: 'AI Proxy', value: 10, color: 'purple' }
 ];

@@ -46,6 +46,9 @@ const EditChannel = () => {

     if (localInputs.base_url.endsWith('/')) {
       localInputs.base_url = localInputs.base_url.slice(0, localInputs.base_url.length - 1);
     }
+    if (localInputs.type === 3 && localInputs.other === '') {
+      localInputs.other = '2023-03-15-preview';
+    }
     let res;
     if (isEdit) {
       res = await API.put(`/api/channel/`, { ...localInputs, id: parseInt(channelId) });

@@ -1,6 +1,6 @@

 import React, { useEffect, useState } from 'react';
 import { Button, Form, Grid, Header, Segment, Statistic } from 'semantic-ui-react';
-import { API, showError, showSuccess } from '../../helpers';
+import { API, showError, showInfo, showSuccess } from '../../helpers';

 const TopUp = () => {
   const [redemptionCode, setRedemptionCode] = useState('');

@@ -9,6 +9,7 @@ const TopUp = () => {


   const topUp = async () => {
     if (redemptionCode === '') {
+      showInfo('请输入充值码!')
       return;
     }
     const res = await API.post('/api/user/topup', {

@@ -80,7 +81,7 @@ const TopUp = () => {

         <Grid.Column>
           <Statistic.Group widths='one'>
             <Statistic>
-              <Statistic.Value>{userQuota}</Statistic.Value>
+              <Statistic.Value>{userQuota.toLocaleString()}</Statistic.Value>
               <Statistic.Label>剩余额度</Statistic.Label>
             </Statistic>
           </Statistic.Group>

@@ -14,8 +14,9 @@ const EditUser = () => {

     github_id: '',
     wechat_id: '',
     email: '',
+    quota: 0,
   });
-  const { username, display_name, password, github_id, wechat_id, email } =
+  const { username, display_name, password, github_id, wechat_id, email, quota } =
     inputs;
   const handleInputChange = (e, { name, value }) => {
     setInputs((inputs) => ({ ...inputs, [name]: value }));

@@ -44,7 +45,11 @@ const EditUser = () => {

   const submit = async () => {
     let res = undefined;
     if (userId) {
-      res = await API.put(`/api/user/`, { ...inputs, id: parseInt(userId) });
+      let data = { ...inputs, id: parseInt(userId) };
+      if (typeof data.quota === 'string') {
+        data.quota = parseInt(data.quota);
+      }
+      res = await API.put(`/api/user/`, data);
     } else {
       res = await API.put(`/api/user/self`, inputs);
     }

@@ -92,6 +97,21 @@ const EditUser = () => {

               autoComplete='new-password'
             />
           </Form.Field>
+          {
+            userId && (
+              <Form.Field>
+                <Form.Input
+                  label='剩余额度'
+                  name='quota'
+                  placeholder={'请输入新的剩余额度'}
+                  onChange={handleInputChange}
+                  value={quota}
+                  type={'number'}
+                  autoComplete='new-password'
+                />
+              </Form.Field>
+            )
+          }
           <Form.Field>
             <Form.Input
               label='已绑定的 GitHub 账户'
