mirror of https://github.com/songquanpeng/one-api.git

synced 2025-10-23 01:43:42 +08:00

Compare commits: v0.5.10-1...v0.6.2-alp

96 Commits

| SHA1 |
|---|
| cf16f44970 |
| bf2e26a48f |
| 4fb22ad4ce |
| 95cfb8e8c9 |
| c6ace985c2 |
| 10a926b8f3 |
| 2df877a352 |
| 9d8967f7d3 |
| b35f3523d3 |
| 82e916b5ff |
| de18d6fe16 |
| 1d0b7fb5ae |
| f9490bb72e |
| 76467285e8 |
| df1fd9aa81 |
| 614c2e0442 |
| eac6a0b9aa |
| b747cdbc6f |
| 6b27d6659a |
| dc5b781191 |
| c880b4a9a3 |
| 565ea58e68 |
| f141a37a9e |
| 5b78886ad3 |
| 87c7c4f0e6 |
| 4c4a873890 |
| 0664bdfda1 |
| 32387d9c20 |
| bd888f2eb7 |
| cece77e533 |
| 2a5468e23c |
| d0e415893b |
| 6cf5ce9a7a |
| f598b9df87 |
| 532c50d212 |
| 2acc2f5017 |
| 604ac56305 |
| 9383b638a6 |
| 28d512a675 |
| de9a58ca0b |
| 1aa374ccfb |
| d548a01c59 |
| 2cd1a78203 |
| b9d3cb0c45 |
| ea407f0054 |
| 26e2e646cb |
| 4f214c48c6 |
| 2d760d4a01 |
| e2ed0399f0 |
| eed9f5fdf0 |
| f2c51a494c |
| 8a4d6f3327 |
| cf4e33cb12 |
| 5d60305570 |
| d062bc60e4 |
| 39c1882970 |
| 9c42c7dfd9 |
| 903aaeded0 |
| bdd4be562d |
| 37afb313b5 |
| c9ebcab8b8 |
| 86261cc656 |
| 8491785c9d |
| e848a3f7fa |
| 318adf5985 |
| 965d7fc3d2 |
| aa3f605894 |
| 7b8eff1f22 |
| e80cd508ba |
| d37f836d53 |
| e0b2d1ae47 |
| 797ead686b |
| 0d22cf9ead |
| 48989d4a0b |
| 6227eee5bc |
| cbf8f07747 |
| 4a96031ce6 |
| 92886093ae |
| 0c022f17cb |
| 83f95935de |
| aa03c89133 |
| 505817ca17 |
| cb5a3df616 |
| 7772064d87 |
| c50c609565 |
| 498dea2dbb |
| c725cc8842 |
| af8908db54 |
| d8029550f7 |
| f44fbe3fe7 |
| 1c8922153d |
| f3c07e1451 |
| 40ceb29e54 |
| 0699ecd0af |
| ee9e746520 |
| a763681c2e |

46

.air.toml

@@ -1,46 +0,0 @@

root = "."

testdata_dir = "testdata"

tmp_dir = "tmp"

[build]

args_bin = []

bin = "./tmp/main"

cmd = "go build -o ./tmp/main ."

delay = 1000

exclude_dir = ["assets", "tmp", "vendor", "testdata", "web"]

exclude_file = []

exclude_regex = ["_test.go"]

exclude_unchanged = false

follow_symlink = false

full_bin = ""

include_dir = []

include_ext = ["go", "tpl", "tmpl", "html"]

include_file = []

kill_delay = "0s"

log = "build-errors.log"

poll = false

poll_interval = 0

post_cmd = []

pre_cmd = []

rerun = false

rerun_delay = 500

send_interrupt = false

stop_on_error = false

[color]

app = ""

build = "yellow"

main = "magenta"

runner = "green"

watcher = "cyan"

[log]

main_only = false

time = false

[misc]

clean_on_exit = false

[screen]

clear_on_rebuild = false

keep_scroll = true

49

.github/workflows/docker-image-amd64-en.yml

vendored

Normal file

@@ -0,0 +1,49 @@

name: Publish Docker image (amd64, English)

on:

push:

tags:

- '*'

workflow_dispatch:

inputs:

name:

description: 'reason'

required: false

jobs:

push_to_registries:

name: Push Docker image to multiple registries

runs-on: ubuntu-latest

permissions:

packages: write

contents: read

steps:

- name: Check out the repo

uses: actions/checkout@v3

- name: Save version info

run: |

git describe --tags > VERSION

- name: Translate

run: |

python ./i18n/translate.py --repository_path . --json_file_path ./i18n/en.json

- name: Log in to Docker Hub

uses: docker/login-action@v2

with:

username: ${{ secrets.DOCKERHUB_USERNAME }}

password: ${{ secrets.DOCKERHUB_TOKEN }}

- name: Extract metadata (tags, labels) for Docker

id: meta

uses: docker/metadata-action@v4

with:

images: |

justsong/one-api-en

- name: Build and push Docker images

uses: docker/build-push-action@v3

with:

context: .

push: true

tags: ${{ steps.meta.outputs.tags }}

labels: ${{ steps.meta.outputs.labels }}

54

.github/workflows/docker-image-amd64.yml

vendored

Normal file

@@ -0,0 +1,54 @@

name: Publish Docker image (amd64)

on:

push:

tags:

- '*'

workflow_dispatch:

inputs:

name:

description: 'reason'

required: false

jobs:

push_to_registries:

name: Push Docker image to multiple registries

runs-on: ubuntu-latest

permissions:

packages: write

contents: read

steps:

- name: Check out the repo

uses: actions/checkout@v3

- name: Save version info

run: |

git describe --tags > VERSION

- name: Log in to Docker Hub

uses: docker/login-action@v2

with:

username: ${{ secrets.DOCKERHUB_USERNAME }}

password: ${{ secrets.DOCKERHUB_TOKEN }}

- name: Log in to the Container registry

uses: docker/login-action@v2

with:

registry: ghcr.io

username: ${{ github.actor }}

password: ${{ secrets.GITHUB_TOKEN }}

- name: Extract metadata (tags, labels) for Docker

id: meta

uses: docker/metadata-action@v4

with:

images: |

justsong/one-api

ghcr.io/${{ github.repository }}

- name: Build and push Docker images

uses: docker/build-push-action@v3

with:

context: .

push: true

tags: ${{ steps.meta.outputs.tags }}

labels: ${{ steps.meta.outputs.labels }}

62

.github/workflows/docker-image-arm64.yml

vendored

Normal file

@@ -0,0 +1,62 @@

name: Publish Docker image (arm64)

on:

push:

tags:

- '*'

- '!*-alpha*'

workflow_dispatch:

inputs:

name:

description: 'reason'

required: false

jobs:

push_to_registries:

name: Push Docker image to multiple registries

runs-on: ubuntu-latest

permissions:

packages: write

contents: read

steps:

- name: Check out the repo

uses: actions/checkout@v3

- name: Save version info

run: |

git describe --tags > VERSION

- name: Set up QEMU

uses: docker/setup-qemu-action@v2

- name: Set up Docker Buildx

uses: docker/setup-buildx-action@v2

- name: Log in to Docker Hub

uses: docker/login-action@v2

with:

username: ${{ secrets.DOCKERHUB_USERNAME }}

password: ${{ secrets.DOCKERHUB_TOKEN }}

- name: Log in to the Container registry

uses: docker/login-action@v2

with:

registry: ghcr.io

username: ${{ github.actor }}

password: ${{ secrets.GITHUB_TOKEN }}

- name: Extract metadata (tags, labels) for Docker

id: meta

uses: docker/metadata-action@v4

with:

images: |

justsong/one-api

ghcr.io/${{ github.repository }}

- name: Build and push Docker images

uses: docker/build-push-action@v3

with:

context: .

platforms: linux/amd64,linux/arm64

push: true

tags: ${{ steps.meta.outputs.tags }}

labels: ${{ steps.meta.outputs.labels }}
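The arm64 workflow above, like the three release workflows below, triggers on tags matching `'*'` but not `'!*-alpha*'`, while both amd64 image workflows list only `'*'`; a hypothetical tag such as `v0.6.2-alpha.1` would therefore start only the amd64 image builds. GitHub Actions evaluates these filter lists in order, and a later matching `!` pattern excludes a ref that an earlier pattern included. The Go sketch below approximates that rule; `matchesTagFilters` is a hypothetical helper, the tag names are illustrative, and `path.Match` is only a stand-in for GitHub's glob syntax (its `*` likewise stops at `/`, but it has no `**` or `+`).

```go
package main

import (
	"fmt"
	"path"
	"strings"
)

// matchesTagFilters approximates GitHub Actions' on.push.tags evaluation:
// patterns are checked in order, a plain pattern includes the tag, a
// "!"-prefixed pattern excludes it, and the last matching pattern wins.
// path.Match stands in for GitHub's glob syntax (assumption: only simple
// "*" and "?" patterns are used, as in the workflows above).
func matchesTagFilters(tag string, patterns []string) bool {
	included := false
	for _, p := range patterns {
		negate := strings.HasPrefix(p, "!")
		ok, err := path.Match(strings.TrimPrefix(p, "!"), tag)
		if err != nil || !ok {
			continue // malformed or non-matching pattern: no effect
		}
		included = !negate
	}
	return included
}

func main() {
	releaseFilters := []string{"*", "!*-alpha*"} // arm64 image and release workflows
	amd64Filters := []string{"*"}                // amd64 image workflows
	// Hypothetical tag names, for illustration only.
	for _, tag := range []string{"v0.6.2", "v0.6.2-alpha.1"} {
		fmt.Printf("%s: release/arm64=%t amd64=%t\n", tag,
			matchesTagFilters(tag, releaseFilters),
			matchesTagFilters(tag, amd64Filters))
	}
}
```

Because an unmatched tag stays excluded, a list containing only `!` patterns never triggers anything; that is why the negative filter is paired with `'*'`.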

62

.github/workflows/docker-image.yml

vendored

@@ -1,62 +0,0 @@

name: one-api docker image

on:

push:

branches:

- main

tags:

- "v*"

env:

# github.repository as <account>/<repo>

IMAGE_NAME: martialbe/one-api

jobs:

build-and-push:

runs-on: ubuntu-latest

permissions:

packages: write

contents: read

steps:

- name: Check out the repo

uses: actions/checkout@v3

with:

fetch-depth: 0

- name: Set up QEMU

uses: docker/setup-qemu-action@v2

- name: Set up Docker Buildx

uses: docker/setup-buildx-action@v2

- name: Login to GHCR

uses: docker/login-action@v2

with:

registry: ghcr.io

username: ${{ github.repository_owner }}

password: ${{ secrets.GT_Token }}

- name: Docker meta

id: meta

uses: docker/metadata-action@v4

with:

# list of Docker images to use as base name for tags

images: ghcr.io/${{ env.IMAGE_NAME }}

# generate Docker tags based on the following events/attributes

tags: |

type=raw,value=dev,enable=${{ github.ref == 'refs/heads/main' }}

type=raw,value=latest,enable=${{ startsWith(github.ref, 'refs/tags/') }}

type=pep440,pattern={{raw}},enable=${{ startsWith(github.ref, 'refs/tags/') }}

- name: Build and push

uses: docker/build-push-action@v4

with:

context: .

platforms: linux/amd64

build-args: |

COMMIT_SHA=${{ fromJSON(steps.meta.outputs.json).labels['org.opencontainers.image.revision'] }}

push: true

tags: ${{ steps.meta.outputs.tags }}

labels: ${{ steps.meta.outputs.labels }}

cache-from: type=gha

cache-to: type=gha,mode=max

19

.github/workflows/linux-release.yml

vendored

@@ -5,8 +5,13 @@ permissions:

on:

push:

tags:

- "*"

- "!*-alpha*"

- '*'

- '!*-alpha*'

workflow_dispatch:

inputs:

name:

description: 'reason'

required: false

jobs:

release:

runs-on: ubuntu-latest

@@ -23,17 +28,17 @@ jobs:

CI: ""

run: |

cd web

npm install

REACT_APP_VERSION=$(git describe --tags) npm run build

git describe --tags > VERSION

REACT_APP_VERSION=$(git describe --tags) chmod u+x ./build.sh && ./build.sh

cd ..

- name: Set up Go

uses: actions/setup-go@v3

with:

go-version: ">=1.18.0"

go-version: '>=1.18.0'

- name: Build Backend (amd64)

run: |

go mod download

go build -ldflags "-s -w -X 'one-api/common.Version=$(git describe --tags)' -extldflags '-static'" -o one-api

go build -ldflags "-s -w -X 'github.com/songquanpeng/one-api/common.Version=$(git describe --tags)' -extldflags '-static'" -o one-api

- name: Build Backend (arm64)

run: |

@@ -51,4 +56,4 @@ jobs:

draft: true

generate_release_notes: true

env:

GITHUB_TOKEN: ${{ secrets.GT_Token }}

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
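Every backend build in this comparison (the three release workflows and the Dockerfile) changes the `-X` target from `one-api/common.Version` to `github.com/songquanpeng/one-api/common.Version`, presumably because the module path in `go.mod` changed. The linker flag `-X importpath.name=value` only sets a string variable whose full import path matches exactly, and the build does not fail when nothing matches, so a stale path silently leaves the default version in the binary. The single-file sketch below shows the mechanism; `Version` is a stand-in for `common.Version`, and its default `v0.0.0` is an assumption for illustration.

```go
package main

import "fmt"

// Version stands in for common.Version. The linker's -X flag can overwrite it
// because it is a package-level string initialized to a constant expression
// (-X has no effect on variables initialized by function calls).
// Example: go build -ldflags "-X 'main.Version=v0.6.2'"
var Version = "v0.0.0"

func main() {
	fmt.Println("version:", Version)
}
```

Built with `go build -ldflags "-X 'main.Version=v0.6.2'"`, this program should print `version: v0.6.2`, while a plain `go build` keeps `version: v0.0.0`. For a variable in a non-`main` package, the part before `.Version` must be that package's full import path, which is exactly what the updated workflow lines supply.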

19

.github/workflows/macos-release.yml

vendored

@@ -5,8 +5,13 @@ permissions:

on:

push:

tags:

- "*"

- "!*-alpha*"

- '*'

- '!*-alpha*'

workflow_dispatch:

inputs:

name:

description: 'reason'

required: false

jobs:

release:

runs-on: macos-latest

@@ -23,17 +28,17 @@ jobs:

CI: ""

run: |

cd web

npm install

REACT_APP_VERSION=$(git describe --tags) npm run build

git describe --tags > VERSION

REACT_APP_VERSION=$(git describe --tags) chmod u+x ./build.sh && ./build.sh

cd ..

- name: Set up Go

uses: actions/setup-go@v3

with:

go-version: ">=1.18.0"

go-version: '>=1.18.0'

- name: Build Backend

run: |

go mod download

go build -ldflags "-X 'one-api/common.Version=$(git describe --tags)'" -o one-api-macos

go build -ldflags "-X 'github.com/songquanpeng/one-api/common.Version=$(git describe --tags)'" -o one-api-macos

- name: Release

uses: softprops/action-gh-release@v1

if: startsWith(github.ref, 'refs/tags/')

@@ -42,4 +47,4 @@ jobs:

draft: true

generate_release_notes: true

env:

GITHUB_TOKEN: ${{ secrets.GT_Token }}

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

19

.github/workflows/windows-release.yml

vendored

@@ -5,8 +5,13 @@ permissions:

on:

push:

tags:

- "*"

- "!*-alpha*"

- '*'

- '!*-alpha*'

workflow_dispatch:

inputs:

name:

description: 'reason'

required: false

jobs:

release:

runs-on: windows-latest

@@ -25,18 +30,18 @@ jobs:

env:

CI: ""

run: |

cd web

cd web/default

npm install

REACT_APP_VERSION=$(git describe --tags) npm run build

cd ..

cd ../..

- name: Set up Go

uses: actions/setup-go@v3

with:

go-version: ">=1.18.0"

go-version: '>=1.18.0'

- name: Build Backend

run: |

go mod download

go build -ldflags "-s -w -X 'one-api/common.Version=$(git describe --tags)'" -o one-api.exe

go build -ldflags "-s -w -X 'github.com/songquanpeng/one-api/common.Version=$(git describe --tags)'" -o one-api.exe

- name: Release

uses: softprops/action-gh-release@v1

if: startsWith(github.ref, 'refs/tags/')

@@ -45,4 +50,4 @@ jobs:

draft: true

generate_release_notes: true

env:

GITHUB_TOKEN: ${{ secrets.GT_Token }}

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

3

.gitignore

vendored

@@ -7,5 +7,4 @@ build

*.db-journal

logs

data

tmp/

.env

/web/node_modules

19

Dockerfile

@@ -1,10 +1,15 @@

FROM node:16 as builder

WORKDIR /build

COPY web/package.json .

RUN npm install

COPY ./web .

WORKDIR /web

COPY ./VERSION .

COPY ./web .

WORKDIR /web/default

RUN npm install

RUN DISABLE_ESLINT_PLUGIN='true' REACT_APP_VERSION=$(cat VERSION) npm run build

WORKDIR /web/berry

RUN npm install

RUN DISABLE_ESLINT_PLUGIN='true' REACT_APP_VERSION=$(cat VERSION) npm run build

FROM golang AS builder2

@@ -17,8 +22,8 @@ WORKDIR /build

ADD go.mod go.sum ./

RUN go mod download

COPY . .

COPY --from=builder /build/build ./web/build

RUN go build -ldflags "-s -w -X 'one-api/common.Version=$(cat VERSION)' -extldflags '-static'" -o one-api

COPY --from=builder /web/build ./web/build

RUN go build -ldflags "-s -w -X 'github.com/songquanpeng/one-api/common.Version=$(cat VERSION)' -extldflags '-static'" -o one-api

FROM alpine

@@ -30,4 +35,4 @@ RUN apk update \

COPY --from=builder2 /build/one-api /

EXPOSE 3000

WORKDIR /data

ENTRYPOINT ["/one-api"]

ENTRYPOINT ["/one-api"]

142

README.en.md

142

README.en.md

@@ -3,45 +3,35 @@

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://github.com/songquanpeng/one-api"><img src="https://raw.githubusercontent.com/songquanpeng/one-api/main/web/public/logo.png" width="150" height="150" alt="one-api logo"></a>

|

||||

<a href="https://github.com/songquanpeng/one-api"><img src="https://raw.githubusercontent.com/songquanpeng/one-api/main/web/default/public/logo.png" width="150" height="150" alt="one-api logo"></a>

|

||||

</p>

|

||||

|

||||

<div align="center">

|

||||

|

||||

# One API

|

||||

|

||||

_This project is a derivative of [one-api](https://github.com/songquanpeng/one-api), where the main focus has been on modularizing the module code from the original project and modifying the frontend interface. This project also adheres to the MIT License._

|

||||

|

||||

<p align="center">

|

||||

<a href="https://raw.githubusercontent.com/MartialBE/one-api/main/LICENSE">

|

||||

<img src="https://img.shields.io/github/license/MartialBE/one-api?color=brightgreen" alt="license">

|

||||

</a>

|

||||

<a href="https://github.com/MartialBE/one-api/releases/latest">

|

||||

<img src="https://img.shields.io/github/v/release/MartialBE/one-api?color=brightgreen&include_prereleases" alt="release">

|

||||

</a>

|

||||

<a href="https://github.com/users/MartialBE/packages/container/package/one-api">

|

||||

<img src="https://img.shields.io/badge/docker-ghcr.io-blue" alt="docker">

|

||||

</a>

|

||||

<a href="https://goreportcard.com/report/github.com/MartialBE/one-api">

|

||||

<img src="https://goreportcard.com/badge/github.com/MartialBE/one-api" alt="GoReportCard">

|

||||

</a>

|

||||

</p>

|

||||

|

||||

**Please do not mix with the original version, as the different channel ID may cause data disorder.**

|

||||

|

||||

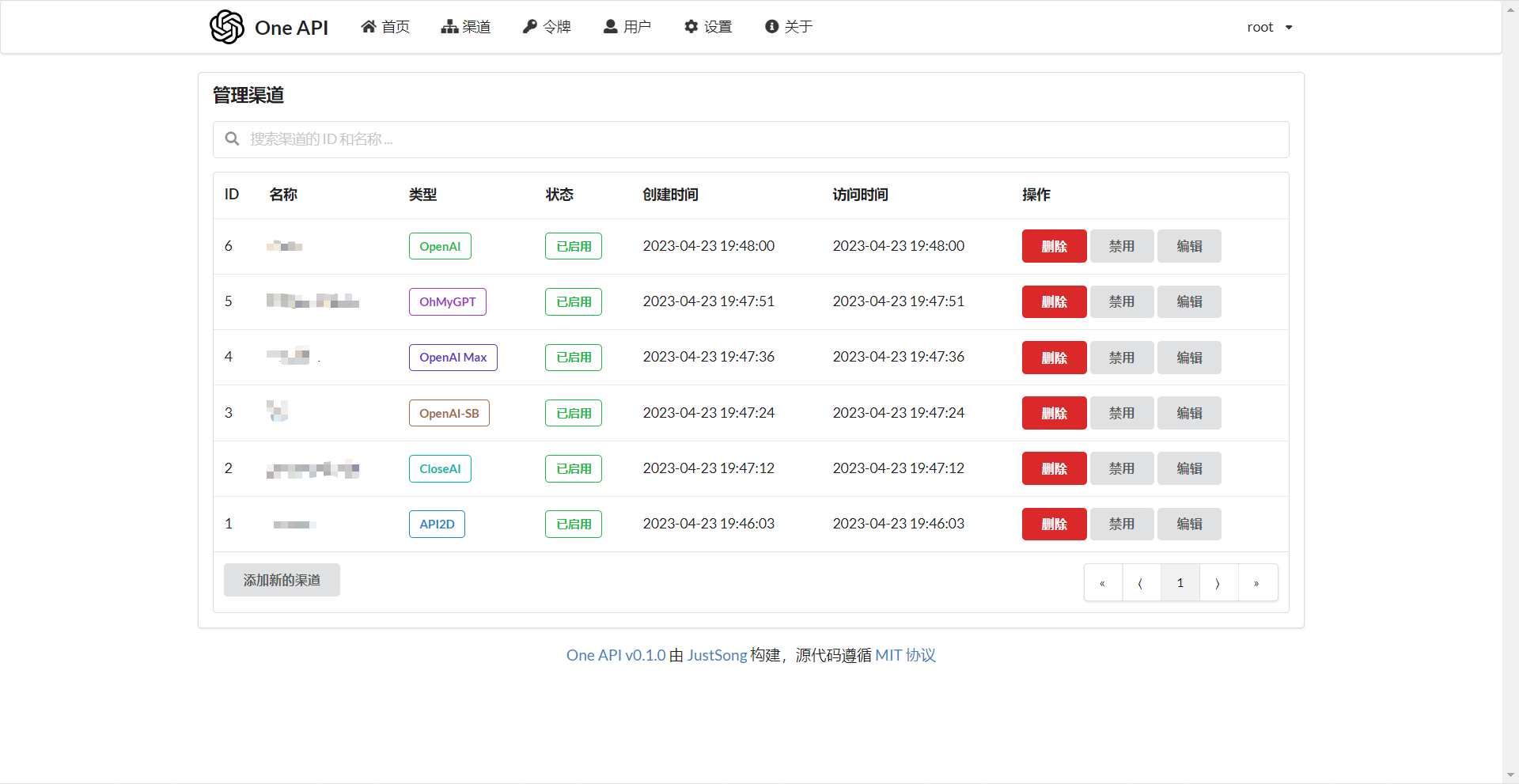

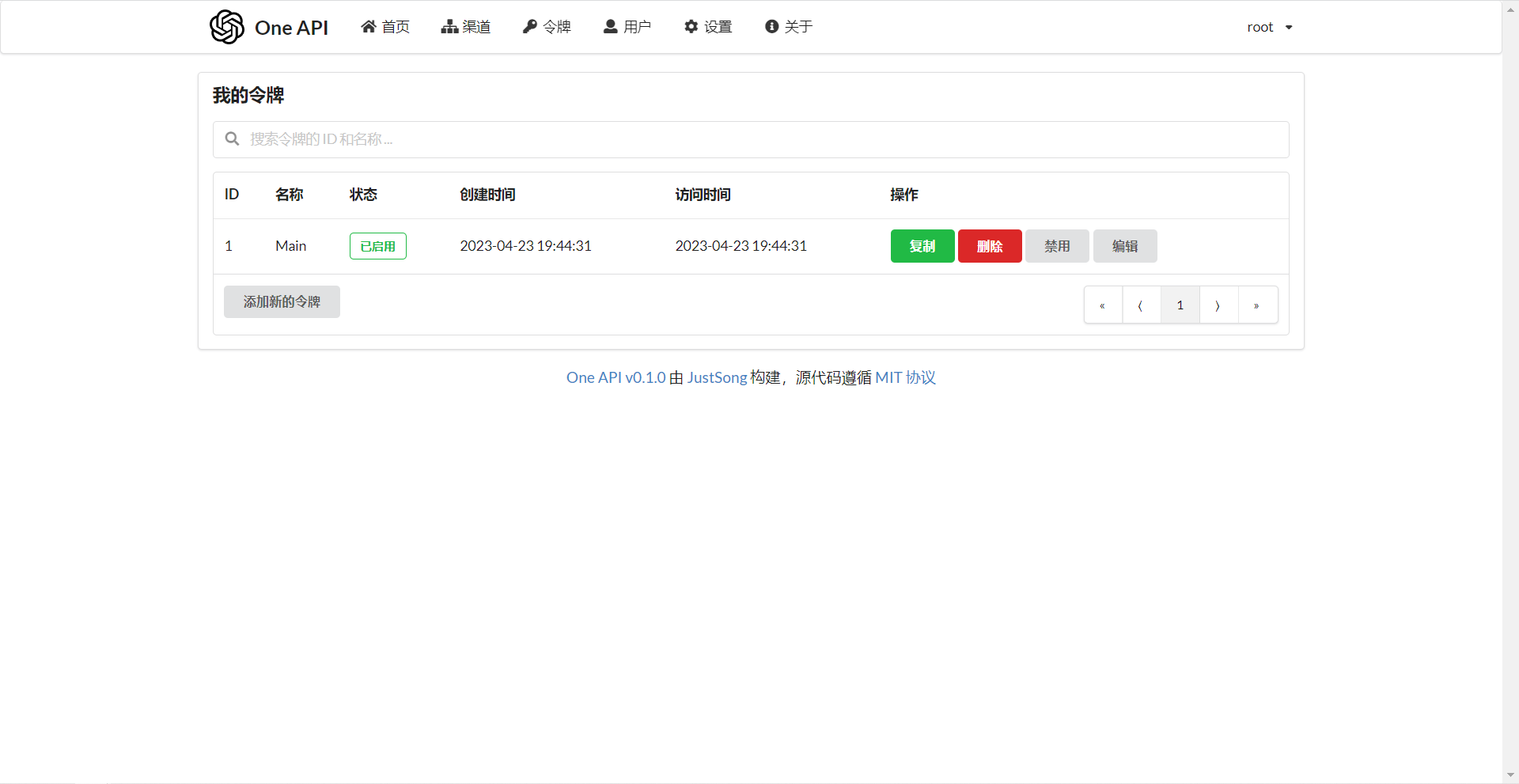

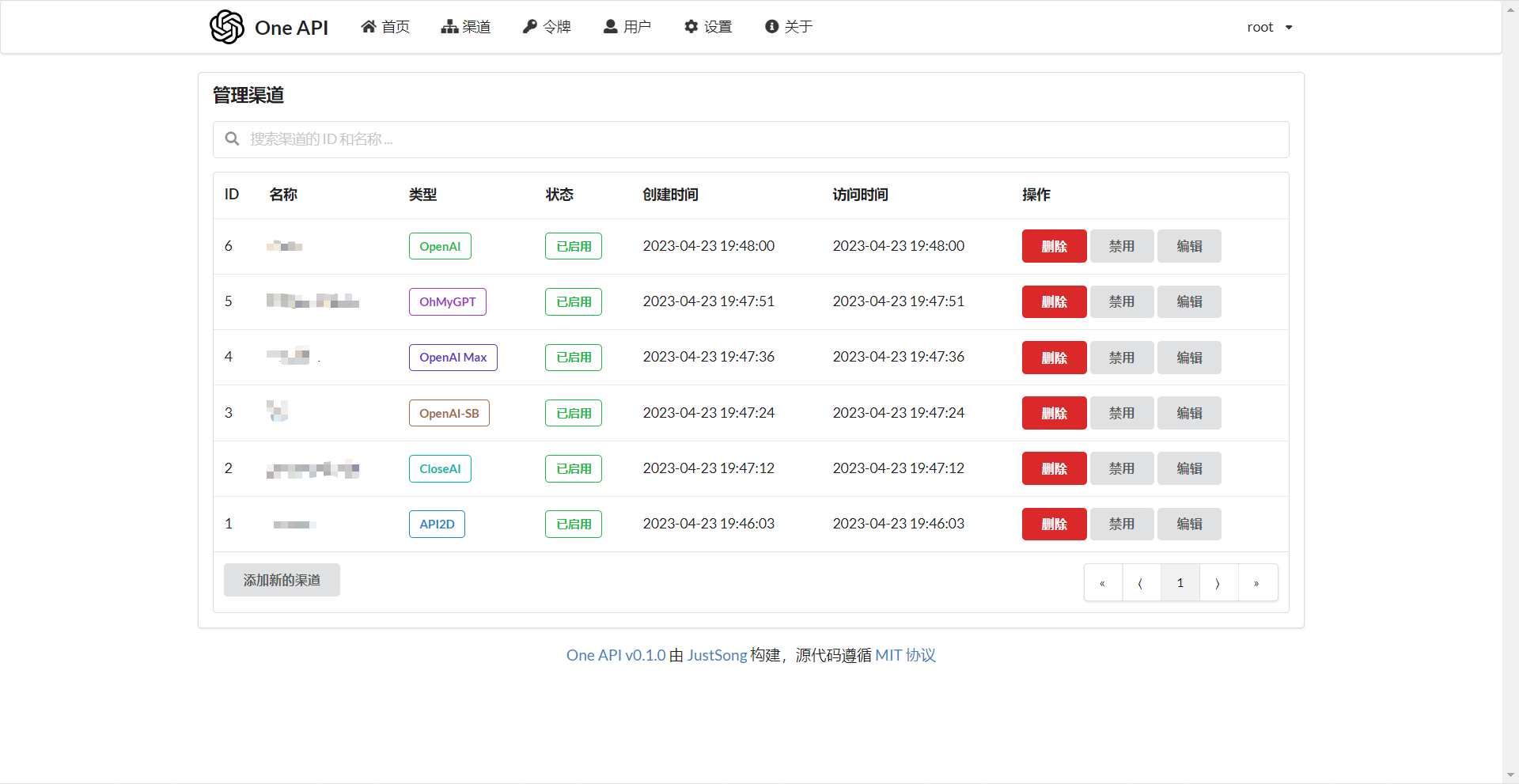

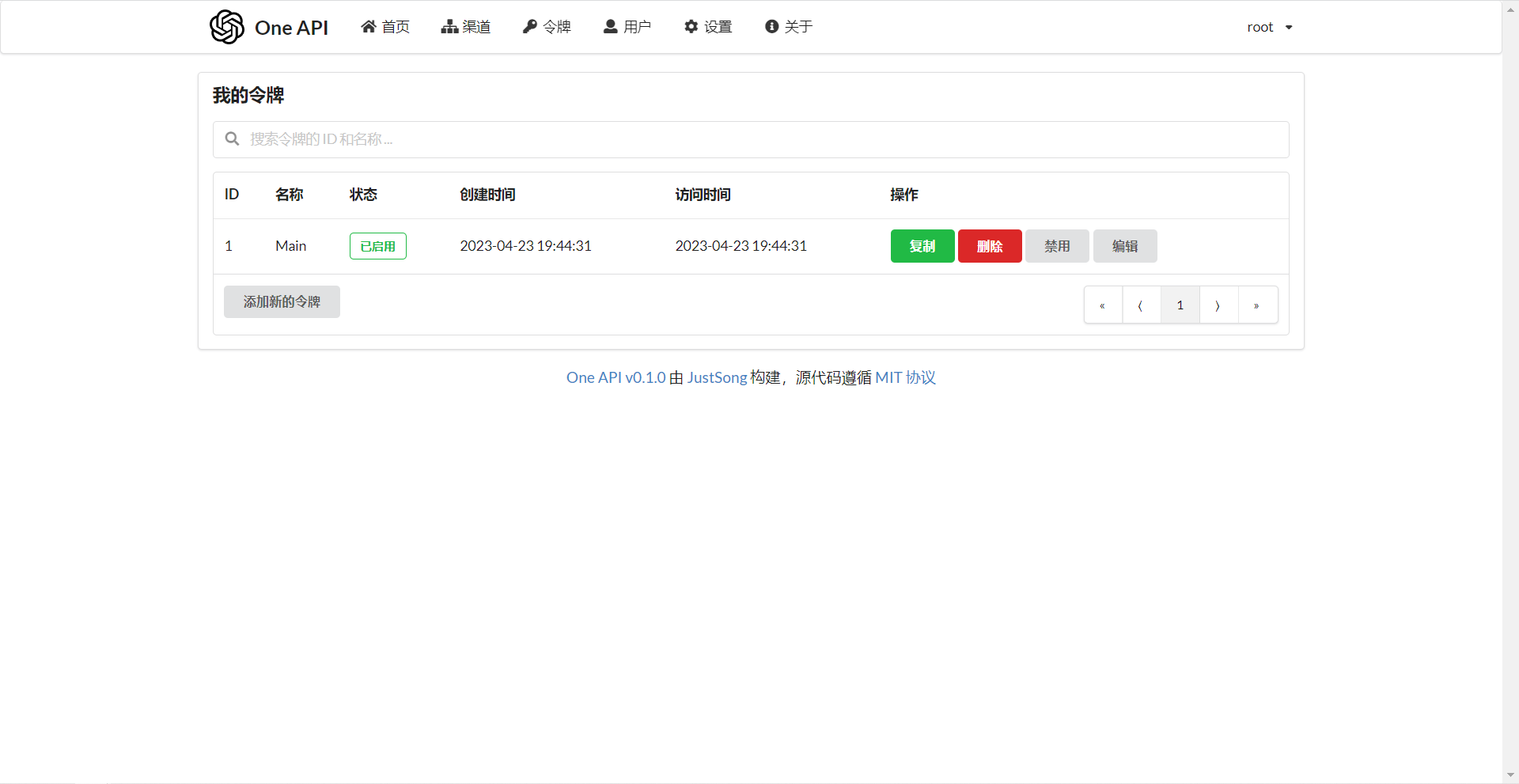

## Screenshots

|

||||

|

||||

|

||||

|

||||

|

||||

_The following is the original project description:_

|

||||

|

||||

---

|

||||

|

||||

_✨ Access all LLM through the standard OpenAI API format, easy to deploy & use ✨_

|

||||

|

||||

</div>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://raw.githubusercontent.com/songquanpeng/one-api/main/LICENSE">

|

||||

<img src="https://img.shields.io/github/license/songquanpeng/one-api?color=brightgreen" alt="license">

|

||||

</a>

|

||||

<a href="https://github.com/songquanpeng/one-api/releases/latest">

|

||||

<img src="https://img.shields.io/github/v/release/songquanpeng/one-api?color=brightgreen&include_prereleases" alt="release">

|

||||

</a>

|

||||

<a href="https://hub.docker.com/repository/docker/justsong/one-api">

|

||||

<img src="https://img.shields.io/docker/pulls/justsong/one-api?color=brightgreen" alt="docker pull">

|

||||

</a>

|

||||

<a href="https://github.com/songquanpeng/one-api/releases/latest">

|

||||

<img src="https://img.shields.io/github/downloads/songquanpeng/one-api/total?color=brightgreen&include_prereleases" alt="release">

|

||||

</a>

|

||||

<a href="https://goreportcard.com/report/github.com/songquanpeng/one-api">

|

||||

<img src="https://goreportcard.com/badge/github.com/songquanpeng/one-api" alt="GoReportCard">

|

||||

</a>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<a href="#deployment">Deployment Tutorial</a>

|

||||

·

|

||||

@@ -67,14 +57,13 @@ _✨ Access all LLM through the standard OpenAI API format, easy to deploy & use

> **Note**: The latest image pulled from Docker may be an `alpha` release. Specify the version manually if you require stability.

## Features

1. Support for multiple large models:

- [x] [OpenAI ChatGPT Series Models](https://platform.openai.com/docs/guides/gpt/chat-completions-api) (Supports [Azure OpenAI API](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference))

- [x] [Anthropic Claude Series Models](https://anthropic.com)

- [x] [Google PaLM2 and Gemini Series Models](https://developers.generativeai.google)

- [x] [Baidu Wenxin Yiyuan Series Models](https://cloud.baidu.com/doc/WENXINWORKSHOP/index.html)

- [x] [Alibaba Tongyi Qianwen Series Models](https://help.aliyun.com/document_detail/2400395.html)

- [x] [Zhipu ChatGLM Series Models](https://bigmodel.cn)

+ [x] [OpenAI ChatGPT Series Models](https://platform.openai.com/docs/guides/gpt/chat-completions-api) (Supports [Azure OpenAI API](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference))

+ [x] [Anthropic Claude Series Models](https://anthropic.com)

+ [x] [Google PaLM2 and Gemini Series Models](https://developers.generativeai.google)

+ [x] [Baidu Wenxin Yiyuan Series Models](https://cloud.baidu.com/doc/WENXINWORKSHOP/index.html)

+ [x] [Alibaba Tongyi Qianwen Series Models](https://help.aliyun.com/document_detail/2400395.html)

+ [x] [Zhipu ChatGLM Series Models](https://bigmodel.cn)

2. Supports access to multiple channels through **load balancing**.

3. Supports **stream mode** that enables typewriter-like effect through stream transmission.

4. Supports **multi-machine deployment**. [See here](#multi-machine-deployment) for more details.

@@ -93,15 +82,13 @@ _✨ Access all LLM through the standard OpenAI API format, easy to deploy & use

15. Supports management API access through system access tokens.

16. Supports Cloudflare Turnstile user verification.

17. Supports user management and multiple user login/registration methods:

- Email login/registration and password reset via email.

- [GitHub OAuth](https://github.com/settings/applications/new).

- WeChat Official Account authorization (requires additional deployment of [WeChat Server](https://github.com/songquanpeng/wechat-server)).

+ Email login/registration and password reset via email.

+ [GitHub OAuth](https://github.com/settings/applications/new).

+ WeChat Official Account authorization (requires additional deployment of [WeChat Server](https://github.com/songquanpeng/wechat-server)).

18. Immediate support and encapsulation of other major model APIs as they become available.

## Deployment

### Docker Deployment

Deployment command: `docker run --name one-api -d --restart always -p 3000:3000 -e TZ=Asia/Shanghai -v /home/ubuntu/data/one-api:/data justsong/one-api-en`

Update command: `docker run --rm -v /var/run/docker.sock:/var/run/docker.sock containrrr/watchtower -cR`

@@ -111,11 +98,10 @@ The first `3000` in `-p 3000:3000` is the port of the host, which can be modifie

Data will be saved in the `/home/ubuntu/data/one-api` directory on the host. Ensure that the directory exists and has write permissions, or change it to a suitable directory.

Nginx reference configuration:

```

server{

server_name openai.justsong.cn; # Modify your domain name accordingly

location / {

client_max_body_size 64m;

proxy_http_version 1.1;

@@ -129,7 +115,6 @@ server{

```

Next, configure HTTPS with Let's Encrypt certbot:

```bash

# Install certbot on Ubuntu:

sudo snap install --classic certbot

@@ -144,23 +129,20 @@ sudo service nginx restart

The initial account username is `root` and password is `123456`.

### Manual Deployment

1. Download the executable file from [GitHub Releases](https://github.com/songquanpeng/one-api/releases/latest) or compile from source:

```shell

git clone https://github.com/songquanpeng/one-api.git

# Build the frontend

cd one-api/web

cd one-api/web/default

npm install

npm run build

# Build the backend

cd ..

cd ../..

go mod download

go build -ldflags "-s -w" -o one-api

```

2. Run:

```shell

chmod u+x one-api

@@ -171,7 +153,6 @@ The initial account username is `root` and password is `123456`.

For more detailed deployment tutorials, please refer to [this page](https://iamazing.cn/page/how-to-deploy-a-website).

### Multi-machine Deployment

1. Set the same `SESSION_SECRET` for all servers.

2. Set `SQL_DSN` and use MySQL instead of SQLite. All servers should connect to the same database.

3. Set the `NODE_TYPE` for all non-master nodes to `slave`.

@@ -183,13 +164,11 @@ For more detailed deployment tutorials, please refer to [this page](https://iama

Please refer to the [environment variables](#environment-variables) section for details on using environment variables.

### Deployment on Control Panels (e.g., Baota)

Refer to [#175](https://github.com/songquanpeng/one-api/issues/175) for detailed instructions.

If you encounter a blank page after deployment, refer to [#97](https://github.com/songquanpeng/one-api/issues/97) for possible solutions.

### Deployment on Third-Party Platforms

<details>

<summary><strong>Deploy on Sealos</strong></summary>

<div>

@@ -200,6 +179,7 @@ If you encounter a blank page after deployment, refer to [#97](https://github.co

[](https://cloud.sealos.io/?openapp=system-fastdeploy?templateName=one-api)

</div>

</details>

@@ -214,7 +194,7 @@ If you encounter a blank page after deployment, refer to [#97](https://github.co

1. First, fork the code.

2. Go to [Zeabur](https://zeabur.com?referralCode=songquanpeng), log in, and enter the console.

3. Create a new project. In Service -> Add Service, select Marketplace, and choose MySQL. Note down the connection parameters (username, password, address, and port).

4. Copy the connection parameters and run `` create database `one-api` `` to create the database.

4. Copy the connection parameters and run ```create database `one-api` ``` to create the database.

5. Then, in Service -> Add Service, select Git (authorization is required for the first use) and choose your forked repository.

6. Automatic deployment will start, but please cancel it for now. Go to the Variable tab, add a `PORT` with a value of `3000`, and then add a `SQL_DSN` with a value of `<username>:<password>@tcp(<addr>:<port>)/one-api`. Save the changes. Please note that if `SQL_DSN` is not set, data will not be persisted, and the data will be lost after redeployment.

7. Select Redeploy.

@@ -225,7 +205,6 @@ If you encounter a blank page after deployment, refer to [#97](https://github.co

</details>

## Configuration

The system is ready to use out of the box.

You can configure it by setting environment variables or command line parameters.

@@ -233,7 +212,6 @@ You can configure it by setting environment variables or command line parameters

After the system starts, log in as the `root` user to further configure the system.

## Usage

Add your API Key on the `Channels` page, and then add an access token on the `Tokens` page.

You can then use your access token to access One API. The usage is consistent with the [OpenAI API](https://platform.openai.com/docs/api-reference/introduction).

@@ -257,65 +235,59 @@ Note that the token needs to be created by an administrator to specify the chann

|

||||

If the channel ID is not provided, load balancing will be used to distribute the requests to multiple channels.

|

||||

|

||||

### Environment Variables

|

||||

|

||||

1. `REDIS_CONN_STRING`: When set, Redis will be used as the storage for request rate limiting instead of memory.

|

||||

- Example: `REDIS_CONN_STRING=redis://default:redispw@localhost:49153`

|

||||

+ Example: `REDIS_CONN_STRING=redis://default:redispw@localhost:49153`

|

||||

2. `SESSION_SECRET`: When set, a fixed session key will be used to ensure that cookies of logged-in users are still valid after the system restarts.

|

||||

- Example: `SESSION_SECRET=random_string`

|

||||

+ Example: `SESSION_SECRET=random_string`

|

||||

3. `SQL_DSN`: When set, the specified database will be used instead of SQLite. Please use MySQL version 8.0.

|

||||

- Example: `SQL_DSN=root:123456@tcp(localhost:3306)/oneapi`

|

||||

+ Example: `SQL_DSN=root:123456@tcp(localhost:3306)/oneapi`

|

||||

4. `FRONTEND_BASE_URL`: When set, the specified frontend address will be used instead of the backend address.

|

||||

- Example: `FRONTEND_BASE_URL=https://openai.justsong.cn`

|

||||

+ Example: `FRONTEND_BASE_URL=https://openai.justsong.cn`

|

||||

5. `SYNC_FREQUENCY`: When set, the system will periodically sync configurations from the database, with the unit in seconds. If not set, no sync will happen.

|

||||

- Example: `SYNC_FREQUENCY=60`

|

||||

+ Example: `SYNC_FREQUENCY=60`

|

||||

6. `NODE_TYPE`: When set, specifies the node type. Valid values are `master` and `slave`. If not set, it defaults to `master`.

|

||||

- Example: `NODE_TYPE=slave`

|

||||

+ Example: `NODE_TYPE=slave`

|

||||

7. `CHANNEL_UPDATE_FREQUENCY`: When set, it periodically updates the channel balances, with the unit in minutes. If not set, no update will happen.

|

||||

- Example: `CHANNEL_UPDATE_FREQUENCY=1440`

|

||||

+ Example: `CHANNEL_UPDATE_FREQUENCY=1440`

|

||||

8. `CHANNEL_TEST_FREQUENCY`: When set, it periodically tests the channels, with the unit in minutes. If not set, no test will happen.

|

||||

- Example: `CHANNEL_TEST_FREQUENCY=1440`

|

||||

+ Example: `CHANNEL_TEST_FREQUENCY=1440`

|

||||

9. `POLLING_INTERVAL`: The time interval (in seconds) between requests when updating channel balances and testing channel availability. Default is no interval.

|

||||

- Example: `POLLING_INTERVAL=5`

|

||||

+ Example: `POLLING_INTERVAL=5`

|

||||

|

||||

### Command Line Parameters

1. `--port <port_number>`: Specifies the port number on which the server listens. Defaults to `3000`.

- Example: `--port 3000`

+ Example: `--port 3000`

2. `--log-dir <log_dir>`: Specifies the log directory. If not set, the logs will not be saved.

- Example: `--log-dir ./logs`

+ Example: `--log-dir ./logs`

3. `--version`: Prints the system version number and exits.

4. `--help`: Displays the command usage help and parameter descriptions.
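The `--port` and `--log-dir` flags above can be combined in a small launcher. This is a sketch under assumptions: `start_one_api` and the `ONE_API_BIN` variable are hypothetical names, the binary is assumed to be `./one-api` in the current directory (as produced by a source build), and the log directory is created up front with `mkdir -p` in case the server does not create it itself.

```shell
#!/bin/sh
# start_one_api [port] [log_dir]
# Hypothetical wrapper around the --port and --log-dir flags described above.
# ONE_API_BIN overrides the binary path (default: ./one-api).
start_one_api() {
  port=${1:-3000}        # same default as --port
  log_dir=${2:-./logs}
  # Make sure the log directory exists before the server starts.
  mkdir -p "$log_dir" || return 1
  "${ONE_API_BIN:-./one-api}" --port "$port" --log-dir "$log_dir"
}

# Example:
#   start_one_api 3000 ./logs
```

Note that this wrapper always passes `--log-dir`, so logs are always saved; drop that flag if you prefer the default of not saving them.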

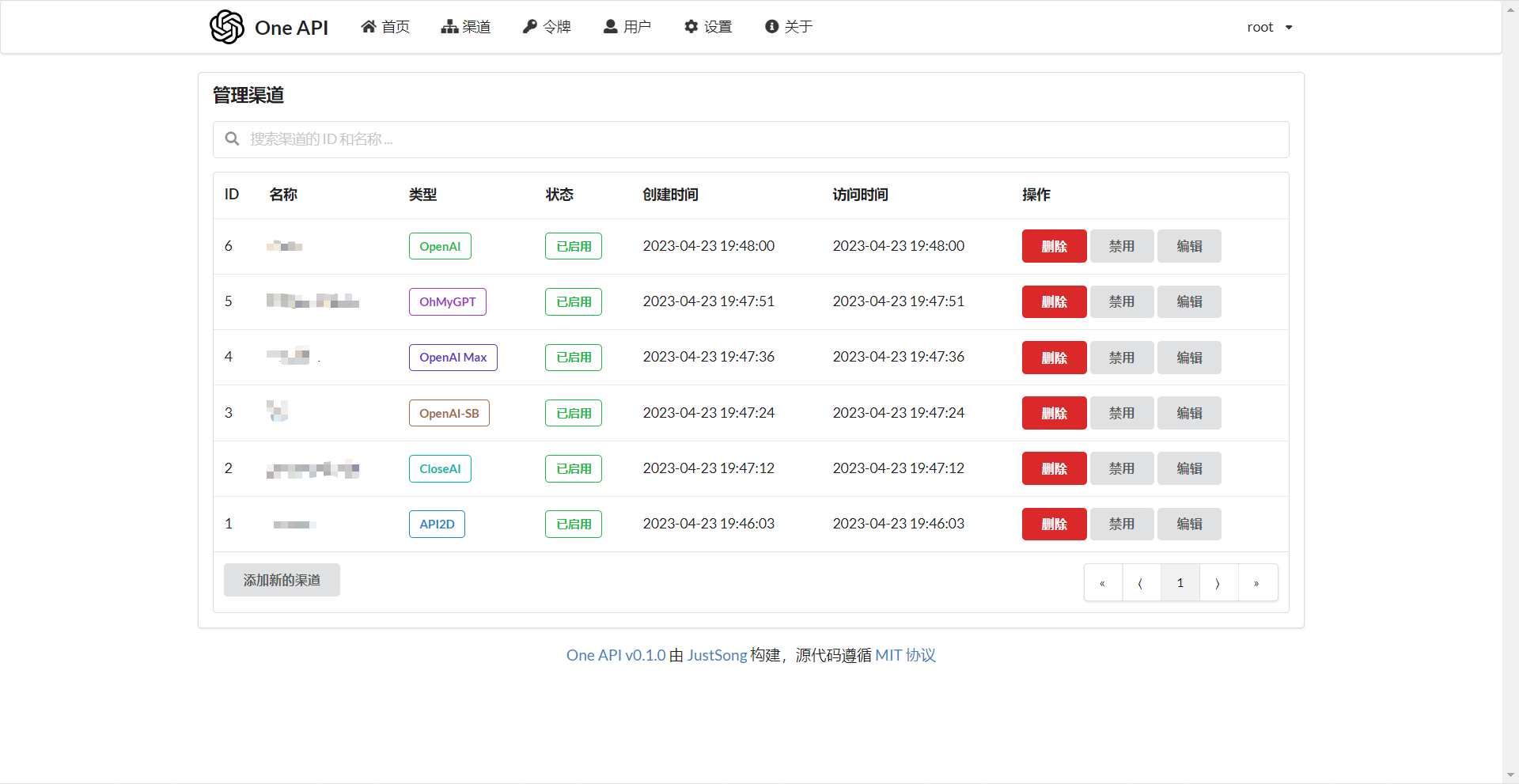

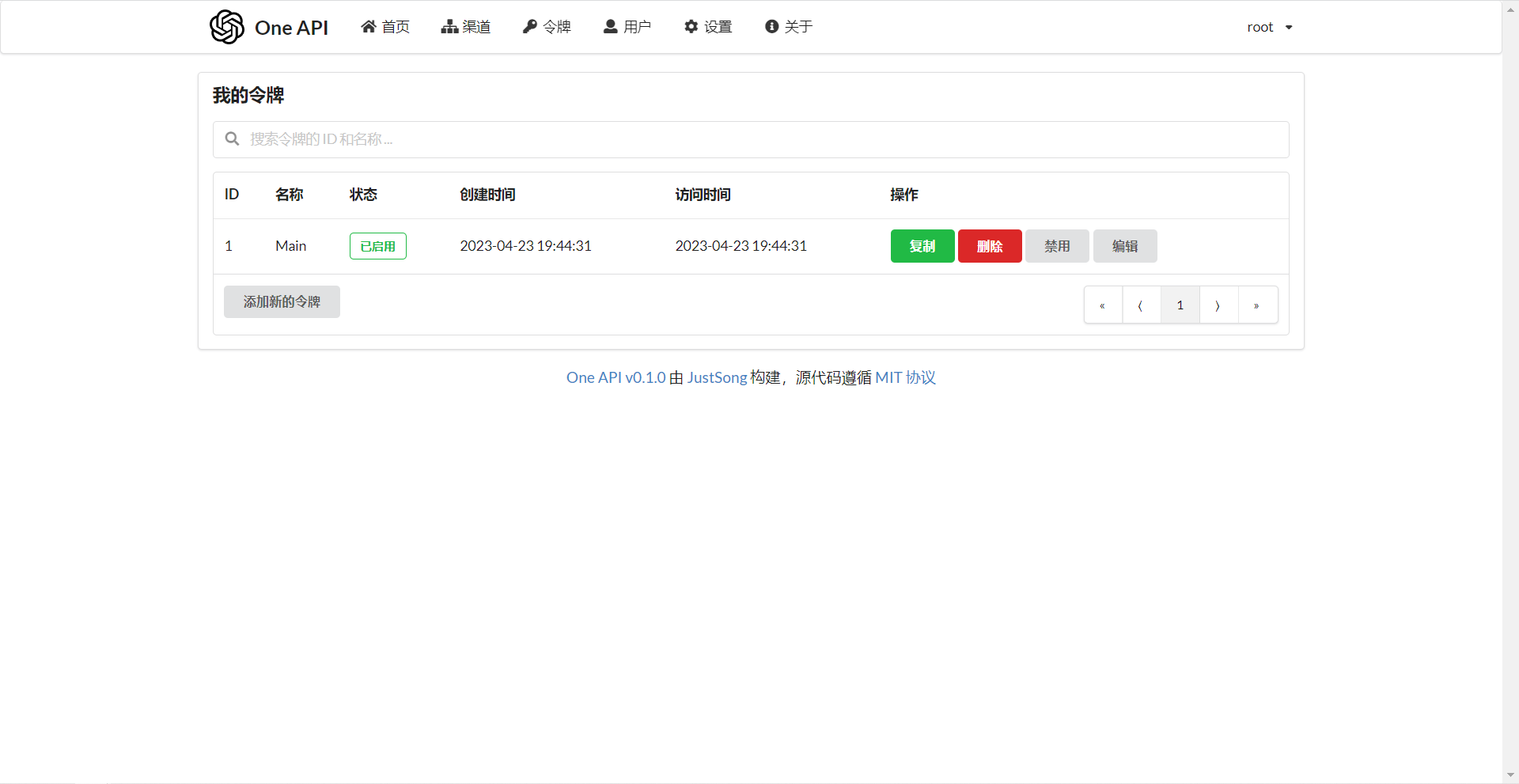

## Screenshots

|

||||

|

||||

|

||||

|

||||

|

||||

## FAQ

|

||||

|

||||

1. What is quota? How is it calculated? Does One API have quota calculation issues?

|

||||

- Quota = Group multiplier _ Model multiplier _ (number of prompt tokens + number of completion tokens \* completion multiplier)

|

||||

- The completion multiplier is fixed at 1.33 for GPT3.5 and 2 for GPT4, consistent with the official definition.

|

||||

- If it is not a stream mode, the official API will return the total number of tokens consumed. However, please note that the consumption multipliers for prompts and completions are different.

|

||||

+ Quota = Group multiplier * Model multiplier * (number of prompt tokens + number of completion tokens * completion multiplier)

|

||||

+ The completion multiplier is fixed at 1.33 for GPT3.5 and 2 for GPT4, consistent with the official definition.

|

||||

+ If it is not a stream mode, the official API will return the total number of tokens consumed. However, please note that the consumption multipliers for prompts and completions are different.

|

||||
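The quota formula above can be checked by hand with a few lines of shell. This sketch uses `awk` for the floating-point arithmetic; the function name `quota` and the multipliers in the example are illustrative assumptions, not One API's configured defaults.

```shell
#!/bin/sh
# quota <group_ratio> <model_ratio> <prompt_tokens> <completion_tokens> <completion_ratio>
# Computes: group_ratio * model_ratio * (prompt_tokens + completion_tokens * completion_ratio)
quota() {
  awk -v g="$1" -v m="$2" -v p="$3" -v c="$4" -v r="$5" \
    'BEGIN { printf "%g\n", g * m * (p + c * r) }'
}

# Example: group ratio 1, model ratio 1, 100 prompt tokens,
# 50 completion tokens, completion ratio 2 (the GPT4 value above):
#   quota 1 1 100 50 2   # prints 200
```

`%g` prints at most six significant digits, so very large results switch to exponent notation; use `%.2f` instead if you need exact figures for big token counts.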

2. Why does it prompt "insufficient quota" even though my account balance is sufficient?

- Please check if your token quota is sufficient. It is separate from the account balance.

- The token quota is used to set the maximum usage and can be freely set by the user.

+ Please check if your token quota is sufficient. It is separate from the account balance.

+ The token quota is used to set the maximum usage and can be freely set by the user.

3. It says "No available channels" when trying to use a channel. What should I do?

- Please check the user and channel group settings.

- Also check the channel model settings.

+ Please check the user and channel group settings.

+ Also check the channel model settings.

4. Channel testing reports an error: "invalid character '<' looking for beginning of value"

- This error occurs when the returned value is not valid JSON but an HTML page.

- Most likely, the IP of your deployment site or the node of the proxy has been blocked by CloudFlare.

+ This error occurs when the returned value is not valid JSON but an HTML page.

+ Most likely, the IP of your deployment site or the node of the proxy has been blocked by CloudFlare.
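One quick way to confirm the diagnosis in item 4 is to check whether the upstream response body starts with `<` rather than `{`. The function below is a minimal sketch of that check (`looks_like_html` is a hypothetical name); the `curl` line in the comment assumes an OpenAI-compatible upstream at `$UPSTREAM` and a key in `$API_KEY`, both placeholders.

```shell
#!/bin/sh
# looks_like_html: reads a response body on stdin and succeeds (exit 0)
# if its first non-whitespace character is '<', i.e. it is probably an
# HTML page (for example a block page) rather than JSON.
looks_like_html() {
  first=$(tr -d ' \t\r\n' | cut -c1)
  [ "$first" = "<" ]
}

# Example (UPSTREAM and API_KEY are placeholders):
#   curl -s "$UPSTREAM/v1/models" -H "Authorization: Bearer $API_KEY" \
#     | looks_like_html && echo "Got HTML instead of JSON; the IP may be blocked"
```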

5. ChatGPT Next Web reports an error: "Failed to fetch"

- Do not set `BASE_URL` during deployment.

- Double-check that your interface address and API Key are correct.

+ Do not set `BASE_URL` during deployment.

+ Double-check that your interface address and API Key are correct.

## Related Projects

[FastGPT](https://github.com/labring/FastGPT): Knowledge question answering system based on the LLM

## Note

This project is an open-source project. Please use it in compliance with OpenAI's [Terms of Use](https://openai.com/policies/terms-of-use) and **applicable laws and regulations**. It must not be used for illegal purposes.

This project is released under the MIT license. Based on this, attribution and a link to this project must be included at the bottom of the page.

140

README.ja.md

@@ -3,45 +3,35 @@

</p>

<p align="center">

<a href="https://github.com/songquanpeng/one-api"><img src="https://raw.githubusercontent.com/songquanpeng/one-api/main/web/public/logo.png" width="150" height="150" alt="one-api logo"></a>

<a href="https://github.com/songquanpeng/one-api"><img src="https://raw.githubusercontent.com/songquanpeng/one-api/main/web/default/public/logo.png" width="150" height="150" alt="one-api logo"></a>

</p>

<div align="center">

# One API

_This project is based on [one-api](https://github.com/songquanpeng/one-api). It separates and modularizes the original project's module code and changes the frontend interface. This project is also released under the MIT license._

<p align="center">

<a href="https://raw.githubusercontent.com/MartialBE/one-api/main/LICENSE">

<img src="https://img.shields.io/github/license/MartialBE/one-api?color=brightgreen" alt="license">

</a>

<a href="https://github.com/MartialBE/one-api/releases/latest">

<img src="https://img.shields.io/github/v/release/MartialBE/one-api?color=brightgreen&include_prereleases" alt="release">

</a>

<a href="https://github.com/users/MartialBE/packages/container/package/one-api">

<img src="https://img.shields.io/badge/docker-ghcr.io-blue" alt="docker">

</a>

<a href="https://goreportcard.com/report/github.com/MartialBE/one-api">

<img src="https://goreportcard.com/badge/github.com/MartialBE/one-api" alt="GoReportCard">

</a>

</p>

**Do not mix this with the original version: the channel IDs differ, which can corrupt your data**

## Screenshots

_The following is the original project's description:_

---

_✨ Access all LLMs through the standard OpenAI API format; easy to deploy and use ✨_

</div>

<p align="center">

<a href="https://raw.githubusercontent.com/songquanpeng/one-api/main/LICENSE">

<img src="https://img.shields.io/github/license/songquanpeng/one-api?color=brightgreen" alt="license">

</a>

<a href="https://github.com/songquanpeng/one-api/releases/latest">

<img src="https://img.shields.io/github/v/release/songquanpeng/one-api?color=brightgreen&include_prereleases" alt="release">

</a>

<a href="https://hub.docker.com/repository/docker/justsong/one-api">

<img src="https://img.shields.io/docker/pulls/justsong/one-api?color=brightgreen" alt="docker pull">

</a>

<a href="https://github.com/songquanpeng/one-api/releases/latest">

<img src="https://img.shields.io/github/downloads/songquanpeng/one-api/total?color=brightgreen&include_prereleases" alt="release">

</a>

<a href="https://goreportcard.com/report/github.com/songquanpeng/one-api">

<img src="https://goreportcard.com/badge/github.com/songquanpeng/one-api" alt="GoReportCard">

</a>

</p>

<p align="center">

<a href="#deployment">Deployment Tutorial</a>

·

@@ -67,14 +57,13 @@ _✨ 標準的な OpenAI API フォーマットを通じてすべての LLM に

> **Note**: The latest image pulled from Docker may be an `alpha` release. If you need stability, specify the version manually.

## Features

1. Supports multiple large models:

- [x] [OpenAI ChatGPT series models](https://platform.openai.com/docs/guides/gpt/chat-completions-api) (supports [Azure OpenAI API](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference))

- [x] [Anthropic Claude series models](https://anthropic.com)

- [x] [Google PaLM2/Gemini series models](https://developers.generativeai.google)

- [x] [Baidu Wenxin Yiyan series models](https://cloud.baidu.com/doc/WENXINWORKSHOP/index.html)

- [x] [Alibaba Tongyi Qianwen series models](https://help.aliyun.com/document_detail/2400395.html)

- [x] [Zhipu ChatGLM series models](https://bigmodel.cn)

+ [x] [OpenAI ChatGPT series models](https://platform.openai.com/docs/guides/gpt/chat-completions-api) (supports [Azure OpenAI API](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference))

+ [x] [Anthropic Claude series models](https://anthropic.com)

+ [x] [Google PaLM2/Gemini series models](https://developers.generativeai.google)

+ [x] [Baidu Wenxin Yiyan series models](https://cloud.baidu.com/doc/WENXINWORKSHOP/index.html)

+ [x] [Alibaba Tongyi Qianwen series models](https://help.aliyun.com/document_detail/2400395.html)

+ [x] [Zhipu ChatGLM series models](https://bigmodel.cn)

2. Supports access to multiple channels through **load balancing**.

3. Supports **stream mode**, enabling a typewriter effect through streaming.

4. Supports **multi-machine deployment**. [See here](#multi-machine-deployment) for details.

@@ -93,15 +82,13 @@ _✨ 標準的な OpenAI API フォーマットを通じてすべての LLM に

15. Supports management API access through system access tokens.

16. Supports user verification with Cloudflare Turnstile.

17. Supports user management and multiple user login/registration methods:

- Email login/registration and password reset via email.

- [GitHub OAuth](https://github.com/settings/applications/new).

- WeChat Official Account authorization (requires additionally deploying [WeChat Server](https://github.com/songquanpeng/wechat-server)).

+ Email login/registration and password reset via email.

+ [GitHub OAuth](https://github.com/settings/applications/new).

+ WeChat Official Account authorization (requires additionally deploying [WeChat Server](https://github.com/songquanpeng/wechat-server)).

18. Other major model APIs will be supported and wrapped as soon as they become available.

## Deployment

### Docker Deployment

Deployment command: `docker run --name one-api -d --restart always -p 3000:3000 -e TZ=Asia/Shanghai -v /home/ubuntu/data/one-api:/data justsong/one-api-en`.

Update command: `docker run --rm -v /var/run/docker.sock:/var/run/docker.sock containrrr/watchtower -cR`.

@@ -110,8 +97,7 @@ _✨ 標準的な OpenAI API フォーマットを通じてすべての LLM に

Data is stored in the host's `/home/ubuntu/data/one-api` directory. Make sure this directory exists and is writable, or change it to a suitable directory.

Nginx reference configuration:

Nginx reference configuration:

```

server{

server_name openai.justsong.cn; # change the domain name as appropriate

@@ -130,7 +116,6 @@ server{

```

Next, configure HTTPS with Let's Encrypt certbot:

```bash

# Install certbot on Ubuntu:

sudo snap install --classic certbot

@@ -145,23 +130,20 @@ sudo service nginx restart

The initial account's username is `root` and the password is `123456`.

### Manual Deployment

1. Download the executable from [GitHub Releases](https://github.com/songquanpeng/one-api/releases/latest), or compile it from source:

```shell

git clone https://github.com/songquanpeng/one-api.git

# Build the frontend

cd one-api/web

cd one-api/web/default

npm install

npm run build

# Build the backend

cd ..

cd ../..

go mod download

go build -ldflags "-s -w" -o one-api

```

2. Run:

```shell

chmod u+x one-api

@@ -172,7 +154,6 @@ sudo service nginx restart

For a more detailed deployment tutorial, see [this page](https://iamazing.cn/page/how-to-deploy-a-website).

### Multi-machine Deployment

1. Set the same `SESSION_SECRET` on all servers.

2. Set `SQL_DSN` to use MySQL instead of SQLite. All servers connect to the same database.

3. Set `NODE_TYPE` to `slave` on every node other than the master node.
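The three steps above can be scripted. The sketch below generates one random `SESSION_SECRET` to share across every server and writes a per-node env file suitable for `docker run --env-file`. The function names, file names and the DSN value are hypothetical, and it assumes `/dev/urandom` and `od` are available on the host.

```shell
#!/bin/sh
# gen_session_secret: print 32 random bytes as 64 lowercase hex characters.
gen_session_secret() {
  od -An -N32 -tx1 /dev/urandom | tr -d ' \n'
}

# write_node_env <file> <master|slave> <session_secret> <sql_dsn>
# Writes the variables every node needs; only NODE_TYPE differs between nodes.
write_node_env() {
  case $2 in
    master|slave) ;;
    *) echo "NODE_TYPE must be master or slave, got '$2'" >&2; return 1 ;;
  esac
  {
    printf 'SESSION_SECRET=%s\n' "$3"
    printf 'SQL_DSN=%s\n' "$4"
    printf 'NODE_TYPE=%s\n' "$2"
  } > "$1"
}

# Example:
#   secret=$(gen_session_secret)
#   dsn='root:123456@tcp(db.example.internal:3306)/oneapi'
#   write_node_env master.env master "$secret" "$dsn"
#   write_node_env slave.env  slave  "$secret" "$dsn"
#   docker run -d -p 3000:3000 --env-file slave.env justsong/one-api
```

Generating the secret once and reusing it is the point: if each node generated its own, a session cookie issued by one node would be rejected by the others.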

@@ -184,13 +165,11 @@ sudo service nginx restart

Please refer to the [environment variables](#environment-variables) section for details on using environment variables.

### Deployment on a Control Panel (e.g., Baota)

See [#175](https://github.com/songquanpeng/one-api/issues/175) for detailed instructions.

If you see a blank page after deployment, see [#97](https://github.com/songquanpeng/one-api/issues/97).

### Deployment on Third-Party Platforms

<details>

<summary><strong>Deploy on Sealos</strong></summary>

<div>

@@ -201,6 +180,7 @@ Please refer to the [environment variables](#environment-variables) section for

[](https://cloud.sealos.io/?openapp=system-fastdeploy?templateName=one-api)

</div>

</details>

@@ -214,8 +194,8 @@ Please refer to the [environment variables](#environment-variables) section for

1. First, fork the code.

2. Visit [Zeabur](https://zeabur.com?referralCode=songquanpeng), log in, and open the console.

3. Create a new project. In Service -> Add Service, choose Marketplace and select MySQL. Note down the connection parameters (username, password, address, and port).

4. Copy the connection parameters and run `` create database `one-api` `` to create the database.

3. Create a new project. In Service -> Add Service, choose Marketplace and select MySQL. Note down the connection parameters (username, password, address, and port).

4. Copy the connection parameters and run ```create database `one-api` ``` to create the database.

5. Then, in Service -> Add Service, choose Git (authorization is required on first use) and select your forked repository.

6. Automatic deployment will start; cancel it for now. In the Variable tab, add `PORT` with the value `3000` and `SQL_DSN` with the value `<username>:<password>@tcp(<addr>:<port>)/one-api`, then save the changes. Note that if `SQL_DSN` is not set, data is not persisted and will be lost after redeployment.

7. Select Redeploy.

@@ -226,7 +206,6 @@ Please refer to the [environment variables](#environment-variables) section for

</details>

## Configuration

The system works out of the box.

You can configure the system by setting environment variables or command-line parameters.

@@ -234,7 +213,6 @@ Please refer to the [environment variables](#environment-variables) section for

After the system starts, log in as the `root` user to configure it further.

## Usage

Add API Keys on the `Channels` page, and add access tokens on the `Tokens` page.

You can then access One API with an access token. Usage is the same as the [OpenAI API](https://platform.openai.com/docs/api-reference/introduction).
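Since access works the same way as the OpenAI API, a request is an ordinary Chat Completions call with the One API access token as the bearer token. The sketch below builds a minimal request body in plain shell. `json_escape` and `chat_payload` are hypothetical helpers; the escaping handles only backslashes and double quotes (not newlines or other control characters), and the `curl` line assumes One API listens on the default port 3000 with a token in `$ONE_API_TOKEN`.

```shell
#!/bin/sh
# json_escape: escape backslashes and double quotes for use inside a JSON string.
# Newlines and other control characters are NOT handled by this sketch.
json_escape() {
  printf '%s' "$1" | sed 's/\\/\\\\/g; s/"/\\"/g'
}

# chat_payload <model> <message>: print a minimal Chat Completions request body.
chat_payload() {
  printf '{"model":"%s","messages":[{"role":"user","content":"%s"}]}\n' \
    "$(json_escape "$1")" "$(json_escape "$2")"
}

# Example request against a local One API instance:
#   curl http://localhost:3000/v1/chat/completions \
#     -H "Content-Type: application/json" \
#     -H "Authorization: Bearer $ONE_API_TOKEN" \
#     -d "$(chat_payload gpt-3.5-turbo 'Hello')"
```

For anything beyond single-line prompts, a real JSON tool such as `jq` is a safer way to build the body.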

@@ -258,65 +236,59 @@ graph LR

If no channel ID is specified, requests are distributed across multiple channels by load balancing.

### Environment Variables

1. `REDIS_CONN_STRING`: When set, Redis is used instead of memory as the storage for request rate limiting.

- Example: `REDIS_CONN_STRING=redis://default:redispw@localhost:49153`

+ Example: `REDIS_CONN_STRING=redis://default:redispw@localhost:49153`

2. `SESSION_SECRET`: When set, a fixed session key is used, which keeps logged-in users' cookies valid after the system restarts.

- Example: `SESSION_SECRET=random_string`

+ Example: `SESSION_SECRET=random_string`

3. `SQL_DSN`: When set, the specified database is used instead of SQLite. Please use MySQL version 8.0.

- Example: `SQL_DSN=root:123456@tcp(localhost:3306)/oneapi`

+ Example: `SQL_DSN=root:123456@tcp(localhost:3306)/oneapi`

4. `FRONTEND_BASE_URL`: When set, the specified frontend address is used instead of the backend address.

- Example: `FRONTEND_BASE_URL=https://openai.justsong.cn`

+ Example: `FRONTEND_BASE_URL=https://openai.justsong.cn`

5. `SYNC_FREQUENCY`: When set, the system periodically syncs the configuration from the database at this interval, in seconds. If not set, no sync happens.

- Example: `SYNC_FREQUENCY=60`

+ Example: `SYNC_FREQUENCY=60`

6. `NODE_TYPE`: When set, specifies the node type. Valid values are `master` and `slave`. If not set, it defaults to `master`.

- Example: `NODE_TYPE=slave`

+ Example: `NODE_TYPE=slave`

7. `CHANNEL_UPDATE_FREQUENCY`: When set, channel balances are updated periodically at this interval, in minutes. If not set, no update happens.

- Example: `CHANNEL_UPDATE_FREQUENCY=1440`

+ Example: `CHANNEL_UPDATE_FREQUENCY=1440`

8. `CHANNEL_TEST_FREQUENCY`: When set, channels are tested periodically at this interval, in minutes. If not set, no test happens.

- Example: `CHANNEL_TEST_FREQUENCY=1440`

+ Example: `CHANNEL_TEST_FREQUENCY=1440`

9. `POLLING_INTERVAL`: The interval (in seconds) between requests when updating channel balances and testing channel availability. The default is no interval.

- Example: `POLLING_INTERVAL=5`

+ Example: `POLLING_INTERVAL=5`
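Because these interval variables use different units (seconds for `SYNC_FREQUENCY` and `POLLING_INTERVAL`, minutes for the two channel variables, per the descriptions above), a quick pre-start check can catch values with a unit suffix such as `1m` or `60s`. The sketch below assumes each variable should be unset, empty, or a plain non-negative integer; `check_interval_vars` is a hypothetical helper, not something One API ships.

```shell
#!/bin/sh
# check_interval_vars: succeed only if each interval variable is unset,
# empty, or a plain non-negative integer (no unit suffix).
check_interval_vars() {
  status=0
  for name in SYNC_FREQUENCY CHANNEL_UPDATE_FREQUENCY CHANNEL_TEST_FREQUENCY POLLING_INTERVAL; do
    eval "value=\${$name-}"
    case $value in
      '') ;;  # unset or empty: skipped by this check
      *[!0-9]*)
        echo "$name must be a plain integer, got '$value'" >&2
        status=1
        ;;
    esac
  done
  return "$status"
}

# Example:
#   export SYNC_FREQUENCY=60 CHANNEL_TEST_FREQUENCY=1440
#   check_interval_vars && ./one-api --port 3000
```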

### Command Line Parameters

1. `--port <port_number>`: Specifies the port number the server listens on. Defaults to `3000`.

- Example: `--port 3000`

+ Example: `--port 3000`

2. `--log-dir <log_dir>`: Specifies the log directory. If not set, logs are not saved.

- Example: `--log-dir ./logs`

+ Example: `--log-dir ./logs`

3. `--version`: Prints the system version number and exits.

4. `--help`: Displays command usage help and parameter descriptions.

## Screenshots

## FAQ

1. What is quota? How is it calculated? Does One API have quota calculation issues?

- Quota = Group multiplier _ Model multiplier _ (number of prompt tokens + number of completion tokens \* completion multiplier)

- The completion multiplier is fixed at 1.33 for GPT3.5 and 2 for GPT4, consistent with the official definition.

- In non-stream mode, the official API returns the total number of tokens consumed. Note, however, that prompts and completions are charged at different multipliers.

+ Quota = Group multiplier * Model multiplier * (number of prompt tokens + number of completion tokens * completion multiplier)

+ The completion multiplier is fixed at 1.33 for GPT3.5 and 2 for GPT4, consistent with the official definition.

+ In non-stream mode, the official API returns the total number of tokens consumed. Note, however, that prompts and completions are charged at different multipliers.

2. Why does it say "insufficient quota" even though my account balance is sufficient?

- Check whether your token quota is sufficient. The token quota is separate from the account balance.

- The token quota sets the maximum usage and can be set freely by the user.

+ Check whether your token quota is sufficient. The token quota is separate from the account balance.

+ The token quota sets the maximum usage and can be set freely by the user.

3. It says "No available channels" when I try to use a channel. What should I do?

- Check the user and channel group settings.

- Also check the channel model settings.

+ Check the user and channel group settings.

+ Also check the channel model settings.

4. Channel testing reports an error: "invalid character '<' looking for beginning of value"

- This error occurs when the returned value is an HTML page rather than valid JSON.

- Most likely, the IP of your deployment site or your proxy node has been blocked by CloudFlare.

+ This error occurs when the returned value is an HTML page rather than valid JSON.

+ Most likely, the IP of your deployment site or your proxy node has been blocked by CloudFlare.

5. ChatGPT Next Web reports an error: "Failed to fetch"

- Do not set `BASE_URL` during deployment.

- Double-check that your interface address and API Key are correct.

+ Do not set `BASE_URL` during deployment.

+ Double-check that your interface address and API Key are correct.

## Related Projects

[FastGPT](https://github.com/labring/FastGPT): A knowledge-base question answering system built on LLMs

## Note

This project is open source. Please use it in compliance with OpenAI's [Terms of Use](https://openai.com/policies/terms-of-use) and **applicable laws and regulations**. Do not use it for illegal purposes.

This project is released under the MIT license. On that basis, attribution and a link to this project must be included at the bottom of the page.

231

README.md

@@ -2,46 +2,37 @@

<strong>中文</strong> | <a href="./README.en.md">English</a> | <a href="./README.ja.md">日本語</a>

</p>

<p align="center">

<a href="https://github.com/MartialBE/one-api"><img src="https://raw.githubusercontent.com/MartialBE/one-api/main/web/src/assets/images/logo.svg" width="150" height="150" alt="one-api logo"></a>

<a href="https://github.com/songquanpeng/one-api"><img src="https://raw.githubusercontent.com/songquanpeng/one-api/main/web/default/public/logo.png" width="150" height="150" alt="one-api logo"></a>

</p>

<div align="center">

# One API

_This project is a derivative of [one-api](https://github.com/songquanpeng/one-api). It mainly separates and modularizes the module code of the original project and changes the frontend interface. This project is also released under the MIT license._

<p align="center">

<a href="https://raw.githubusercontent.com/MartialBE/one-api/main/LICENSE">

<img src="https://img.shields.io/github/license/MartialBE/one-api?color=brightgreen" alt="license">

</a>

<a href="https://github.com/MartialBE/one-api/releases/latest">

<img src="https://img.shields.io/github/v/release/MartialBE/one-api?color=brightgreen&include_prereleases" alt="release">

</a>

<a href="https://github.com/users/MartialBE/packages/container/package/one-api">

<img src="https://img.shields.io/badge/docker-ghcr.io-blue" alt="docker">

</a>

<a href="https://goreportcard.com/report/github.com/MartialBE/one-api">

<img src="https://goreportcard.com/badge/github.com/MartialBE/one-api" alt="GoReportCard">

</a>

</p>

**Do not mix this with the original version: the channel IDs differ, which will corrupt your data**

# Screenshots

_The following is the original project's description:_

---

_✨ Access all LLMs through the standard OpenAI API format, out of the box ✨_

</div>

<p align="center">

<a href="https://raw.githubusercontent.com/songquanpeng/one-api/main/LICENSE">

<img src="https://img.shields.io/github/license/songquanpeng/one-api?color=brightgreen" alt="license">

</a>

<a href="https://github.com/songquanpeng/one-api/releases/latest">

<img src="https://img.shields.io/github/v/release/songquanpeng/one-api?color=brightgreen&include_prereleases" alt="release">

</a>

<a href="https://hub.docker.com/repository/docker/justsong/one-api">

<img src="https://img.shields.io/docker/pulls/justsong/one-api?color=brightgreen" alt="docker pull">

</a>

<a href="https://github.com/songquanpeng/one-api/releases/latest">

<img src="https://img.shields.io/github/downloads/songquanpeng/one-api/total?color=brightgreen&include_prereleases" alt="release">

</a>

<a href="https://goreportcard.com/report/github.com/songquanpeng/one-api">

<img src="https://goreportcard.com/badge/github.com/songquanpeng/one-api" alt="GoReportCard">

</a>

</p>

<p align="center">

<a href="https://github.com/songquanpeng/one-api#部署">Deployment Tutorial</a>

·

@@ -62,7 +53,7 @@ _✨ 通过标准的 OpenAI API 格式访问所有的大模型,开箱即用

> [!NOTE]

> This project is open source. Users must comply with OpenAI's [Terms of Use](https://openai.com/policies/terms-of-use) and **laws and regulations**, and must not use it for illegal purposes.

>

>

> In accordance with the [Interim Measures for the Management of Generative Artificial Intelligence Services](http://www.cac.gov.cn/2023-07/13/c_1690898327029107.htm), do not provide any unregistered generative AI services to the public in China.

> [!WARNING]

@@ -72,17 +63,21 @@ _✨ 通过标准的 OpenAI API 格式访问所有的大模型,开箱即用

> After logging in as the root user for the first time, be sure to change the default password `123456`!

## Features

1. Supports multiple large models:

- [x] [OpenAI ChatGPT series models](https://platform.openai.com/docs/guides/gpt/chat-completions-api) (supports [Azure OpenAI API](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference))

- [x] [Anthropic Claude series models](https://anthropic.com)

- [x] [Google PaLM2/Gemini series models](https://developers.generativeai.google)

- [x] [Baidu Wenxin Yiyan series models](https://cloud.baidu.com/doc/WENXINWORKSHOP/index.html)

- [x] [Alibaba Tongyi Qianwen series models](https://help.aliyun.com/document_detail/2400395.html)

- [x] [iFlytek Spark cognitive large model](https://www.xfyun.cn/doc/spark/Web.html)

- [x] [Zhipu ChatGLM series models](https://bigmodel.cn)

- [x] [360 Zhinao](https://ai.360.cn)

- [x] [Tencent Hunyuan large model](https://cloud.tencent.com/document/product/1729)

+ [x] [OpenAI ChatGPT series models](https://platform.openai.com/docs/guides/gpt/chat-completions-api) (supports [Azure OpenAI API](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference))

+ [x] [Anthropic Claude series models](https://anthropic.com)

+ [x] [Google PaLM2/Gemini series models](https://developers.generativeai.google)

+ [x] [Mistral series models](https://mistral.ai/)

+ [x] [Baidu Wenxin Yiyan series models](https://cloud.baidu.com/doc/WENXINWORKSHOP/index.html)

+ [x] [Alibaba Tongyi Qianwen series models](https://help.aliyun.com/document_detail/2400395.html)

+ [x] [iFlytek Spark cognitive large model](https://www.xfyun.cn/doc/spark/Web.html)

+ [x] [Zhipu ChatGLM series models](https://bigmodel.cn)

+ [x] [360 Zhinao](https://ai.360.cn)

+ [x] [Tencent Hunyuan large model](https://cloud.tencent.com/document/product/1729)

+ [x] [Moonshot AI](https://platform.moonshot.cn/)

+ [x] [Baichuan large model](https://platform.baichuan-ai.com)

+ [ ] [ByteDance Skylark large model](https://www.volcengine.com/product/ark) (WIP)

+ [x] [MINIMAX](https://api.minimax.chat/)

2. Supports configuring mirrors and many [third-party proxy services](https://iamazing.cn/page/openai-api-third-party-services).

3. Supports access to multiple channels through **load balancing**.

4. Supports **stream mode**, enabling a typewriter effect through streaming.

@@ -106,14 +101,13 @@ _✨ 通过标准的 OpenAI API 格式访问所有的大模型,开箱即用

20. Supports accessing the management API with a system access token (a bearer token that replaces the cookie; you can capture the traffic yourself to see how the API is used).

21. Supports user verification with Cloudflare Turnstile.

22. Supports user management and **multiple user login/registration methods**:

- Email login/registration (with a registration email whitelist) and password reset via email.

- [GitHub OAuth](https://github.com/settings/applications/new).

- WeChat Official Account authorization (requires additionally deploying [WeChat Server](https://github.com/songquanpeng/wechat-server)).

+ Email login/registration (with a registration email whitelist) and password reset via email.

+ [GitHub OAuth](https://github.com/settings/applications/new).

+ WeChat Official Account authorization (requires additionally deploying [WeChat Server](https://github.com/songquanpeng/wechat-server)).

23. Supports theme switching via the `THEME` environment variable (default: `default`). PRs adding more themes are welcome; see [here](./web/README.md) for details.

## Deployment

### Deployment with Docker

```shell

# Deployment command using SQLite:

docker run --name one-api -d --restart always -p 3000:3000 -e TZ=Asia/Shanghai -v /home/ubuntu/data/one-api:/data justsong/one-api

@@ -135,11 +129,10 @@ docker run --name one-api -d --restart always -p 3000:3000 -e SQL_DSN="root:1234

Update command: `docker run --rm -v /var/run/docker.sock:/var/run/docker.sock containrrr/watchtower -cR`

Nginx reference configuration:

```

server{

server_name openai.justsong.cn; # change this to your own domain name

location / {

client_max_body_size 64m;

proxy_http_version 1.1;

@@ -154,7 +147,6 @@ server{

```

Then configure HTTPS with Let's Encrypt's certbot:

```bash

# Install certbot on Ubuntu:

sudo snap install --classic certbot

@@ -168,6 +160,7 @@ sudo service nginx restart

The initial account's username is `root` and the password is `123456`.

### Deployment with Docker Compose

> Only the startup method differs; the parameter settings are the same. Refer to the Docker deployment section.

@@ -181,23 +174,20 @@ docker-compose ps

```

### Manual Deployment

1. Download the executable from [GitHub Releases](https://github.com/songquanpeng/one-api/releases/latest), or compile it from source:

```shell

git clone https://github.com/songquanpeng/one-api.git

# Build the frontend

cd one-api/web

cd one-api/web/default