Compare commits

21 commits between `v0.5.11-de` and `0.0.2`:

| SHA1 |
|---|
| 7419ca511e |
| ccd7a99b68 |
| 759423d69e |
| 04110d4b01 |
| d6b2131720 |
| 438daea433 |
| c6c070b8bd |
| 13b3bfee2a |
| 2b42b4f364 |
| f37e41eb1d |
| c144c64fff |
| 8956e2fd60 |
| 30187cebe8 |
| 00d3a78bef |
| a588241515 |
| 546f9e1db5 |
| 4908a9eddc |
| 15cdaee762 |
| 395ee121ed |
| 4cea6279ab |
| f50683e75f |

`.github/workflows/docker-image-amd64-en.yml` (new file, 49 changes) `@@ -0,0 +1,49 @@`

```yaml
name: Publish Docker image (amd64, English)

on:
  push:
    tags:
      - '*'
  workflow_dispatch:
    inputs:
      name:
        description: 'reason'
        required: false
jobs:
  push_to_registries:
    name: Push Docker image to multiple registries
    runs-on: ubuntu-latest
    permissions:
      packages: write
      contents: read
    steps:
      - name: Check out the repo
        uses: actions/checkout@v3

      - name: Save version info
        run: |
          git describe --tags > VERSION

      - name: Translate
        run: |
          python ./i18n/translate.py --repository_path . --json_file_path ./i18n/en.json
      - name: Log in to Docker Hub
        uses: docker/login-action@v2
        with:
          username: ${{ secrets.DOCKERHUB_USERNAME }}
          password: ${{ secrets.DOCKERHUB_TOKEN }}

      - name: Extract metadata (tags, labels) for Docker
        id: meta
        uses: docker/metadata-action@v4
        with:
          images: |
            ckt1031/one-api-en

      - name: Build and push Docker images
        uses: docker/build-push-action@v3
        with:
          context: .
          push: true
          tags: ${{ steps.meta.outputs.tags }}
          labels: ${{ steps.meta.outputs.labels }}
```

`.github/workflows/docker-image-amd64.yml` (22 changes)

```
@@ -1,28 +1,34 @@
name: Publish Docker image (amd64)

on:
  push:
    tags:
      - '*'
  workflow_dispatch:
    inputs:
      name:
        description: 'reason'
        required: false

jobs:
  build-and-push-image:
    name: Push Docker image to GitHub registry
  push_to_registries:
    name: Push Docker image to multiple registries
    runs-on: ubuntu-latest
    permissions:
      packages: write
      contents: read

    steps:

      - name: Check out the repo
        uses: actions/checkout@v3

      - name: Save version info
        run: |
          git describe --tags > VERSION
          git describe --tags > VERSION

      - name: Log in to Docker Hub
        uses: docker/login-action@v2
        with:
          username: ${{ secrets.DOCKERHUB_USERNAME }}
          password: ${{ secrets.DOCKERHUB_TOKEN }}

      - name: Log in to the Container registry
        uses: docker/login-action@v2
@@ -35,7 +41,9 @@ jobs:
        id: meta
        uses: docker/metadata-action@v4
        with:
          images: ghcr.io/${{ github.repository }}
          images: |
            ckt1031/one-api
            ghcr.io/${{ github.repository }}

      - name: Build and push Docker images
        uses: docker/build-push-action@v3
```

`.github/workflows/docker-image-arm64.yml` (new file, 62 changes) `@@ -0,0 +1,62 @@`

```yaml
name: Publish Docker image (arm64)

on:
  push:
    tags:
      - '*'
      - '!*-alpha*'
  workflow_dispatch:
    inputs:
      name:
        description: 'reason'
        required: false
jobs:
  push_to_registries:
    name: Push Docker image to multiple registries
    runs-on: ubuntu-latest
    permissions:
      packages: write
      contents: read
    steps:
      - name: Check out the repo
        uses: actions/checkout@v3

      - name: Save version info
        run: |
          git describe --tags > VERSION

      - name: Set up QEMU
        uses: docker/setup-qemu-action@v2

      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v2

      - name: Log in to Docker Hub
        uses: docker/login-action@v2
        with:
          username: ${{ secrets.DOCKERHUB_USERNAME }}
          password: ${{ secrets.DOCKERHUB_TOKEN }}

      - name: Log in to the Container registry
        uses: docker/login-action@v2
        with:
          registry: ghcr.io
          username: ${{ github.actor }}
          password: ${{ secrets.GITHUB_TOKEN }}

      - name: Extract metadata (tags, labels) for Docker
        id: meta
        uses: docker/metadata-action@v4
        with:
          images: |
            ckt1031/one-api
            ghcr.io/${{ github.repository }}

      - name: Build and push Docker images
        uses: docker/build-push-action@v3
        with:
          context: .
          platforms: linux/amd64,linux/arm64
          push: true
          tags: ${{ steps.meta.outputs.tags }}
          labels: ${{ steps.meta.outputs.labels }}
```
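Both amd64 image workflows trigger on every tag (`'*'`), while the arm64 image workflow (and the release workflows) add `'!*-alpha*'` so alpha tags are skipped. A minimal Go sketch of how such an ordered include/exclude list is evaluated, assuming GitHub's documented rule that a later `!` pattern excludes a ref matched by an earlier positive pattern; `path.Match` only approximates GitHub's glob syntax, and `tagTriggers` is a hypothetical helper:

```go
package main

import (
	"fmt"
	"path"
	"strings"
)

// tagTriggers reports whether pushing tag would trigger a workflow whose
// on.push.tags list is patterns. Patterns are evaluated in order: a matching
// positive pattern includes the tag, and a matching "!" pattern excludes it.
// path.Match approximates GitHub's glob syntax: its '*' matches any run of
// characters except '/'.
func tagTriggers(tag string, patterns []string) bool {
	included := false
	for _, p := range patterns {
		negated := strings.HasPrefix(p, "!")
		ok, err := path.Match(strings.TrimPrefix(p, "!"), tag)
		if err != nil || !ok {
			continue // malformed or non-matching patterns change nothing
		}
		included = !negated
	}
	return included
}

func main() {
	amd64 := []string{"*"}                 // docker-image-amd64*.yml
	filtered := []string{"*", "!*-alpha*"} // arm64 image and release workflows
	for _, tag := range []string{"v0.5.11", "v0.5.11-alpha.2"} {
		fmt.Printf("%-16s amd64=%v arm64/release=%v\n",
			tag, tagTriggers(tag, amd64), tagTriggers(tag, filtered))
	}
}
```

Under this reading, an `alpha` tag still produces amd64 images but no arm64 image or release binaries, which is consistent with the README note that the latest Docker image may be an `alpha` release.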

`.github/workflows/linux-release.yml` (new file, 54 changes) `@@ -0,0 +1,54 @@`

```yaml
name: Linux Release
permissions:
  contents: write

on:
  push:
    tags:
      - '*'
      - '!*-alpha*'
jobs:
  release:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout
        uses: actions/checkout@v3
        with:
          fetch-depth: 0
      - uses: actions/setup-node@v3
        with:
          node-version: 16
      - name: Build Frontend
        env:
          CI: ""
        run: |
          cd web
          npm install
          REACT_APP_VERSION=$(git describe --tags) npm run build
          cd ..
      - name: Set up Go
        uses: actions/setup-go@v3
        with:
          go-version: '>=1.18.0'
      - name: Build Backend (amd64)
        run: |
          go mod download
          go build -ldflags "-s -w -X 'one-api/common.Version=$(git describe --tags)' -extldflags '-static'" -o one-api

      - name: Build Backend (arm64)
        run: |
          sudo apt-get update
          sudo apt-get install gcc-aarch64-linux-gnu
          CC=aarch64-linux-gnu-gcc CGO_ENABLED=1 GOOS=linux GOARCH=arm64 go build -ldflags "-s -w -X 'one-api/common.Version=$(git describe --tags)' -extldflags '-static'" -o one-api-arm64

      - name: Release
        uses: softprops/action-gh-release@v1
        if: startsWith(github.ref, 'refs/tags/')
        with:
          files: |
            one-api
            one-api-arm64
          draft: true
          generate_release_notes: true
        env:
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
```
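Every backend build passes `-X 'one-api/common.Version=$(git describe --tags)'` to the Go linker, so the binary reports the Git tag it was built from. A minimal sketch of the mechanism, using a stand-alone `main.Version` variable as a stand-in for `one-api/common.Version` (the demo program, its placeholder value, and `versionString` are hypothetical):

```go
// A minimal demo of link-time version injection. Build it with, e.g.:
//
//	go build -ldflags "-s -w -X 'main.Version=$(git describe --tags)'" -o version-demo
//
// The workflows target one-api/common.Version instead, i.e. a package-level
// string variable named Version in the module's common package.
package main

import "fmt"

// Version keeps this placeholder unless the linker overrides it with -X.
// -X can only set package-level string variables, not constants.
var Version = "v0.0.0"

func versionString() string {
	return "one-api " + Version
}

func main() {
	fmt.Println(versionString())
}
```

In the same `-ldflags` string, `-s -w` strip the symbol table and DWARF debug information to shrink the binary, and `-extldflags '-static'` asks the external linker for a static binary; the macOS workflow passes only the `-X` flag.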

`.github/workflows/macos-release.yml` (new file, 45 changes) `@@ -0,0 +1,45 @@`

```yaml
name: macOS Release
permissions:
  contents: write

on:
  push:
    tags:
      - '*'
      - '!*-alpha*'
jobs:
  release:
    runs-on: macos-latest
    steps:
      - name: Checkout
        uses: actions/checkout@v3
        with:
          fetch-depth: 0
      - uses: actions/setup-node@v3
        with:
          node-version: 16
      - name: Build Frontend
        env:
          CI: ""
        run: |
          cd web
          npm install
          REACT_APP_VERSION=$(git describe --tags) npm run build
          cd ..
      - name: Set up Go
        uses: actions/setup-go@v3
        with:
          go-version: '>=1.18.0'
      - name: Build Backend
        run: |
          go mod download
          go build -ldflags "-X 'one-api/common.Version=$(git describe --tags)'" -o one-api-macos
      - name: Release
        uses: softprops/action-gh-release@v1
        if: startsWith(github.ref, 'refs/tags/')
        with:
          files: one-api-macos
          draft: true
          generate_release_notes: true
        env:
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
```

`.github/workflows/windows-release.yml` (new file, 48 changes) `@@ -0,0 +1,48 @@`

```yaml
name: Windows Release
permissions:
  contents: write

on:
  push:
    tags:
      - '*'
      - '!*-alpha*'
jobs:
  release:
    runs-on: windows-latest
    defaults:
      run:
        shell: bash
    steps:
      - name: Checkout
        uses: actions/checkout@v3
        with:
          fetch-depth: 0
      - uses: actions/setup-node@v3
        with:
          node-version: 16
      - name: Build Frontend
        env:
          CI: ""
        run: |
          cd web
          npm install
          REACT_APP_VERSION=$(git describe --tags) npm run build
          cd ..
      - name: Set up Go
        uses: actions/setup-go@v3
        with:
          go-version: '>=1.18.0'
      - name: Build Backend
        run: |
          go mod download
          go build -ldflags "-s -w -X 'one-api/common.Version=$(git describe --tags)'" -o one-api.exe
      - name: Release
        uses: softprops/action-gh-release@v1
        if: startsWith(github.ref, 'refs/tags/')
        with:
          files: one-api.exe
          draft: true
          generate_release_notes: true
        env:
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
```
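The three release workflows upload differently named binaries. A small Go sketch that picks the matching asset for the current machine; `assetName` is a hypothetical helper that only mirrors the workflows' `-o` outputs, and the darwin case assumes the binary matches the architecture of whichever `macos-latest` runner built it, since that workflow does not set `GOARCH`:

```go
package main

import (
	"fmt"
	"runtime"
)

// assetName maps a GOOS/GOARCH pair to the file the release workflows attach
// to a GitHub release. It mirrors the workflow files, not One API code.
func assetName(goos, goarch string) (string, bool) {
	switch {
	case goos == "linux" && goarch == "amd64":
		return "one-api", true // linux-release.yml, native build
	case goos == "linux" && goarch == "arm64":
		return "one-api-arm64", true // cross-compiled with aarch64-linux-gnu-gcc
	case goos == "darwin":
		return "one-api-macos", true // built for the macos-latest runner's architecture
	case goos == "windows" && goarch == "amd64":
		return "one-api.exe", true // windows-release.yml
	}
	return "", false
}

func main() {
	if name, ok := assetName(runtime.GOOS, runtime.GOARCH); ok {
		fmt.Println("release asset for this machine:", name)
	} else {
		fmt.Printf("no prebuilt binary for %s/%s; build from source\n", runtime.GOOS, runtime.GOARCH)
	}
}
```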

`.gitignore` (4 changes)

```
@@ -4,6 +4,4 @@ upload
*.exe
*.db
build
*.db-journal
logs
data
*.db-journal
```

`Dockerfile` (19 changes)

```
@@ -1,16 +1,10 @@
FROM node:16 as builder

WORKDIR /web
COPY ./VERSION .
WORKDIR /build
COPY ./web .

WORKDIR /web/default
COPY ./VERSION .
RUN npm install
RUN DISABLE_ESLINT_PLUGIN='true' REACT_APP_VERSION=$(cat VERSION) npm run build

WORKDIR /web/berry
RUN npm install
RUN DISABLE_ESLINT_PLUGIN='true' REACT_APP_VERSION=$(cat VERSION) npm run build
RUN REACT_APP_VERSION=$(cat VERSION) npm run build

FROM golang AS builder2

@@ -19,10 +13,9 @@ ENV GO111MODULE=on \
    GOOS=linux

WORKDIR /build
ADD go.mod go.sum ./
RUN go mod download
COPY . .
COPY --from=builder /web/build ./web/build
COPY --from=builder /build/build ./web/build
RUN go mod download
RUN go build -ldflags "-s -w -X 'one-api/common.Version=$(cat VERSION)' -extldflags '-static'" -o one-api

FROM alpine
@@ -35,4 +28,4 @@ RUN apk update \
COPY --from=builder2 /build/one-api /
EXPOSE 3000
WORKDIR /data
ENTRYPOINT ["/one-api"]
ENTRYPOINT ["/one-api"]
```

`README.en.md` (35 changes)

````
@@ -1,9 +1,9 @@
<p align="right">
   <a href="./README.md">中文</a> | <strong>English</strong> | <a href="./README.ja.md">日本語</a>
   <a href="./README.md">中文</a> | <strong>English</strong>
</p>

<p align="center">
  <a href="https://github.com/songquanpeng/one-api"><img src="https://raw.githubusercontent.com/songquanpeng/one-api/main/web/default/public/logo.png" width="150" height="150" alt="one-api logo"></a>
  <a href="https://github.com/songquanpeng/one-api"><img src="https://raw.githubusercontent.com/songquanpeng/one-api/main/web/public/logo.png" width="150" height="150" alt="one-api logo"></a>
</p>

<div align="center">
@@ -57,13 +57,15 @@ _✨ Access all LLM through the standard OpenAI API format, easy to deploy & use
> **Note**: The latest image pulled from Docker may be an `alpha` release. Specify the version manually if you require stability.

## Features
1. Support for multiple large models:
   + [x] [OpenAI ChatGPT Series Models](https://platform.openai.com/docs/guides/gpt/chat-completions-api) (Supports [Azure OpenAI API](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference))
   + [x] [Anthropic Claude Series Models](https://anthropic.com)
   + [x] [Google PaLM2 and Gemini Series Models](https://developers.generativeai.google)
   + [x] [Baidu Wenxin Yiyuan Series Models](https://cloud.baidu.com/doc/WENXINWORKSHOP/index.html)
   + [x] [Alibaba Tongyi Qianwen Series Models](https://help.aliyun.com/document_detail/2400395.html)
   + [x] [Zhipu ChatGLM Series Models](https://bigmodel.cn)
1. Supports multiple API access channels:
    + [x] Official OpenAI channel (support proxy configuration)
    + [x] **Azure OpenAI API**
    + [x] [API Distribute](https://api.gptjk.top/register?aff=QGxj)
    + [x] [OpenAI-SB](https://openai-sb.com)
    + [x] [API2D](https://api2d.com/r/197971)
    + [x] [OhMyGPT](https://aigptx.top?aff=uFpUl2Kf)
    + [x] [AI Proxy](https://aiproxy.io/?i=OneAPI) (invitation code: `OneAPI`)
    + [x] Custom channel: Various third-party proxy services not included in the list
2. Supports access to multiple channels through **load balancing**.
3. Supports **stream mode** that enables typewriter-like effect through stream transmission.
4. Supports **multi-machine deployment**. [See here](#multi-machine-deployment) for more details.
@@ -137,7 +139,7 @@ The initial account username is `root` and password is `123456`.
   cd one-api/web
   npm install
   npm run build


   # Build the backend
   cd ..
   go mod download
@@ -173,12 +175,7 @@ If you encounter a blank page after deployment, refer to [#97](https://github.co
<summary><strong>Deploy on Sealos</strong></summary>
<div>

> Sealos supports high concurrency, dynamic scaling, and stable operations for millions of users.

> Click the button below to deploy with one click.👇

[](https://cloud.sealos.io/?openapp=system-fastdeploy?templateName=one-api)

Please refer to [this tutorial](https://github.com/c121914yu/FastGPT/blob/main/docs/deploy/one-api/sealos.md).

</div>
</details>
@@ -189,10 +186,8 @@ If you encounter a blank page after deployment, refer to [#97](https://github.co

> Zeabur's servers are located overseas, automatically solving network issues, and the free quota is sufficient for personal usage.

[](https://zeabur.com/templates/7Q0KO3)

1. First, fork the code.
2. Go to [Zeabur](https://zeabur.com?referralCode=songquanpeng), log in, and enter the console.
2. Go to [Zeabur](https://zeabur.com/), log in, and enter the console.
3. Create a new project. In Service -> Add Service, select Marketplace, and choose MySQL. Note down the connection parameters (username, password, address, and port).
4. Copy the connection parameters and run ```create database `one-api` ``` to create the database.
5. Then, in Service -> Add Service, select Git (authorization is required for the first use) and choose your forked repository.
@@ -285,7 +280,7 @@ If the channel ID is not provided, load balancing will be used to distribute the
    + Double-check that your interface address and API Key are correct.

## Related Projects
[FastGPT](https://github.com/labring/FastGPT): Knowledge question answering system based on the LLM
[FastGPT](https://github.com/c121914yu/FastGPT): Build an AI knowledge base in three minutes

## Note
This project is an open-source project. Please use it in compliance with OpenAI's [Terms of Use](https://openai.com/policies/terms-of-use) and **applicable laws and regulations**. It must not be used for illegal purposes.
````

`README.ja.md` (deleted, 300 changes) `@@ -1,300 +0,0 @@`

<p align="right">
  <a href="./README.md">中文</a> | <a href="./README.en.md">English</a> | <strong>日本語</strong>
</p>

<p align="center">
  <a href="https://github.com/songquanpeng/one-api"><img src="https://raw.githubusercontent.com/songquanpeng/one-api/main/web/default/public/logo.png" width="150" height="150" alt="one-api logo"></a>
</p>

<div align="center">

# One API

_✨ Access all LLMs through the standard OpenAI API format, easy to deploy and use ✨_

</div>

<p align="center">
  <a href="https://raw.githubusercontent.com/songquanpeng/one-api/main/LICENSE">
    <img src="https://img.shields.io/github/license/songquanpeng/one-api?color=brightgreen" alt="license">
  </a>
  <a href="https://github.com/songquanpeng/one-api/releases/latest">
    <img src="https://img.shields.io/github/v/release/songquanpeng/one-api?color=brightgreen&include_prereleases" alt="release">
  </a>
  <a href="https://hub.docker.com/repository/docker/justsong/one-api">
    <img src="https://img.shields.io/docker/pulls/justsong/one-api?color=brightgreen" alt="docker pull">
  </a>
  <a href="https://github.com/songquanpeng/one-api/releases/latest">
    <img src="https://img.shields.io/github/downloads/songquanpeng/one-api/total?color=brightgreen&include_prereleases" alt="release">
  </a>
  <a href="https://goreportcard.com/report/github.com/songquanpeng/one-api">
    <img src="https://goreportcard.com/badge/github.com/songquanpeng/one-api" alt="GoReportCard">
  </a>
</p>

<p align="center">
  <a href="#deployment">Deployment Tutorial</a>
  ·
  <a href="#usage">Usage</a>
  ·
  <a href="https://github.com/songquanpeng/one-api/issues">Feedback</a>
  ·
  <a href="#screenshots">Screenshots</a>
  ·
  <a href="https://openai.justsong.cn/">Live Demo</a>
  ·
  <a href="#faq">FAQ</a>
  ·
  <a href="#related-projects">Related Projects</a>
  ·
  <a href="https://iamazing.cn/page/reward">Donate</a>
</p>

> **Warning**: This README was translated by ChatGPT. If you find a translation error, feel free to submit a PR.

> **Warning**: The Docker image for the English version is `justsong/one-api-en`.

> **Note**: The latest image pulled from Docker may be an `alpha` release. Specify the version manually if you require stability.

## Features
1. Supports multiple large models:
   + [x] [OpenAI ChatGPT series models](https://platform.openai.com/docs/guides/gpt/chat-completions-api) (supports [Azure OpenAI API](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference))
   + [x] [Anthropic Claude series models](https://anthropic.com)
   + [x] [Google PaLM2/Gemini series models](https://developers.generativeai.google)
   + [x] [Baidu Wenxin Yiyan series models](https://cloud.baidu.com/doc/WENXINWORKSHOP/index.html)
   + [x] [Alibaba Tongyi Qianwen series models](https://help.aliyun.com/document_detail/2400395.html)
   + [x] [Zhipu ChatGLM series models](https://bigmodel.cn)
2. Supports access to multiple channels through **load balancing**.
3. Supports **stream mode**, which enables a typewriter-like effect through streaming.
4. Supports **multi-machine deployment**. [See here](#multi-machine-deployment) for details.
5. Supports **token management**, with configurable expiration time and usage count.
6. Supports **voucher management**: vouchers can be generated and exported in batches and used to top up account balances.
7. Supports **channel management**, including batch creation of channels.
8. Supports **user groups** and **channel groups** for setting different rates per group.
9. Supports per-channel **model list configuration**.
10. Supports **quota detail checks**.
11. Supports **user invitation rewards**.
12. Supports displaying balances in US dollars.
13. Supports publishing announcements, setting a top-up link, and setting the initial balance for new users.
14. Offers rich **customization** options:
    1. Customizable system name, logo, and footer.
    2. Custom home and about pages written in HTML or Markdown, or a standalone web page embedded via an iframe.
15. Supports management API access via a system access token.
16. Supports user verification with Cloudflare Turnstile.
17. Supports user management and multiple login/registration methods:
    + Email login/registration and password reset.
    + [GitHub OAuth](https://github.com/settings/applications/new).
    + WeChat Official Account authorization (requires additionally deploying [WeChat Server](https://github.com/songquanpeng/wechat-server)).
18. Other major model APIs will be supported and wrapped promptly as they become available.

## Deployment
### Docker Deployment
Deployment command: `docker run --name one-api -d --restart always -p 3000:3000 -e TZ=Asia/Shanghai -v /home/ubuntu/data/one-api:/data justsong/one-api-en`

Update command: `docker run --rm -v /var/run/docker.sock:/var/run/docker.sock containrrr/watchtower -cR`

The first `3000` in `-p 3000:3000` is the host port and can be changed as needed.

Data is stored in the host directory `/home/ubuntu/data/one-api`. Make sure this directory exists and is writable, or change it to a suitable directory.

Nginx reference configuration:
```
server {
    server_name openai.justsong.cn;  # change the domain name as appropriate

    location / {
        client_max_body_size 64m;
        proxy_http_version 1.1;
        proxy_pass http://localhost:3000;  # change the port accordingly
        proxy_set_header Host $host;
        proxy_set_header X-Forwarded-For $remote_addr;
        proxy_cache_bypass $http_upgrade;
        proxy_set_header Accept-Encoding gzip;
        proxy_read_timeout 300s;  # GPT-4 needs a longer timeout
    }
}
```

Next, configure HTTPS with Let's Encrypt certbot:
```bash
# Install certbot on Ubuntu:
sudo snap install --classic certbot
sudo ln -s /snap/bin/certbot /usr/bin/certbot
# Generate the certificate and update the Nginx configuration
sudo certbot --nginx
# Follow the prompts
# Restart Nginx
sudo service nginx restart
```

The initial account username is `root` and the password is `123456`.

### Manual Deployment
1. Download the executable from [GitHub Releases](https://github.com/songquanpeng/one-api/releases/latest), or compile it from source:
   ```shell
   git clone https://github.com/songquanpeng/one-api.git

   # Build the frontend
   cd one-api/web
   npm install
   npm run build

   # Build the backend
   cd ..
   go mod download
   go build -ldflags "-s -w" -o one-api
   ```
2. Run:
   ```shell
   chmod u+x one-api
   ./one-api --port 3000 --log-dir ./logs
   ```
3. Visit [http://localhost:3000/](http://localhost:3000/) and log in. The initial account username is `root` and the password is `123456`.

For a more detailed deployment tutorial, see [this page](https://iamazing.cn/page/how-to-deploy-a-website).

### Multi-Machine Deployment
1. Set the same `SESSION_SECRET` on all servers.
2. Set `SQL_DSN` to use MySQL instead of SQLite; all servers connect to the same database.
3. Set `NODE_TYPE` to `slave` on every node other than the master.
4. Set `SYNC_FREQUENCY` on servers that should periodically sync configuration from the database.
5. Non-master nodes can optionally set `FRONTEND_BASE_URL` to redirect page requests to the master server.
6. Install Redis separately on the non-master nodes and set `REDIS_CONN_STRING`, so that, while the cache has not expired, reads are served with zero database latency.
7. If the master server also sees high latency when accessing the database, enable Redis on it as well and set `SYNC_FREQUENCY` to sync configuration from the database periodically.

Please refer to the [environment variables](#environment-variables) section for details on using environment variables.

### Deployment on Control Panels (e.g., Baota)
See [#175](https://github.com/songquanpeng/one-api/issues/175) for detailed instructions.

If you see a blank page after deployment, see [#97](https://github.com/songquanpeng/one-api/issues/97).

### Deployment on Third-Party Platforms
<details>
<summary><strong>Deploy on Sealos</strong></summary>
<div>

> Sealos supports high concurrency, dynamic scaling, and stable operation for millions of users.

> Click the button below to deploy with one click. 👇

[Deploy on Sealos](https://cloud.sealos.io/?openapp=system-fastdeploy?templateName=one-api)

</div>
</details>

<details>
<summary><strong>Deploy on Zeabur</strong></summary>
<div>

> Zeabur's servers are located overseas, which automatically avoids network issues.

[Deploy on Zeabur](https://zeabur.com/templates/7Q0KO3)

1. First, fork the code.
2. Go to [Zeabur](https://zeabur.com?referralCode=songquanpeng), log in, and enter the console.
3. Create a new project. In Service -> Add Service, select Marketplace, then MySQL. Note down the connection parameters (username, password, address, and port).
4. Copy the connection parameters and run ```create database `one-api` ``` to create the database.
5. Then, in Service -> Add Service, select Git (authorization is required on first use) and choose your forked repository.
6. Automatic deployment will start; cancel it for now. In the Variable tab, add `PORT` with the value `3000` and `SQL_DSN` with the value `<username>:<password>@tcp(<addr>:<port>)/one-api`, then save the changes. Note that if `SQL_DSN` is not set, data is not persisted and will be lost after redeployment.
7. Select Redeploy.
8. In the Domains tab, choose a suitable domain prefix such as "my-one-api"; the final domain will be "my-one-api.zeabur.app". You can also CNAME your own domain name.
9. Wait for the deployment to finish, then click the generated domain to access One API.

</div>
</details>
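Step 6 expects `SQL_DSN` in the form `<username>:<password>@tcp(<addr>:<port>)/one-api`, filled in from the connection parameters noted in step 3 (the same shape as the `SQL_DSN` example under the environment variables). A small Go sketch that assembles it; `buildDSN` is a hypothetical helper, and it does not escape special characters in the password:

```go
package main

import (
	"fmt"
	"net"
)

// buildDSN assembles a SQL_DSN value of the form
// <username>:<password>@tcp(<addr>:<port>)/<database>.
// net.JoinHostPort adds the brackets an IPv6 address needs.
func buildDSN(user, password, host, port, database string) string {
	return fmt.Sprintf("%s:%s@tcp(%s)/%s", user, password, net.JoinHostPort(host, port), database)
}

func main() {
	// Placeholder values; use the parameters noted from the MySQL service.
	fmt.Println(buildDSN("root", "123456", "localhost", "3306", "one-api"))
}
```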

## Configuration
The system works out of the box.

You can configure it by setting environment variables or command-line parameters.

After the system starts, log in as the `root` user to configure it further.

## Usage
Add your API keys on the `Channels` page, and add access tokens on the `Tokens` page.

You can then access One API with an access token. Usage is the same as the [OpenAI API](https://platform.openai.com/docs/api-reference/introduction).

Wherever the OpenAI API is used, remember to set the API Base to your One API deployment address (e.g., `https://openai.justsong.cn`). The API Key must be a token generated by One API.

Note that the exact API Base format depends on the client you are using.

```mermaid
graph LR
    A(User)
    A --->|Request| B(One API)
    B -->|Relayed request| C(OpenAI)
    B -->|Relayed request| D(Azure)
    B -->|Relayed request| E(Other downstream channels)
```

To choose the channel for the current request, append the channel ID to the token, for example: `Authorization: Bearer ONE_API_KEY-CHANNEL_ID`.
Note that the token must be created by an administrator for a channel ID to be accepted.

If no channel ID is specified, load balancing distributes requests across multiple channels.
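Putting the usage rules together: the client keeps the OpenAI request shape, points the API Base at the One API deployment, and optionally appends `-CHANNEL_ID` to the token. A minimal Go sketch with `net/http`; it assumes the API Base is given without a trailing `/v1` (clients differ, as noted above), and `newChatRequest`, the token value, and channel `2` are placeholders:

```go
package main

import (
	"bytes"
	"fmt"
	"net/http"
	"strings"
)

// newChatRequest builds an OpenAI-style chat-completions request aimed at a
// One API deployment. If channelID is non-empty it is appended to the token
// as "<token>-<channelID>" to pin the request to that channel (admin-created
// tokens only); otherwise One API load-balances across channels.
func newChatRequest(apiBase, token, channelID string, body []byte) (*http.Request, error) {
	endpoint := strings.TrimSuffix(apiBase, "/") + "/v1/chat/completions"
	req, err := http.NewRequest(http.MethodPost, endpoint, bytes.NewReader(body))
	if err != nil {
		return nil, err
	}
	key := token
	if channelID != "" {
		key += "-" + channelID
	}
	req.Header.Set("Authorization", "Bearer "+key)
	req.Header.Set("Content-Type", "application/json")
	return req, nil
}

func main() {
	body := []byte(`{"model":"gpt-3.5-turbo","messages":[{"role":"user","content":"Hello"}]}`)
	req, err := newChatRequest("https://openai.justsong.cn", "ONE_API_KEY", "2", body)
	if err != nil {
		panic(err)
	}
	fmt.Println(req.Method, req.URL, req.Header.Get("Authorization"))
	// Send it with http.DefaultClient.Do(req) and read the JSON response as usual.
}
```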

### Environment Variables
1. `REDIS_CONN_STRING`: When set, Redis is used instead of memory as the storage for request rate limiting.
    + Example: `REDIS_CONN_STRING=redis://default:redispw@localhost:49153`
2. `SESSION_SECRET`: When set, a fixed session key is used, so logged-in users' cookies remain valid after the system restarts.
    + Example: `SESSION_SECRET=random_string`
3. `SQL_DSN`: When set, the specified database is used instead of SQLite. Please use MySQL 8.0.
    + Example: `SQL_DSN=root:123456@tcp(localhost:3306)/oneapi`
4. `FRONTEND_BASE_URL`: When set, the specified frontend address is used instead of the backend address.
    + Example: `FRONTEND_BASE_URL=https://openai.justsong.cn`
5. `SYNC_FREQUENCY`: When set, the system periodically syncs configuration from the database, at this interval in seconds. If not set, no sync is performed.
    + Example: `SYNC_FREQUENCY=60`
6. `NODE_TYPE`: When set, specifies the node type. Valid values are `master` and `slave`. Defaults to `master` if not set.
    + Example: `NODE_TYPE=slave`
7. `CHANNEL_UPDATE_FREQUENCY`: When set, channel balances are updated periodically, at this interval in minutes. If not set, no updates are performed.
    + Example: `CHANNEL_UPDATE_FREQUENCY=1440`
8. `CHANNEL_TEST_FREQUENCY`: When set, channels are tested periodically. If not set, no tests are performed.
    + Example: `CHANNEL_TEST_FREQUENCY=1440`
9. `POLLING_INTERVAL`: The interval in seconds between requests when updating channel balances and testing channel availability. Defaults to no interval.
    + Example: `POLLING_INTERVAL=5`

### Command-Line Parameters
1. `--port <port_number>`: Specifies the port number the server listens on. Defaults to `3000`.
    + Example: `--port 3000`
2. `--log-dir <log_dir>`: Specifies the log directory. If not set, logs are not saved.
    + Example: `--log-dir ./logs`
3. `--version`: Prints the system version number and exits.
4. `--help`: Displays command usage help and parameter descriptions.
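A minimal Go sketch of the documented parameters using the standard `flag` package, which accepts both `-port` and `--port` and handles `-h`/`--help` itself by printing usage; `parseArgs`, `options`, and the `Version` placeholder are hypothetical, not One API's actual `main`:

```go
package main

import (
	"errors"
	"flag"
	"fmt"
	"os"
)

// Version is a placeholder; release builds inject it with -ldflags "-X ...".
var Version = "v0.0.0"

type options struct {
	port        int
	logDir      string
	showVersion bool
}

// parseArgs defines the documented flags on a fresh FlagSet so that it can be
// called with any argument list (useful for testing).
func parseArgs(args []string) (options, error) {
	var o options
	fs := flag.NewFlagSet("one-api", flag.ContinueOnError)
	fs.IntVar(&o.port, "port", 3000, "port number the server listens on")
	fs.StringVar(&o.logDir, "log-dir", "", "log directory; logs are not saved if empty")
	fs.BoolVar(&o.showVersion, "version", false, "print the version number and exit")
	err := fs.Parse(args) // -h/--help yields flag.ErrHelp after printing usage
	return o, err
}

func main() {
	o, err := parseArgs(os.Args[1:])
	if errors.Is(err, flag.ErrHelp) {
		return
	}
	if err != nil {
		os.Exit(2) // the FlagSet has already printed the error and usage
	}
	if o.showVersion {
		fmt.Println(Version)
		return
	}
	fmt.Printf("would listen on :%d, log dir %q\n", o.port, o.logDir)
}
```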

|

||||

|

||||

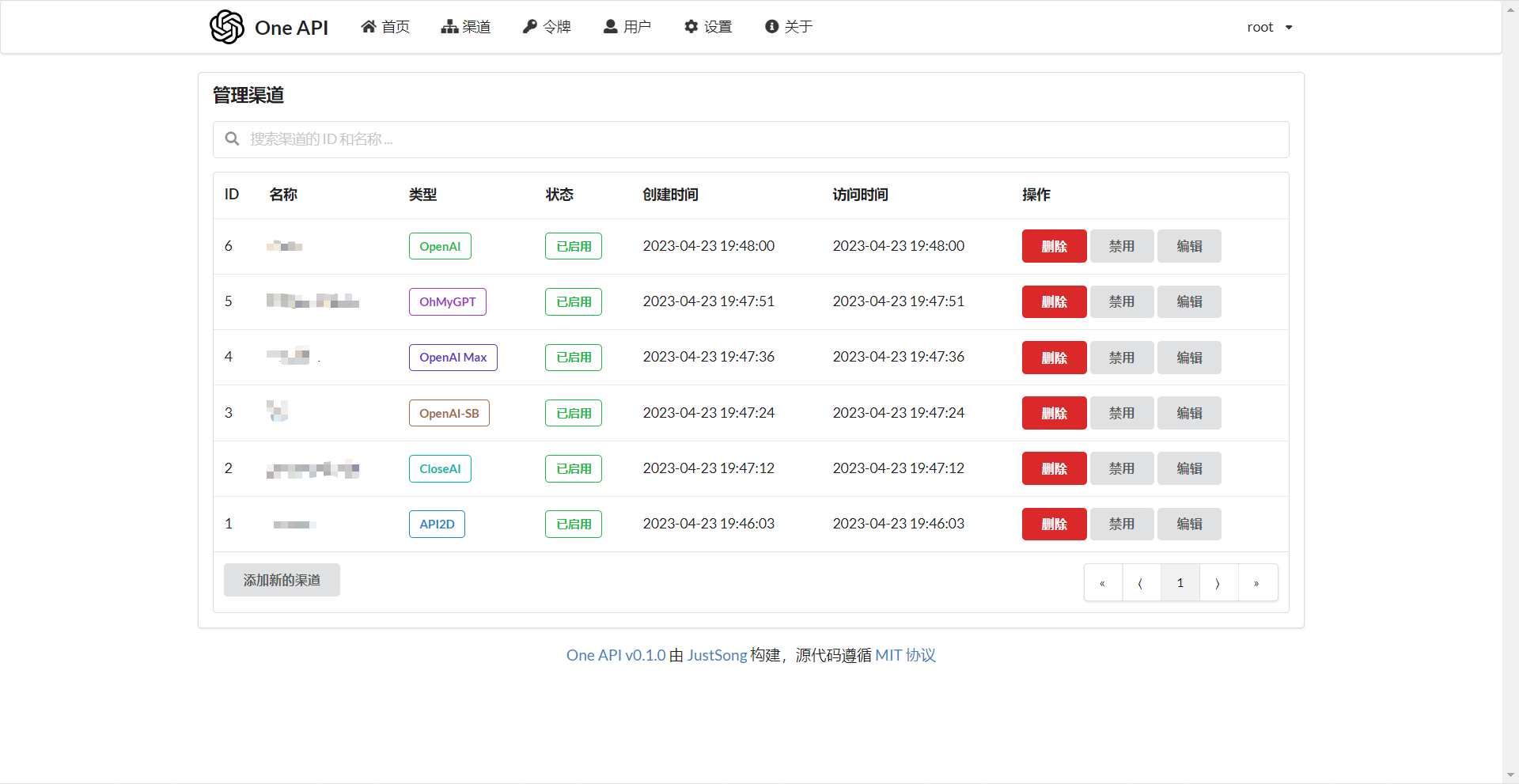

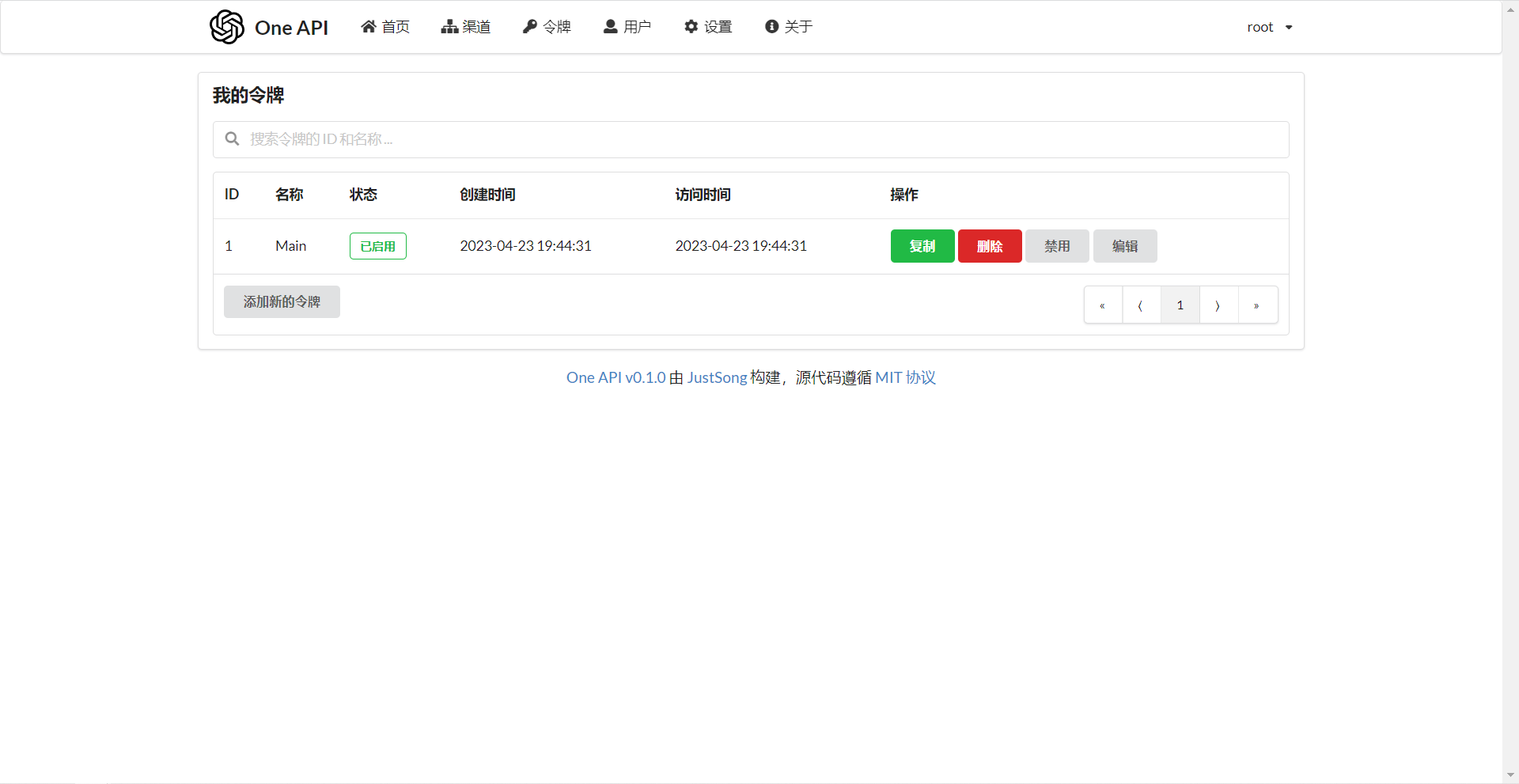

## スクリーンショット

|

||||

|

||||

|

||||

|

||||

## FAQ

|

||||

1. ノルマとは何か?どのように計算されますか?One API にはノルマ計算の問題はありますか?

|

||||

+ ノルマ = グループ倍率 * モデル倍率 * (プロンプトトークンの数 + 完了トークンの数 * 完了倍率)

|

||||

+ 完了倍率は、公式の定義と一致するように、GPT3.5 では 1.33、GPT4 では 2 に固定されています。

|

||||

+ ストリームモードでない場合、公式 API は消費したトークンの総数を返す。ただし、プロンプトとコンプリートの消費倍率は異なるので注意してください。

|

||||

2. アカウント残高は十分なのに、"insufficient quota" と表示されるのはなぜですか?

|

||||

+ トークンのクォータが十分かどうかご確認ください。トークンクォータはアカウント残高とは別のものです。

|

||||

+ トークンクォータは最大使用量を設定するためのもので、ユーザーが自由に設定できます。

|

||||

3. チャンネルを使おうとすると "No available channels" と表示されます。どうすればいいですか?

|

||||

+ ユーザーとチャンネルグループの設定を確認してください。

|

||||

+ チャンネルモデルの設定も確認してください。

|

||||

4. チャンネルテストがエラーを報告する: "invalid character '<' looking for beginning of value"

|

||||

+ このエラーは、返された値が有効な JSON ではなく、HTML ページである場合に発生する。

|

||||

+ ほとんどの場合、デプロイサイトのIPかプロキシのノードが CloudFlare によってブロックされています。

|

||||

5. ChatGPT Next Web でエラーが発生しました: "Failed to fetch"

|

||||

+ デプロイ時に `BASE_URL` を設定しないでください。

|

||||

+ インターフェイスアドレスと API Key が正しいか再確認してください。
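The quota formula from FAQ item 1 and the two separate limits behind item 2 fit in a short Go sketch. The model ratio of 15 and the token counts are made-up inputs; only the formula and the GPT-4 completion ratio of 2 come from the FAQ, and `computeQuota`/`canAfford` are hypothetical helpers:

```go
package main

import "fmt"

// computeQuota implements the FAQ formula:
// quota = groupRatio * modelRatio * (promptTokens + completionTokens*completionRatio)
func computeQuota(groupRatio, modelRatio, completionRatio float64, promptTokens, completionTokens int) float64 {
	return groupRatio * modelRatio * (float64(promptTokens) + float64(completionTokens)*completionRatio)
}

// canAfford reflects FAQ item 2: the token's own quota and the account
// balance are separate limits, and a request must fit within both.
func canAfford(cost, tokenRemaining, accountBalance float64) bool {
	return cost <= tokenRemaining && cost <= accountBalance
}

func main() {
	// Made-up inputs: group ratio 1, model ratio 15 (assumed), GPT-4
	// completion ratio 2, 1000 prompt tokens and 500 completion tokens.
	cost := computeQuota(1, 15, 2, 1000, 500)
	fmt.Println(cost)                        // 30000
	fmt.Println(canAfford(cost, 20000, 1e9)) // false: the token quota runs out first
}
```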

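## Related Projects
[FastGPT](https://github.com/labring/FastGPT): A knowledge-base question-answering system built on LLMs

## Note
This is an open-source project. Please use it in compliance with OpenAI's [Terms of Use](https://openai.com/policies/terms-of-use) and **applicable laws and regulations**. It must not be used for illegal purposes.

This project is released under the MIT license. On that basis, an attribution and a link to this project must be included at the bottom of the page.

The same applies to derivative projects based on this project.

If you do not want to include the attribution, you must obtain permission in advance.

Under the MIT license, the risks and responsibilities of using this project are borne by the user, and the developers of this open-source project are not liable.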

178

README.md

@@ -1,10 +1,10 @@

|

||||

<p align="right">

|

||||

<strong>中文</strong> | <a href="./README.en.md">English</a> | <a href="./README.ja.md">日本語</a>

|

||||

<strong>中文</strong> | <a href="./README.en.md">English</a>

|

||||

</p>

|

||||

|

||||

|

||||

<p align="center">

|

||||

<a href="https://github.com/songquanpeng/one-api"><img src="https://raw.githubusercontent.com/songquanpeng/one-api/main/web/default/public/logo.png" width="150" height="150" alt="one-api logo"></a>

|

||||

<a href="https://github.com/songquanpeng/one-api"><img src="https://raw.githubusercontent.com/songquanpeng/one-api/main/web/public/logo.png" width="150" height="150" alt="one-api logo"></a>

|

||||

</p>

|

||||

|

||||

<div align="center">

|

||||

@@ -51,29 +51,27 @@ _✨ 通过标准的 OpenAI API 格式访问所有的大模型,开箱即用

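<a href="https://iamazing.cn/page/reward">Donate</a>

</p>

> [!NOTE]

> This project is open source. Users must comply with OpenAI's [Terms of Use](https://openai.com/policies/terms-of-use) and **applicable laws and regulations**, and must not use it for illegal purposes.

>

> In accordance with the [Interim Measures for the Management of Generative Artificial Intelligence Services](http://www.cac.gov.cn/2023-07/13/c_1690898327029107.htm), do not provide the public in China with any generative AI service that has not completed the required filing.

> **Note**: This project is open source. Users must comply with OpenAI's [Terms of Use](https://openai.com/policies/terms-of-use) and **applicable laws and regulations**, and must not use it for illegal purposes.

> [!WARNING]

> The latest image pulled with Docker may be an `alpha` release. If you need stability, pin a specific version manually.

> **Note**: The latest image pulled with Docker may be an `alpha` release. If you need stability, pin a specific version manually.

> [!WARNING]

> After logging in as the root user for the first time, be sure to change the default password `123456`!

> **Warning**: Upgrading from `v0.3` to `v0.4` requires a manual database migration. Run the [database migration script](./bin/migration_v0.3-v0.4.sql) manually.

## Features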

1. Supports multiple large models:

+ [x] [OpenAI ChatGPT series models](https://platform.openai.com/docs/guides/gpt/chat-completions-api) (including [Azure OpenAI API](https://learn.microsoft.com/en-us/azure/ai-services/openai/reference))

+ [x] [Anthropic Claude series models](https://anthropic.com)

+ [x] [Google PaLM2/Gemini series models](https://developers.generativeai.google)

+ [x] [Google PaLM2 series models](https://developers.generativeai.google)

+ [x] [Baidu ERNIE Bot (Wenxin Yiyan) series models](https://cloud.baidu.com/doc/WENXINWORKSHOP/index.html)

+ [x] [Alibaba Tongyi Qianwen series models](https://help.aliyun.com/document_detail/2400395.html)

+ [x] [iFLYTEK Spark cognitive large model](https://www.xfyun.cn/doc/spark/Web.html)

+ [x] [Zhipu ChatGLM series models](https://bigmodel.cn)

+ [x] [360 Zhinao](https://ai.360.cn)

+ [x] [Tencent Hunyuan large model](https://cloud.tencent.com/document/product/1729)

2. Supports configuring mirrors and many [third-party proxy services](https://iamazing.cn/page/openai-api-third-party-services).

2. Supports configuring mirrors and many third-party proxy services:

+ [x] [API Distribute](https://api.gptjk.top/register?aff=QGxj)

+ [x] [OpenAI-SB](https://openai-sb.com)

+ [x] [API2D](https://api2d.com/r/197971)

+ [x] [OhMyGPT](https://aigptx.top?aff=uFpUl2Kf)

+ [x] [AI Proxy](https://aiproxy.io/?i=OneAPI) (invitation code: `OneAPI`)

+ [x] [CloseAI](https://console.closeai-asia.com/r/2412)

+ [x] Custom channels: for example, third-party proxy services not listed here

3. Supports accessing multiple channels through **load balancing**.

4. Supports **stream mode**, which produces a typewriter effect through streaming.

5. Supports **multi-machine deployment**; [see here](#多机部署) for details.

@@ -86,43 +84,33 @@ _✨ Access all large models through the standard OpenAI API format, out of the box

12. Supports **user invitation rewards**.

13. Supports displaying quota in US dollars.

14. Supports publishing announcements, setting a top-up link, and setting the initial quota for new users.

15. Supports model mapping, which redirects the model a user requests. Do not configure it unless necessary: once set, the request body is reconstructed instead of being passed through directly, so some fields that are not yet officially supported will not be forwarded.

15. Supports model mapping, which redirects the model a user requests.

16. Supports automatic retry on failure.

17. Supports the image generation API.

18. Supports [Cloudflare AI Gateway](https://developers.cloudflare.com/ai-gateway/providers/openai/): in the channel settings, set the proxy field to `https://gateway.ai.cloudflare.com/v1/ACCOUNT_TAG/GATEWAY/openai`.

19. Supports extensive **customization**:

18. Supports extensive **customization**:

1. Custom system name, logo, and footer.

2. Custom home and About pages, written in HTML & Markdown or embedded from a separate web page via an iframe.

20. Supports accessing the management API with a system access token (a bearer token used instead of a cookie; capture the network traffic to see how the API is used).

21. Supports Cloudflare Turnstile user verification.

22. Supports user management with **multiple login and registration methods**:

+ Email login and registration (with an allowlist for registration email domains), plus password reset via email.

19. Supports accessing the management API with a system access token.

20. Supports Cloudflare Turnstile user verification.

21. Supports user management with **multiple login and registration methods**:

+ Email login and registration, plus password reset via email.

+ [GitHub OAuth](https://github.com/settings/applications/new).

+ WeChat Official Account authorization (requires an additional [WeChat Server](https://github.com/songquanpeng/wechat-server) deployment).

23. Supports theme switching through the `THEME` environment variable (default: `default`). PRs adding more themes are welcome; see [here](./web/README.md) for details.

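Feature 15 (model mapping) is easier to follow with a concrete case. The Go sketch below is a hypothetical illustration, not One API's code. It assumes the mapping is stored as a JSON object from requested model to target model. It also decodes the request into a deliberately minimal struct (`chatRequest`, `applyModelMapping`, and `rewriteModel` are all made-up names). Because the body is re-encoded from that struct, any field the struct does not declare is dropped. This is the side effect the feature description warns about.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// chatRequest is a hypothetical, minimal request type: only the fields
// declared here survive a decode/re-encode round trip.
type chatRequest struct {
	Model    string            `json:"model"`
	Messages []json.RawMessage `json:"messages"`
}

// applyModelMapping looks up the requested model in a JSON mapping such as
// {"gpt-3.5-turbo": "gpt-35-turbo"} and returns the mapped name, or the
// original name when no mapping applies.
func applyModelMapping(mappingJSON, requested string) (string, error) {
	if mappingJSON == "" {
		return requested, nil
	}
	var mapping map[string]string
	if err := json.Unmarshal([]byte(mappingJSON), &mapping); err != nil {
		return "", fmt.Errorf("invalid model mapping: %w", err)
	}
	if target, ok := mapping[requested]; ok && target != "" {
		return target, nil
	}
	return requested, nil
}

// rewriteModel decodes the body, swaps the model, and re-encodes it.
// Fields not declared in chatRequest are lost in the process.
func rewriteModel(body []byte, mappingJSON string) ([]byte, error) {
	var req chatRequest
	if err := json.Unmarshal(body, &req); err != nil {
		return nil, err
	}
	model, err := applyModelMapping(mappingJSON, req.Model)
	if err != nil {
		return nil, err
	}
	req.Model = model
	return json.Marshal(req)
}

func main() {
	body := []byte(`{"model":"gpt-3.5-turbo","messages":[{"role":"user","content":"hi"}],"new_field":1}`)
	out, err := rewriteModel(body, `{"gpt-3.5-turbo":"gpt-35-turbo"}`)
	if err != nil {
		panic(err)
	}
	// "new_field" is missing from the re-encoded body.
	fmt.Println(string(out)) // {"model":"gpt-35-turbo","messages":[{"role":"user","content":"hi"}]}
}
```

Passing the raw body through unchanged avoids this loss, which is why the description recommends leaving the mapping empty unless you need it.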
## Deployment

### Deploying with Docker

```shell

# Deployment command using SQLite:

docker run --name one-api -d --restart always -p 3000:3000 -e TZ=Asia/Shanghai -v /home/ubuntu/data/one-api:/data justsong/one-api

# Deployment command using MySQL: add `-e SQL_DSN="root:123456@tcp(localhost:3306)/oneapi"` to the command above and adjust the connection parameters yourself. If you are unsure how, see the environment variables section below.

# For example:

docker run --name one-api -d --restart always -p 3000:3000 -e SQL_DSN="root:123456@tcp(localhost:3306)/oneapi" -e TZ=Asia/Shanghai -v /home/ubuntu/data/one-api:/data justsong/one-api

```

The first `3000` in `-p 3000:3000` is the host port; change it as needed.

Data and logs are stored in the host directory `/home/ubuntu/data/one-api`. Make sure the directory exists and is writable, or change it to a suitable one.

If startup fails, add `--privileged=true`; see https://github.com/songquanpeng/one-api/issues/482 for details.

Deployment command: `docker run --name one-api -d --restart always -p 3000:3000 -e TZ=Asia/Shanghai -v /home/ubuntu/data/one-api:/data justsong/one-api`

If the image above cannot be pulled, try the GitHub container image instead by replacing `justsong/one-api` with `ghcr.io/songquanpeng/one-api`.

If you expect high concurrency, you **must** set `SQL_DSN`; see the [Environment Variables](#环境变量) section below.

If you expect high concurrency, setting `SQL_DSN` is recommended; see the [Environment Variables](#环境变量) section below.

Update command: `docker run --rm -v /var/run/docker.sock:/var/run/docker.sock containrrr/watchtower -cR`

The first `3000` in `-p 3000:3000` is the host port; change it as needed.

Data is stored in the host directory `/home/ubuntu/data/one-api`. Make sure the directory exists and is writable, or change it to a suitable one.

Reference Nginx configuration:

```

server{

@@ -155,19 +143,6 @@ sudo service nginx restart

The initial account username is `root` and the password is `123456`.

### Deploying with Docker Compose

> Only the startup method differs; the parameters are the same. See the Docker deployment section.

```shell

# Currently starts with MySQL; data is stored in the ./data/mysql folder

docker-compose up -d

# Check the deployment status

docker-compose ps

```

### Manual deployment

1. Download the executable from [GitHub Releases](https://github.com/songquanpeng/one-api/releases/latest) or build it from source:

```shell

@@ -177,7 +152,7 @@ docker-compose ps

cd one-api/web

npm install

npm run build

# Build the backend

cd ..

go mod download

@@ -230,23 +205,14 @@ docker run --name chatgpt-web -d -p 3002:3002 -e OPENAI_API_BASE_URL=https://ope

Remember to change the port number, `OPENAI_API_BASE_URL`, and `OPENAI_API_KEY`.

#### QChatGPT - QQ bot

Project homepage: https://github.com/RockChinQ/QChatGPT

After deploying according to its documentation, open `config.py` and make three changes. Set `reverse_proxy` in the `openai_config` option to the One API backend address, and set `api_key` to a key generated by One API. Then set the `model` parameter in the `completion_api_params` option to a model name that One API supports.

You can install the [Switcher plugin](https://github.com/RockChinQ/Switcher) to switch models at runtime.

### Deploying to third-party platforms

<details>

<summary><strong>Deploy to Sealos</strong></summary>

<div>

> Sealos servers are located outside China, so no extra network handling is needed, and it supports high concurrency & dynamic scaling.

> Visual deployment on Sealos takes only 1 minute.

Click the button below for one-click deployment (if you get a 404 after deploying, wait 3 to 5 minutes):

[](https://cloud.sealos.io/?openapp=system-fastdeploy?templateName=one-api)

Follow steps 1 to 5 of this [tutorial](https://github.com/c121914yu/FastGPT/blob/main/docs/deploy/one-api/sealos.md).

</div>

</details>

@@ -255,12 +221,10 @@ docker run --name chatgpt-web -d -p 3002:3002 -e OPENAI_API_BASE_URL=https://ope

<summary><strong>Deploy to Zeabur</strong></summary>

<div>

> Zeabur servers are located outside China, which automatically solves network issues, and the free quota is enough for personal use

[](https://zeabur.com/templates/7Q0KO3)

> Zeabur servers are located outside China, which automatically solves network issues, and the free quota is enough for personal use.

1. First, fork the repository.

2. Go to [Zeabur](https://zeabur.com?referralCode=songquanpeng), log in, and open the console.

2. Go to [Zeabur](https://zeabur.com/), log in, and open the console.

3. Create a new Project. Under Service -> Add Service, choose Marketplace, select MySQL, and note the connection parameters (username, password, host, port).

4. Copy the connection parameters, connect, and run ```create database `one-api` ``` to create the database.

5. Then, under Service -> Add Service, choose Git (authorize it first if this is your first time), and select your forked repository.

@@ -272,17 +236,6 @@ docker run --name chatgpt-web -d -p 3002:3002 -e OPENAI_API_BASE_URL=https://ope

</div>

</details>

<details>

<summary><strong>Deploy to Render</strong></summary>

<div>

> Render offers a free quota, which can be raised further after adding a payment card

Render can deploy the Docker image directly, without forking the repository: https://dashboard.render.com

</div>

</details>

## Configuration

The system works out of the box.

@@ -301,20 +254,13 @@ Render can deploy the Docker image directly, without forking the repository: https://dashbo

Note that the exact API Base format depends on the client you use.

For example, for OpenAI's official library:

```bash

OPENAI_API_KEY="sk-xxxxxx"

OPENAI_API_BASE="https://<HOST>:<PORT>/v1"

```

```mermaid

graph LR

A(User)

A --->|Request using a key issued by One API| B(One API)

A --->|Request| B(One API)

B -->|Relay request| C(OpenAI)

B -->|Relay request| D(Azure)

B -->|Relay request| E(Other downstream channels in OpenAI API format)

B -->|Relay and transform request and response bodies| F(Downstream channels not in OpenAI API format)

B -->|Relay request| E(Other downstream channels)

```

You can specify which channel handles a request by appending the channel ID to the token, for example: `Authorization: Bearer ONE_API_KEY-CHANNEL_ID`.

@@ -323,57 +269,32 @@ graph LR

Without a channel ID, requests are distributed across multiple channels by load balancing.

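A small Go parser illustrates the `ONE_API_KEY-CHANNEL_ID` convention. This is a sketch under stated assumptions, and `parseToken` is a hypothetical helper. It first strips an optional `sk-` prefix so that the prefix's own hyphen is not mistaken for the separator. It then treats everything after the next `-` as a numeric channel ID. When no suffix is present, the caller would fall back to load balancing.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// parseToken splits "Bearer <key>" or "Bearer <key>-<channelID>".
// Assumptions: an optional "sk-" prefix is stripped first, and the key
// itself contains no further '-' characters.
func parseToken(header string) (key string, channelID int, hasChannel bool, err error) {
	token := strings.TrimPrefix(header, "Bearer ")
	token = strings.TrimPrefix(token, "sk-")
	parts := strings.SplitN(token, "-", 2)
	key = parts[0]
	if len(parts) == 1 || parts[1] == "" {
		// No channel suffix: the caller would use load balancing.
		return key, 0, false, nil
	}
	channelID, err = strconv.Atoi(parts[1])
	if err != nil {
		return key, 0, false, fmt.Errorf("invalid channel ID %q: %w", parts[1], err)
	}
	return key, channelID, true, nil
}

func main() {
	key, id, ok, err := parseToken("Bearer sk-abc123-7")
	fmt.Println(key, id, ok, err) // abc123 7 true <nil>
}
```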
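### Environment variables

1. `REDIS_CONN_STRING`: when set, Redis is used as the cache.

1. `REDIS_CONN_STRING`: when set, Redis is used to store request rate-limit data instead of in-memory storage.

+ Example: `REDIS_CONN_STRING=redis://default:redispw@localhost:49153`

+ If database access latency is very low, there is no need to enable Redis; enabling it may instead cause stale data.

2. `SESSION_SECRET`: when set, a fixed session secret is used, so the cookies of logged-in users remain valid after the system restarts.

+ Example: `SESSION_SECRET=random_string`

3. `SQL_DSN`: when set, the specified database is used instead of SQLite. Use MySQL or PostgreSQL.

+ Examples:

+ MySQL: `SQL_DSN=root:123456@tcp(localhost:3306)/oneapi`

+ PostgreSQL: `SQL_DSN=postgres://postgres:123456@localhost:5432/oneapi` (support is still being adapted; feedback welcome)

3. `SQL_DSN`: when set, the specified database is used instead of SQLite. Use MySQL 8.0.

+ Example: `SQL_DSN=root:123456@tcp(localhost:3306)/oneapi`

+ Note: the `oneapi` database must be created in advance. Tables do not need to be created manually; the program creates them automatically.

+ If you use a local database: add `--network="host"` to the deployment command so that the program inside the container can reach MySQL on the host.

+ If you use a cloud database whose server requires identity verification, add `?tls=skip-verify` to the connection parameters.

+ Adjust the following parameters to match your database configuration (or keep the defaults):

+ `SQL_MAX_IDLE_CONNS`: maximum number of idle connections; default `100`.

+ `SQL_MAX_OPEN_CONNS`: maximum number of open connections; default `1000`.

+ If you see `Error 1040: Too many connections`, reduce this value accordingly.

+ `SQL_CONN_MAX_LIFETIME`: maximum lifetime of a connection, in minutes; default `60`.

4. `FRONTEND_BASE_URL`: when set, page requests are redirected to the specified address. Only set this on slave servers.

+ Example: `FRONTEND_BASE_URL=https://openai.justsong.cn`

5. `MEMORY_CACHE_ENABLED`: enables the in-memory cache, which delays user quota updates somewhat. Valid values are `true` and `false`; defaults to `false` if unset.

+ Example: `MEMORY_CACHE_ENABLED=true`

6. `SYNC_FREQUENCY`: how often configuration is synchronized with the database when caching is enabled, in seconds; default `600`.

5. `SYNC_FREQUENCY`: when set, configuration is periodically synchronized with the database, in seconds; if unset, no synchronization takes place.

+ Example: `SYNC_FREQUENCY=60`

7. `NODE_TYPE`: sets the node type. Valid values are `master` and `slave`; defaults to `master` if unset.

6. `NODE_TYPE`: sets the node type. Valid values are `master` and `slave`; defaults to `master` if unset.

+ Example: `NODE_TYPE=slave`

8. `CHANNEL_UPDATE_FREQUENCY`: when set, channel balances are updated periodically, in minutes; if unset, balances are not updated.

7. `CHANNEL_UPDATE_FREQUENCY`: when set, channel balances are updated periodically, in minutes; if unset, balances are not updated.

+ Example: `CHANNEL_UPDATE_FREQUENCY=1440`

9. `CHANNEL_TEST_FREQUENCY`: when set, channels are checked periodically, in minutes; if unset, no checks are performed.

8. `CHANNEL_TEST_FREQUENCY`: when set, channels are checked periodically, in minutes; if unset, no checks are performed.

+ Example: `CHANNEL_TEST_FREQUENCY=1440`

10. `POLLING_INTERVAL`: the interval between requests when batch-updating channel balances and testing availability, in seconds; no interval by default.

+ Example: `POLLING_INTERVAL=5`

11. `BATCH_UPDATE_ENABLED`: enables aggregated batch updates to the database, which delays user quota updates somewhat. Valid values are `true` and `false`; defaults to `false` if unset.

+ Example: `BATCH_UPDATE_ENABLED=true`

+ If you run into too many database connections, try enabling this option.

12. `BATCH_UPDATE_INTERVAL`: the interval for aggregated batch updates, in seconds; default `5`.

+ Example: `BATCH_UPDATE_INTERVAL=5`

13. Request rate limits:

+ `GLOBAL_API_RATE_LIMIT`: global API rate limit (excluding relay requests), the maximum number of requests per IP within three minutes; default `180`.

+ `GLOBAL_WEB_RATE_LIMIT`: global web rate limit, the maximum number of requests per IP within three minutes; default `60`.

14. Encoder cache settings:

+ `TIKTOKEN_CACHE_DIR`: by default, the program downloads the encodings for some common tokenizers at startup (for example, the one for `gpt-3.5-turbo`). On unstable networks or offline, this can cause startup problems. Set this directory to cache the data; the cache can then be copied to an offline environment.

+ `DATA_GYM_CACHE_DIR`: currently has the same effect as `TIKTOKEN_CACHE_DIR`, but with lower priority.

15. `RELAY_TIMEOUT`: relay timeout, in seconds; no timeout by default.

16. `SQLITE_BUSY_TIMEOUT`: SQLite lock-wait timeout, in milliseconds; default `3000`.

17. `GEMINI_SAFETY_SETTING`: Gemini safety setting; default `BLOCK_NONE`.

18. `THEME`: the system theme; default `default`. See [here](./web/README.md) for available values.

9. `POLLING_INTERVAL`: the interval between requests when batch-updating channel balances and testing availability, in seconds; no interval by default.

+ Example: `POLLING_INTERVAL=5`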

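The `SQL_DSN` description implies a simple backend choice: no value means SQLite, a PostgreSQL URL means PostgreSQL, and anything else is treated as a MySQL DSN. The Go sketch below shows that decision under stated assumptions. `chooseDriver` is a hypothetical helper, and detecting PostgreSQL by the `postgres://` prefix is inferred from the example DSNs above rather than taken from the project's code.

```go
package main

import (
	"fmt"
	"os"
	"strings"
)

// chooseDriver maps a SQL_DSN value to a backend name.
// Hypothetical helper; the "postgres://" prefix rule is an assumption
// based on the example DSNs in the README.
func chooseDriver(dsn string) string {
	switch {
	case dsn == "":
		return "sqlite" // no SQL_DSN: fall back to the local SQLite file
	case strings.HasPrefix(dsn, "postgres://"):
		return "postgres"
	default:
		return "mysql" // e.g. root:123456@tcp(localhost:3306)/oneapi
	}
}

func main() {
	fmt.Println("SQL_DSN backend:", chooseDriver(os.Getenv("SQL_DSN")))
}
```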


### Command-line arguments

1. `--port <port_number>`: the port the server listens on; default `3000`.

+ Example: `--port 3000`

2. `--log-dir <log_dir>`: the log directory. If not set, logs are saved to the `logs` folder in the working directory.

2. `--log-dir <log_dir>`: the log directory. If not set, logs are not saved.

+ Example: `--log-dir ./logs`

3. `--version`: print the system version and exit.

4. `--help`: show command usage and argument descriptions.

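@@ -392,7 +313,6 @@ https://openai.justsong.cn

+ Quota = group ratio * model ratio * (prompt tokens + completion tokens * completion ratio)

+ The completion ratio is fixed at 1.33 for GPT-3.5 and 2 for GPT-4, consistent with the official pricing.

+ In non-stream mode, the official API returns the total number of tokens consumed, but note that prompt and completion tokens are billed at different ratios.

+ Note that One API's default ratios are the official ratios and have already been adjusted.

2. Why am I told my quota is insufficient when my account has enough?

+ Check whether your token's quota is sufficient; it is separate from the account quota.

+ The token quota only lets users cap their maximum usage, and users can set it freely.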

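@@ -405,19 +325,11 @@ https://openai.justsong.cn

5. ChatGPT Next Web error: `Failed to fetch`

+ Do not set `BASE_URL` when deploying.

+ Check that your API address and API key are filled in correctly.

+ Check whether HTTPS is enabled; browsers block HTTP requests made from pages served over HTTPS.

6. Error: `当前分组负载已饱和,请稍后再试` ("the current group is at capacity, please try again later")

+ The upstream channel returned HTTP 429.

7. Will my data be lost after upgrading?

+ Not if you use MySQL.

+ If you use SQLite, you must mount a volume as in the deployment command given above to persist the one-api.db database file; otherwise the data will be lost when the container restarts.

8. Do I need to change the database before upgrading?

+ Usually not; the system adjusts it automatically during initialization.

+ If changes are needed, I will note them in the changelog and provide a script.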

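FAQ item 1's formula and item 2's distinction between token quota and account quota can be combined in a short Go sketch. `computeQuota` and `canConsume` are hypothetical names. Rounding the result up to a whole quota unit is an assumption, and the ratios in the example are illustrative rather than One API's actual defaults.

```go
package main

import (
	"fmt"
	"math"
)

// computeQuota applies the FAQ formula:
// quota = groupRatio * modelRatio * (promptTokens + completionTokens*completionRatio).
// Rounding up to a whole quota unit is an assumption of this sketch.
func computeQuota(groupRatio, modelRatio, completionRatio float64, promptTokens, completionTokens int) int {
	raw := groupRatio * modelRatio * (float64(promptTokens) + float64(completionTokens)*completionRatio)
	return int(math.Ceil(raw))
}

// canConsume reflects FAQ item 2: the token quota and the account quota are
// separate, so a request must fit within both.
func canConsume(cost, tokenRemaining, accountRemaining int) bool {
	return cost <= tokenRemaining && cost <= accountRemaining
}

func main() {
	// Illustrative ratios: group 1, model 15, completion 2.
	cost := computeQuota(1, 15, 2, 1000, 500)
	fmt.Println(cost)                               // 30000
	fmt.Println(canConsume(cost, 20000, 1_000_000)) // false: the token quota is too small
}
```

Item 2's situation is the second line of output. The account has plenty of quota, but the token's own cap is lower than the request's cost, so the request is rejected.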
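## Related projects

* [FastGPT](https://github.com/labring/FastGPT): a knowledge-base Q&A system built on large language models

* [ChatGPT Next Web](https://github.com/Yidadaa/ChatGPT-Next-Web): get your own cross-platform ChatGPT app in one click

[FastGPT](https://github.com/c121914yu/FastGPT): build an AI knowledge base in three minutes

## Notice

@@ -425,4 +337,4 @@ https://openai.justsong.cn

The same applies to derivative projects based on this project.

Under the MIT License, users bear the risks and responsibilities of using this project themselves; the developers of this open-source project are not liable.

Under the MIT License, users bear the risks and responsibilities of using this project themselves; the developers of this open-source project are not liable.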

@@ -21,9 +21,12 @@ var QuotaPerUnit = 500 * 1000.0 // $0.002 / 1K tokens
var DisplayInCurrencyEnabled = true
var DisplayTokenStatEnabled = true

var UsingSQLite = false

// Any options with "Secret" or "Token" in their key won't be returned by GetOptions

var SessionSecret = uuid.New().String()
var SQLitePath = "one-api.db"

var OptionMap map[string]string
var OptionMapRWMutex sync.RWMutex

@@ -35,26 +38,12 @@ var PasswordLoginEnabled = true
var PasswordRegisterEnabled = true
var EmailVerificationEnabled = false
var GitHubOAuthEnabled = false
var DiscordOAuthEnabled = false
var WeChatAuthEnabled = false
var GoogleOAuthEnabled = false
var TurnstileCheckEnabled = false
var RegisterEnabled = true

var EmailDomainRestrictionEnabled = false
var EmailDomainWhitelist = []string{
	"gmail.com",
	"163.com",
	"126.com",
	"qq.com",
	"outlook.com",
	"hotmail.com",
	"icloud.com",
	"yahoo.com",
	"foxmail.com",
}

var DebugEnabled = os.Getenv("DEBUG") == "true"
var MemoryCacheEnabled = os.Getenv("MEMORY_CACHE_ENABLED") == "true"

var LogConsumeEnabled = true

var SMTPServer = ""

@@ -62,15 +51,20 @@ var SMTPPort = 587
var SMTPAccount = ""
var SMTPFrom = ""
var SMTPToken = ""
var SMTPAuthLoginEnabled = false

var GitHubClientId = ""
var GitHubClientSecret = ""

var DiscordClientId = ""
var DiscordClientSecret = ""

var WeChatServerAddress = ""
var WeChatServerToken = ""
var WeChatAccountQRCodeImageURL = ""

var GoogleClientId = ""
var GoogleClientSecret = ""

var TurnstileSiteKey = ""
var TurnstileSecretKey = ""

@@ -79,7 +73,6 @@ var QuotaForInviter = 0
var QuotaForInvitee = 0
var ChannelDisableThreshold = 5.0
var AutomaticDisableChannelEnabled = false
var AutomaticEnableChannelEnabled = false
var QuotaRemindThreshold = 1000
var PreConsumedQuota = 500
var ApproximateTokenEnabled = false

@@ -92,24 +85,7 @@ var IsMasterNode = os.Getenv("NODE_TYPE") != "slave"
var requestInterval, _ = strconv.Atoi(os.Getenv("POLLING_INTERVAL"))
var RequestInterval = time.Duration(requestInterval) * time.Second

var SyncFrequency = GetOrDefault("SYNC_FREQUENCY", 10*60) // unit is second

var BatchUpdateEnabled = false
var BatchUpdateInterval = GetOrDefault("BATCH_UPDATE_INTERVAL", 5)

var RelayTimeout = GetOrDefault("RELAY_TIMEOUT", 0) // unit is second

var GeminiSafetySetting = GetOrDefaultString("GEMINI_SAFETY_SETTING", "BLOCK_NONE")

var Theme = GetOrDefaultString("THEME", "default")
var ValidThemes = map[string]bool{
	"default": true,
	"berry":   true,
}

const (
	RequestIdKey = "X-Oneapi-Request-Id"
)
var SyncFrequency = 10 * 60 // unit is second, will be overwritten by SYNC_FREQUENCY

const (
	RoleGuestUser = 0

@@ -128,10 +104,10 @@ var (
// All durations are in seconds
// Shouldn't be larger than RateLimitKeyExpirationDuration
var (
	GlobalApiRateLimitNum = GetOrDefault("GLOBAL_API_RATE_LIMIT", 180)
	GlobalApiRateLimitNum = 180
	GlobalApiRateLimitDuration int64 = 3 * 60

	GlobalWebRateLimitNum = GetOrDefault("GLOBAL_WEB_RATE_LIMIT", 60)
	GlobalWebRateLimitNum = 60
	GlobalWebRateLimitDuration int64 = 3 * 60

	UploadRateLimitNum = 10

@@ -165,64 +141,57 @@ const (
)

const (
	ChannelStatusUnknown = 0
	ChannelStatusEnabled = 1 // don't use 0, 0 is the default value!
	ChannelStatusManuallyDisabled = 2 // also don't use 0
	ChannelStatusAutoDisabled = 3
	ChannelStatusUnknown = 0
	ChannelStatusEnabled = 1 // don't use 0, 0 is the default value!
	ChannelStatusDisabled = 2 // also don't use 0
)

const (
	ChannelTypeUnknown = 0
	ChannelTypeOpenAI = 1
	ChannelTypeAPI2D = 2
	ChannelTypeAzure = 3
	ChannelTypeCloseAI = 4
	ChannelTypeOpenAISB = 5
	ChannelTypeOpenAIMax = 6
	ChannelTypeOhMyGPT = 7
	ChannelTypeCustom = 8
	ChannelTypeAILS = 9
	ChannelTypeAIProxy = 10
	ChannelTypePaLM = 11
	ChannelTypeAPI2GPT = 12
	ChannelTypeAIGC2D = 13
	ChannelTypeAnthropic = 14
	ChannelTypeBaidu = 15
	ChannelTypeZhipu = 16
	ChannelTypeAli = 17
	ChannelTypeXunfei = 18
	ChannelType360 = 19
	ChannelTypeOpenRouter = 20
	ChannelTypeAIProxyLibrary = 21
	ChannelTypeFastGPT = 22
	ChannelTypeTencent = 23
	ChannelTypeGemini = 24
	ChannelAllowNonStreamEnabled = 1
	ChannelAllowNonStreamDisabled = 2
)

const (
	ChannelAllowStreamEnabled = 1
	ChannelAllowStreamDisabled = 2
)

const (
	ChannelTypeUnknown = 0
	ChannelTypeOpenAI = 1
	ChannelTypeAPI2D = 2
	ChannelTypeAzure = 3
	ChannelTypeCloseAI = 4
	ChannelTypeOpenAISB = 5
	ChannelTypeOpenAIMax = 6
	ChannelTypeOhMyGPT = 7
	ChannelTypeCustom = 8
	ChannelTypeAILS = 9
	ChannelTypeAIProxy = 10
	ChannelTypePaLM = 11
	ChannelTypeAPI2GPT = 12
	ChannelTypeAIGC2D = 13
	ChannelTypeAnthropic = 14
	ChannelTypeBaidu = 15
	ChannelTypeZhipu = 16
)

var ChannelBaseURLs = []string{
	"", // 0
	"https://api.openai.com", // 1
	"https://oa.api2d.net", // 2
	"", // 3
	"https://api.closeai-proxy.xyz", // 4
	"https://api.openai-sb.com", // 5
	"https://api.openaimax.com", // 6
	"https://api.ohmygpt.com", // 7
	"", // 8
	"https://api.caipacity.com", // 9
	"https://api.aiproxy.io", // 10
	"", // 11
	"https://api.api2gpt.com", // 12
	"https://api.aigc2d.com", // 13
	"https://api.anthropic.com", // 14
	"https://aip.baidubce.com", // 15
	"https://open.bigmodel.cn", // 16
	"https://dashscope.aliyuncs.com", // 17
	"", // 18
	"https://ai.360.cn", // 19
	"https://openrouter.ai/api", // 20
	"https://api.aiproxy.io", // 21
	"https://fastgpt.run/api/openapi", // 22
	"https://hunyuan.cloud.tencent.com", // 23
	"", // 24
	"", // 0
	"https://api.openai.com", // 1
	"https://oa.api2d.net", // 2
	"", // 3
	"https://api.closeai-proxy.xyz", // 4
	"https://api.openai-sb.com", // 5
	"https://api.openaimax.com", // 6
	"https://api.ohmygpt.com", // 7
	"", // 8
	"https://api.caipacity.com", // 9
	"https://api.aiproxy.io", // 10
	"", // 11
	"https://api.api2gpt.com", // 12
	"https://api.aigc2d.com", // 13
	"https://api.anthropic.com", // 14
	"https://aip.baidubce.com", // 15
	"https://open.bigmodel.cn", // 16
}

@@ -1,7 +0,0 @@
package common

var UsingSQLite = false
var UsingPostgreSQL = false

var SQLitePath = "one-api.db"
var SQLiteBusyTimeout = GetOrDefault("SQLITE_BUSY_TIMEOUT", 3000)

@@ -1,79 +1,27 @@
package common

import (
	"crypto/rand"
	"crypto/tls"
	"encoding/base64"
	"errors"
	"fmt"
	"net/smtp"
	"strings"
	"time"
)

type loginAuth struct {
	username, password string
}

func LoginAuth(username, password string) smtp.Auth {
	return &loginAuth{username, password}
}

func (a *loginAuth) Start(_ *smtp.ServerInfo) (string, []byte, error) {
	return "LOGIN", []byte(a.username), nil
}

func (a *loginAuth) Next(fromServer []byte, more bool) ([]byte, error) {
	if more {
		switch string(fromServer) {
		case "Username:":
			return []byte(a.username), nil
		case "Password:":
			return []byte(a.password), nil
		default:
			return nil, errors.New("unknown command from server during login auth")
		}
	}
	return nil, nil
}

func SendEmail(subject string, receiver string, content string) error {
	if SMTPFrom == "" { // for compatibility
		SMTPFrom = SMTPAccount
	}
	encodedSubject := fmt.Sprintf("=?UTF-8?B?%s?=", base64.StdEncoding.EncodeToString([]byte(subject)))

	// Extract domain from SMTPFrom
	parts := strings.Split(SMTPFrom, "@")
	var domain string
	if len(parts) > 1 {
		domain = parts[1]
	}
	// Generate a unique Message-ID
	buf := make([]byte, 16)
	_, err := rand.Read(buf)
	if err != nil {
		return err
	}
	messageId := fmt.Sprintf("<%x@%s>", buf, domain)

	mail := []byte(fmt.Sprintf("To: %s\r\n"+
		"From: %s<%s>\r\n"+
		"Subject: %s\r\n"+
		"Message-ID: %s\r\n"+ // add Message-ID header to avoid being treated as spam, RFC 5322
		"Date: %s\r\n"+
		"Content-Type: text/html; charset=UTF-8\r\n\r\n%s\r\n",
		receiver, SystemName, SMTPFrom, encodedSubject, messageId, time.Now().Format(time.RFC1123Z), content))

	var auth smtp.Auth
	if SMTPAuthLoginEnabled {
		auth = LoginAuth(SMTPAccount, SMTPToken)
	} else {
		auth = smtp.PlainAuth("", SMTPAccount, SMTPToken, SMTPServer)
	}
		receiver, SystemName, SMTPFrom, encodedSubject, content))
	auth := smtp.PlainAuth("", SMTPAccount, SMTPToken, SMTPServer)
	addr := fmt.Sprintf("%s:%d", SMTPServer, SMTPPort)
	to := strings.Split(receiver, ";")

	var err error
	if SMTPPort == 465 {
		tlsConfig := &tls.Config{
			InsecureSkipVerify: true,

@@ -5,7 +5,6 @@ import (
	"encoding/json"
	"github.com/gin-gonic/gin"
	"io"